Recently, OpenAI released its newest AI model, GPT-5.4 (1). While much of the praise has focused on its overall performance, I want to highlight the length of its context window. The context window is the amount of information a generative AI model can process in a single request. GPT-5.4 now supports 1M (one million) tokens. With its rival Opus 4.6 also at 1M and Google Gemini having reached 1M two years ago, the frontier models from all of the "Big Three" now offer 1M-token context windows. It seems fair to say that AI has entered the Long-Context Era.

How will this impact the development of AI agents? Let’s explore.

1. What is Many-Shot In-Context Learning?

When you ask ChatGPT, "What is the capital of Japan?" and it replies, "Tokyo," that question or instruction is called a prompt. However, you can input much more than just a short prompt.

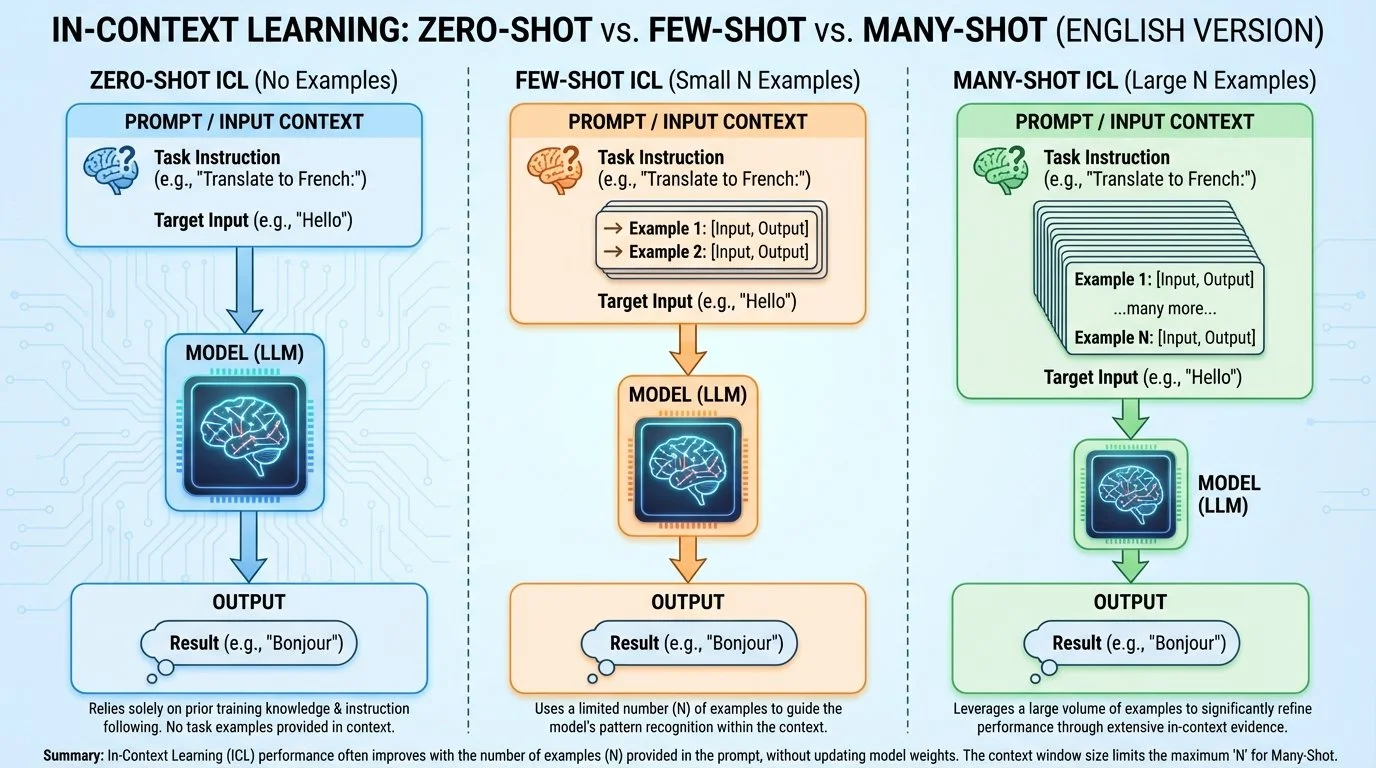

For example, if you first provide a worked example, such as "Where was the World Expo held in Japan?" followed by "Osaka", and then ask your actual question, accuracy is known to improve. This technique is called In-Context Learning. When the number of examples grows beyond roughly 10 and you supply a large volume of data, it is referred to as Many-Shot In-Context Learning. Here is a brief summary.

In-Context Learning
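To make the idea concrete, here is a minimal sketch of how such a prompt could be assembled. The `build_prompt` helper and the "Q:/A:" layout are my own illustrative assumptions, not a format required by any particular model.

```python
def build_prompt(examples, question):
    """Place (question, answer) example pairs before the real question."""
    lines = []
    for q, a in examples:
        lines.append(f"Q: {q}")
        lines.append(f"A: {a}")
    # The actual question comes last, with the answer left open for the model.
    lines.append(f"Q: {question}")
    lines.append("A:")
    return "\n".join(lines)


# One example pair taken from the text above.
examples = [("Where was the World Expo held in Japan?", "Osaka")]
prompt = build_prompt(examples, "What is the capital of Japan?")
print(prompt)
```

With an empty example list this reduces to a plain zero-shot prompt; with hundreds or thousands of pairs it becomes a many-shot prompt.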

2. Challenging a 20-Class Classification Task Using Bank Complaint Data

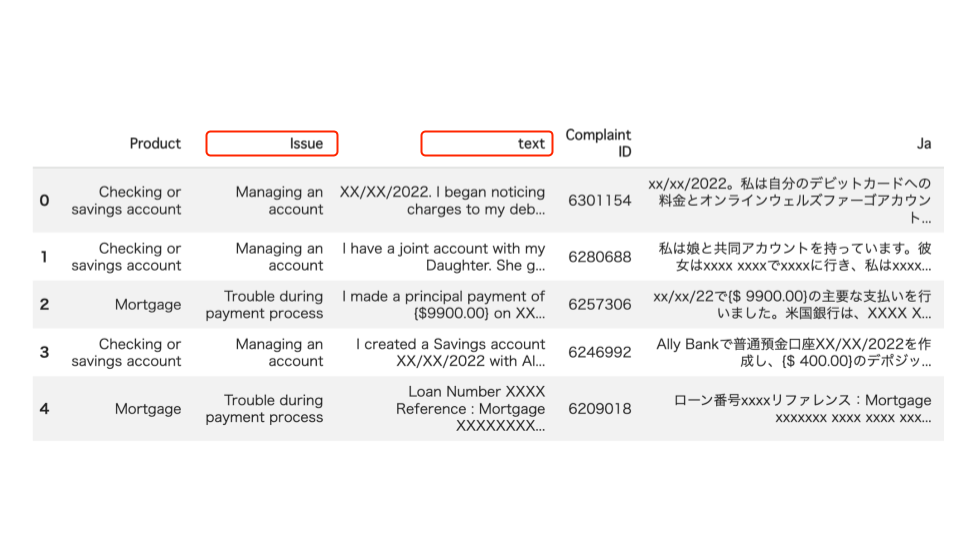

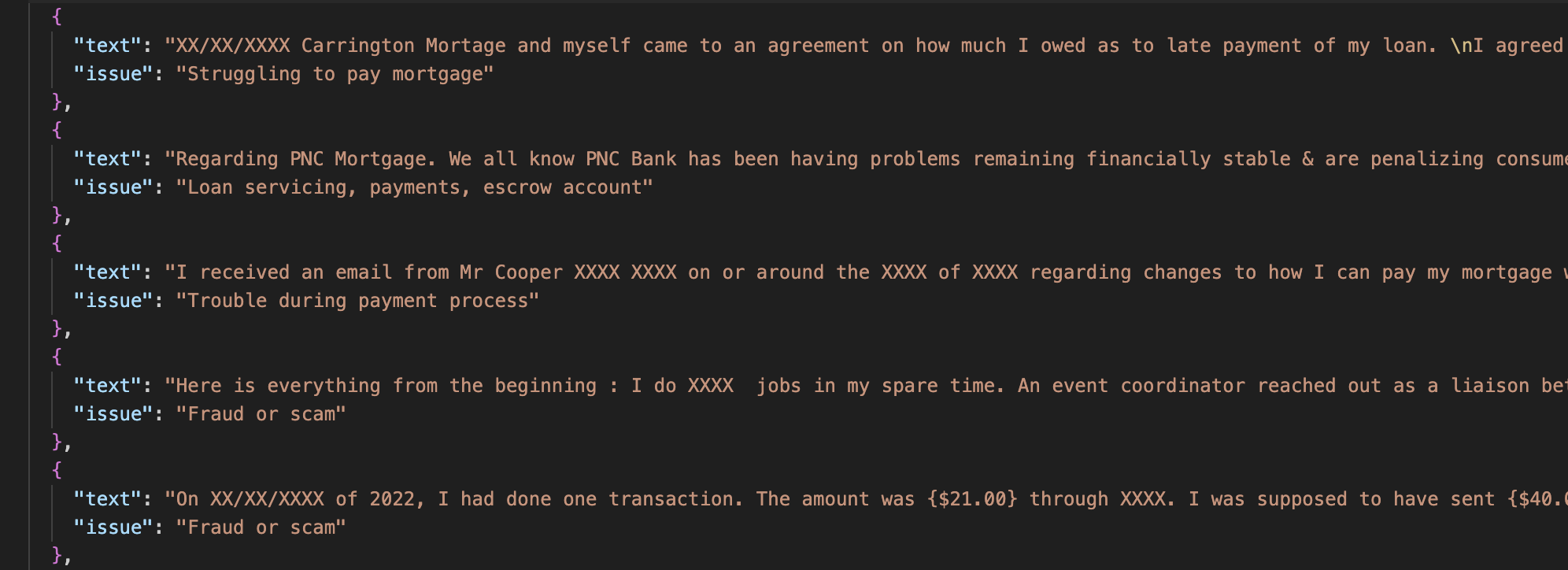

To measure the effectiveness of Many-Shot In-Context Learning, I tackled a difficult 20-class classification task using bank complaint data (2). The dataset contains an "issue" column describing why each complaint occurred. The goal is to read the "text" column and select the correct issue from 20 possible categories. For this, I used Gemini 3.1 Flash-Lite (3).

Banking complaints dataset
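As a rough sketch of how this task can be framed for a model, the snippet below builds a classification prompt from a complaint text and a label list, then maps the raw reply back to a known label. The function names, the prompt wording, and the three labels are hypothetical placeholders; they are not the 20 categories or the prompt used in the experiment.

```python
# Hypothetical labels for illustration only; the real task has 20 categories.
LABELS = ["Managing an account", "Closing an account", "Problem with a payment"]


def build_zero_shot_prompt(text, labels):
    """Ask for exactly one label from the list, with no examples."""
    label_block = "\n".join(f"- {label}" for label in labels)
    return (
        "Classify the bank complaint into exactly one issue from the list.\n"
        f"Issues:\n{label_block}\n\n"
        f"Complaint: {text}\n"
        "Issue:"
    )


def parse_label(response, labels):
    """Map raw model output to a known label, or None if nothing matches."""
    cleaned = response.strip().lower()
    for label in labels:
        if cleaned == label.lower():
            return label
    return None


prompt = build_zero_shot_prompt("The bank closed my account without notice.", LABELS)
print(prompt)
print(parse_label("  closing an account\n", LABELS))
```

Returning `None` for unrecognized output makes it easy to count such replies as errors instead of silently guessing a category.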

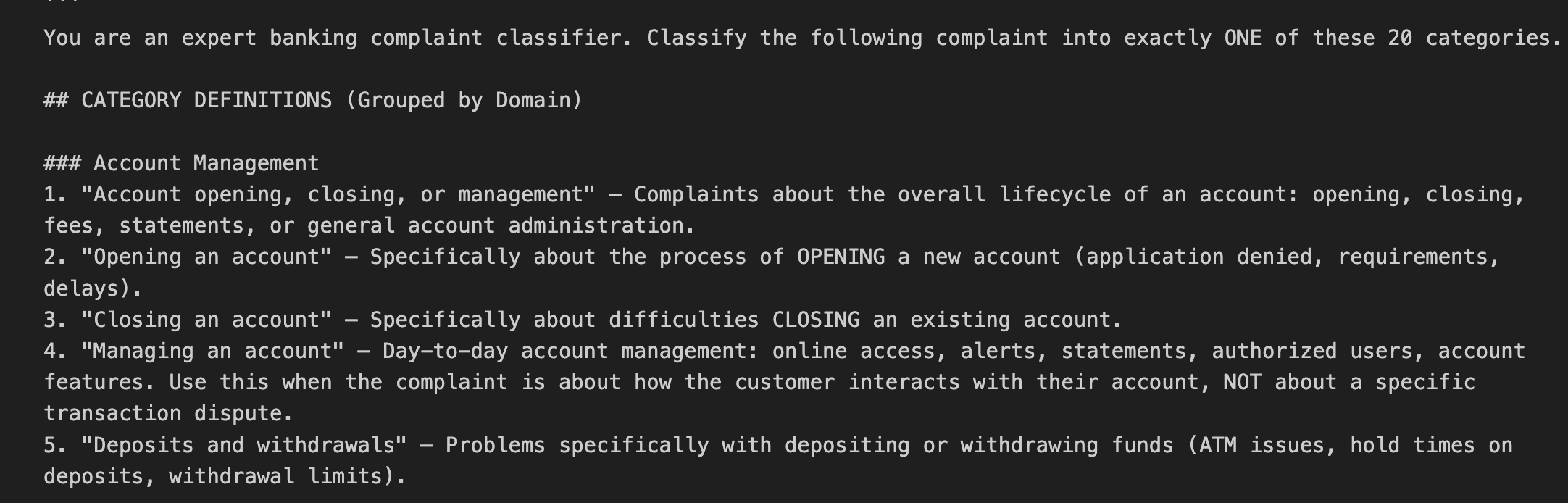

Rather than using a simple prompt like "Please classify this," I asked the AI itself to "create the optimal prompt." The result was a highly detailed set of instructions, what you might call a "Prompt Powered by AI."

Prompt powered by AI

I first ran the task Zero-shot (providing no examples), using this enhanced prompt. Unfortunately, the accuracy was only 46%. Because the model is wrong more than half the time, this setup is not yet viable for practical business use.

Zero-Shot accuracy
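For reference, accuracy here is simply the share of complaints whose predicted issue matches the true one. The sketch below shows the calculation; the test-set size of 100 and the dummy labels are assumptions chosen only to reproduce the 46% figure, not the actual evaluation data.

```python
def accuracy(predictions, gold_labels):
    """Fraction of predictions that exactly match the gold labels."""
    if len(predictions) != len(gold_labels):
        raise ValueError("predictions and gold_labels must have the same length")
    if not gold_labels:
        raise ValueError("cannot compute accuracy on an empty test set")
    correct = sum(p == g for p, g in zip(predictions, gold_labels))
    return correct / len(gold_labels)


# Hypothetical data: 46 correct answers out of 100 test complaints.
gold = ["a"] * 100
preds = ["a"] * 46 + ["b"] * 54
print(f"{accuracy(preds, gold):.0%}")
```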

3. Executing Many-Shot In-Context Learning with 1,000 Samples

Next, I implemented Many-Shot In-Context Learning by providing 1,000 examples alongside the prompt. While the underlying process remains the same as the Zero-shot approach, the volume of information is massive. The following are the first five examples.

Many-Shot samples
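The snippet below is a minimal sketch of how a 1,000-example prompt might be put together. The "Complaint:/Issue:" layout, the helper names, the synthetic rows, and the four-characters-per-token rule of thumb are all assumptions for illustration; a real tokenizer would give the true token count.

```python
def build_many_shot_prompt(instructions, examples, query_text):
    """Prepend labeled examples to the complaint we want classified."""
    parts = [instructions.strip(), ""]
    for ex in examples:
        parts.append(f"Complaint: {ex['text']}")
        parts.append(f"Issue: {ex['issue']}")
        parts.append("")
    # The query uses the same layout, with the label left open.
    parts.append(f"Complaint: {query_text}")
    parts.append("Issue:")
    return "\n".join(parts)


def rough_token_estimate(prompt, chars_per_token=4):
    """Very rough size check (assumed heuristic, not a real tokenizer)."""
    return len(prompt) // chars_per_token


# Synthetic stand-ins for the 1,000 labeled complaints.
examples = [
    {"text": f"Synthetic complaint number {i}.", "issue": f"Issue {i % 20}"}
    for i in range(1000)
]
prompt = build_many_shot_prompt(
    "Classify each complaint into one issue.",
    examples,
    "My card was charged twice.",
)
print(rough_token_estimate(prompt))
```

A size check like this is useful before sending the request, because real complaint texts are far longer than these synthetic rows and the prompt has to fit inside the model's context window.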

The results were dramatic: accuracy jumped from 46% to 70%, a gain of 24 percentage points. This clearly demonstrates the power of the "Many-Shot" approach on this task.

Many-Shot accuracy

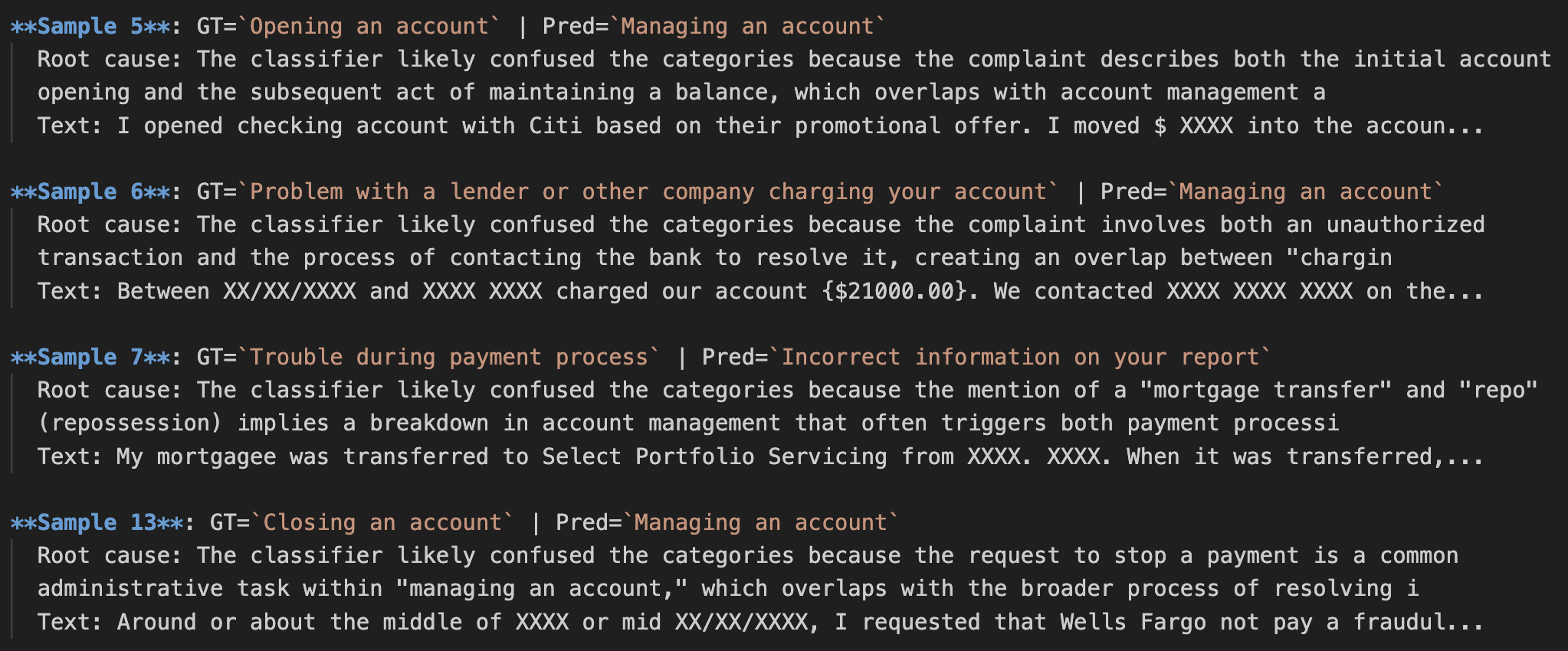

However, with a 30% error rate, there is still room for improvement. I had an AI Agent analyze why the errors occurred and generate a report. The insights gained from this analysis are highly valuable for further refinement.

Root cause analysis
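A simple complement to an agent-written report is to count which categories get confused with each other most often. This is not the analysis the AI Agent performed; it is a plain tabulation sketch, and the function name and toy labels are hypothetical.

```python
from collections import Counter


def top_confusions(gold_labels, predictions, k=3):
    """Return the k most frequent (gold, predicted) pairs among the errors."""
    pairs = Counter(
        (g, p) for g, p in zip(gold_labels, predictions) if g != p
    )
    return pairs.most_common(k)


# Toy labels for illustration only.
gold = ["A", "A", "B", "B", "C"]
pred = ["B", "B", "B", "A", "C"]
for (g, p), count in top_confusions(gold, pred):
    print(f"gold={g} predicted={p}: {count}")
```

Pairs that dominate this list are natural candidates for clearer category definitions in the prompt or for more examples of those categories.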

Conclusion

There are several ways to improve the accuracy of generative AI, but as 1M-token context windows become the standard, Many-Shot In-Context Learning is set to become a major focal point. At ToshiStats, we plan to continue evolving this methodology.

Stay tuned!

You can also enjoy our video news, ToshiStats AI Weekly Review, from this link!

1) Introducing GPT-5.4, OpenAI, March 5, 2026

2) Consumer Complaint Database

3) Gemini 3.1 Flash-Lite: Built for intelligence at scale, Google, March 3, 2026

Copyright © 2026 ToshiStats Co., Ltd. All rights reserved.

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.