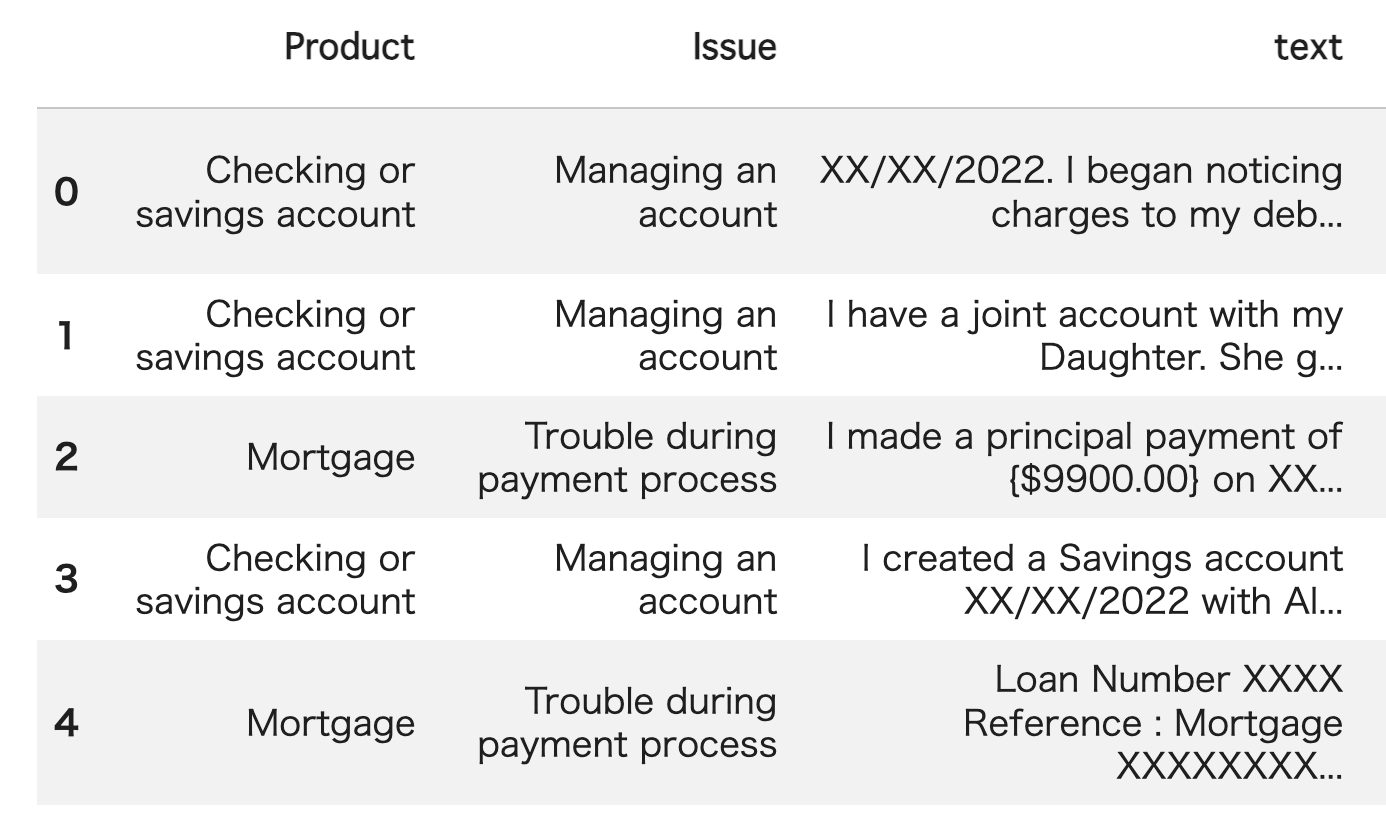

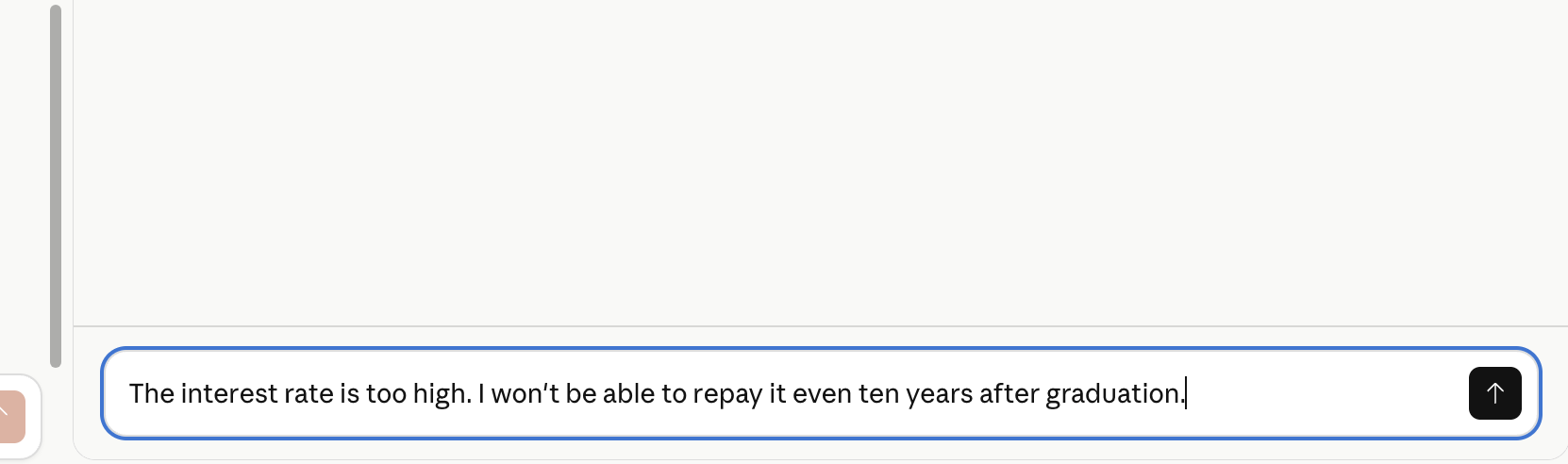

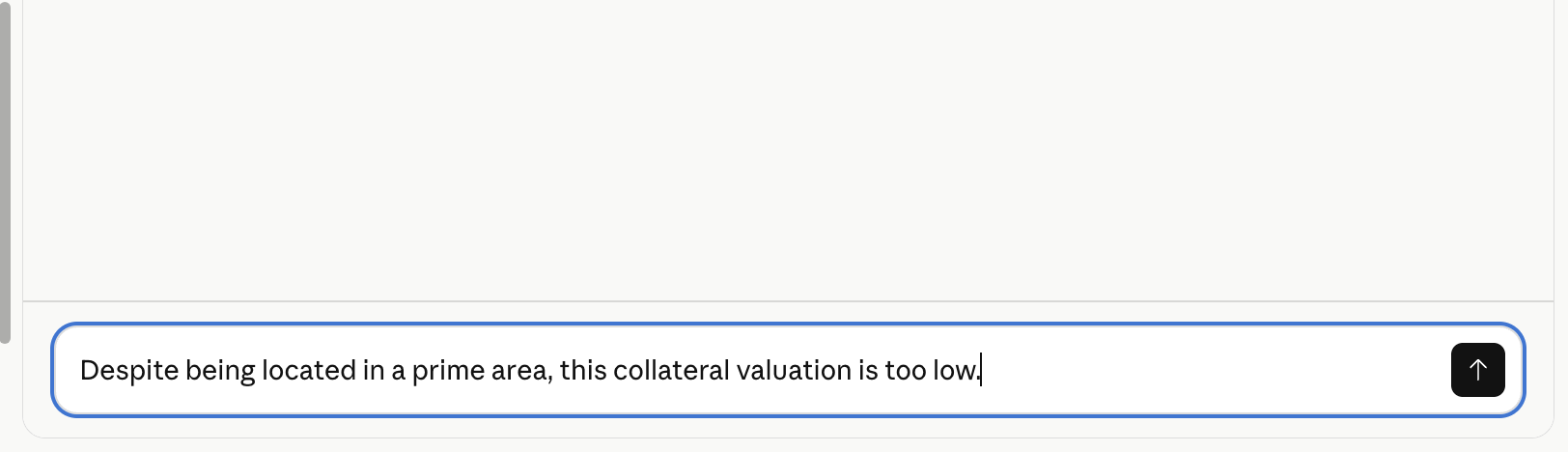

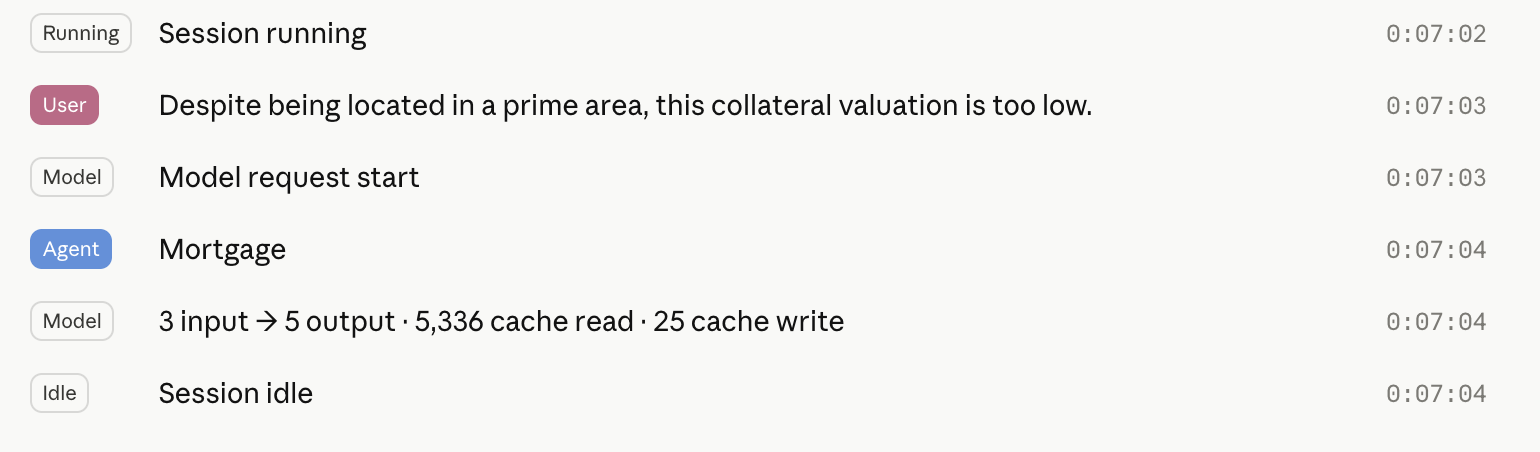

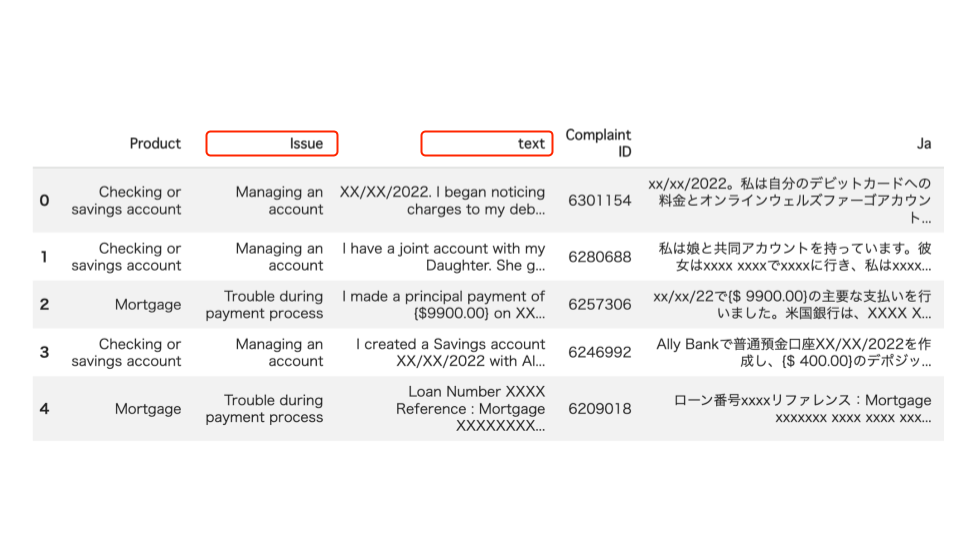

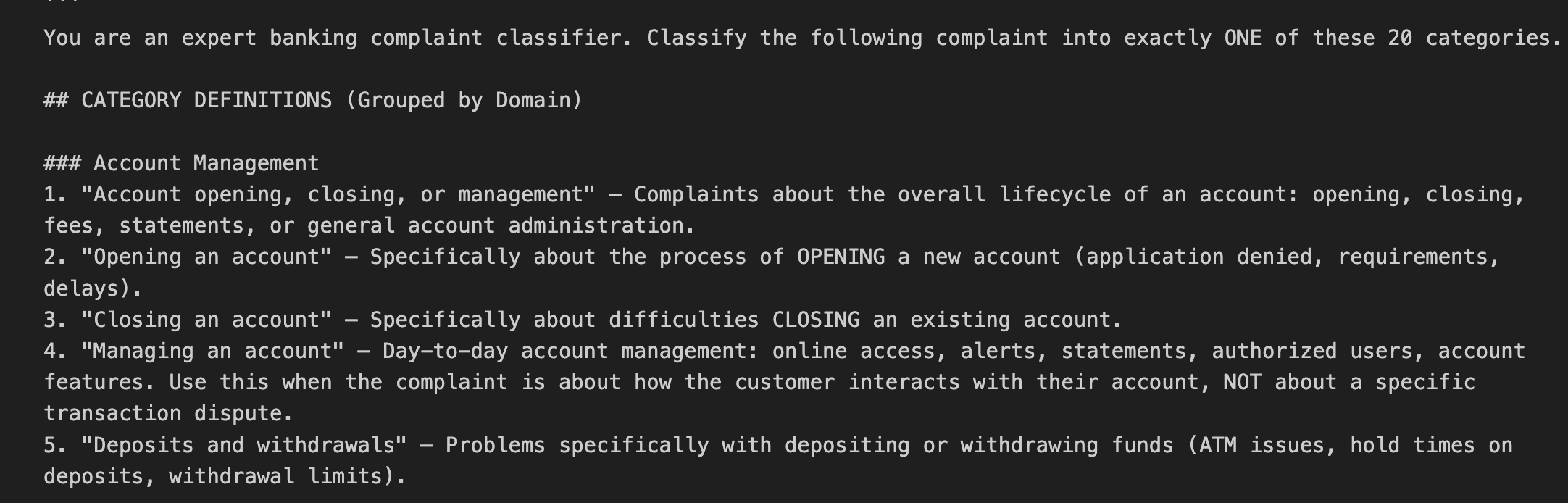

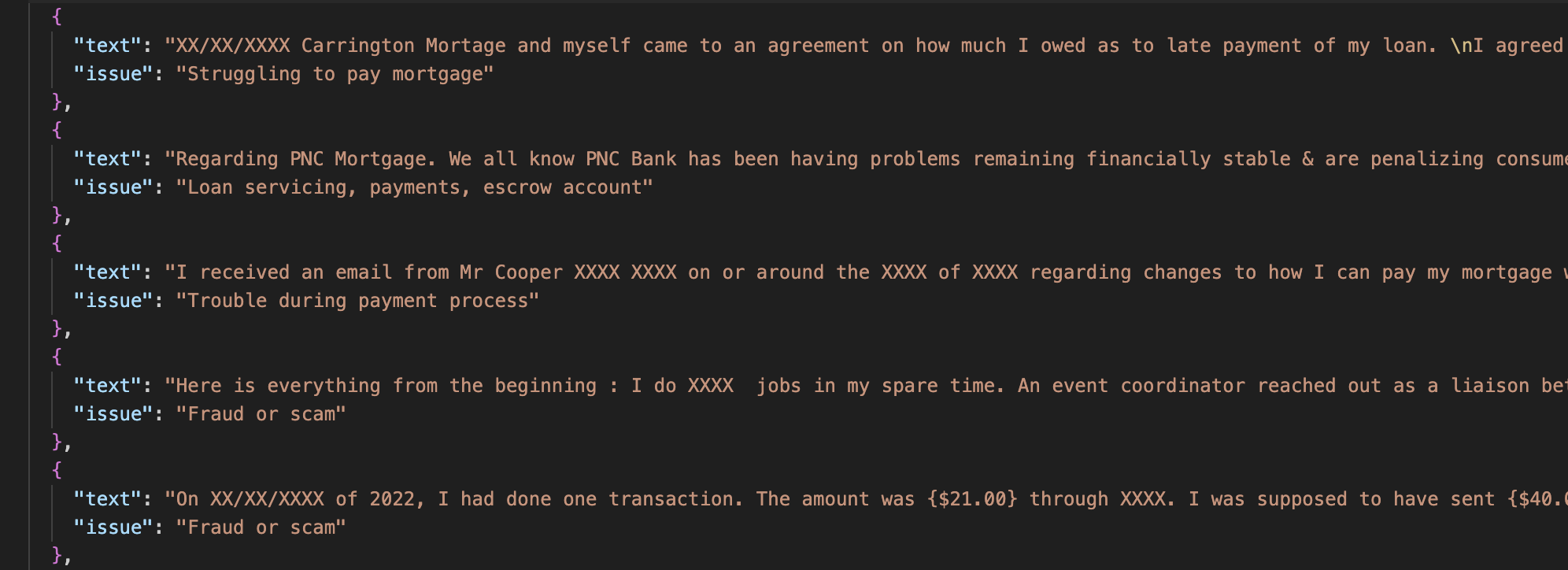

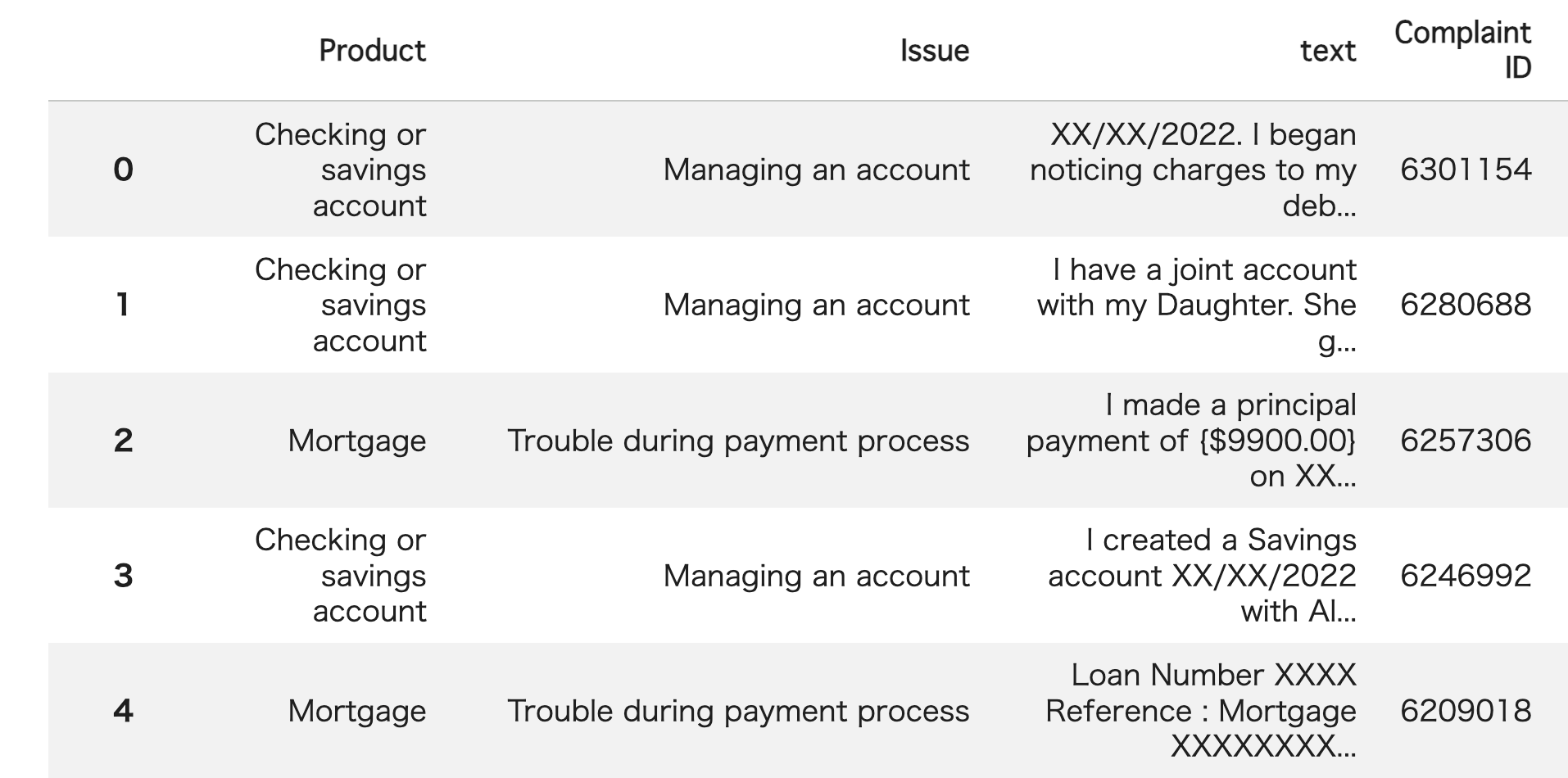

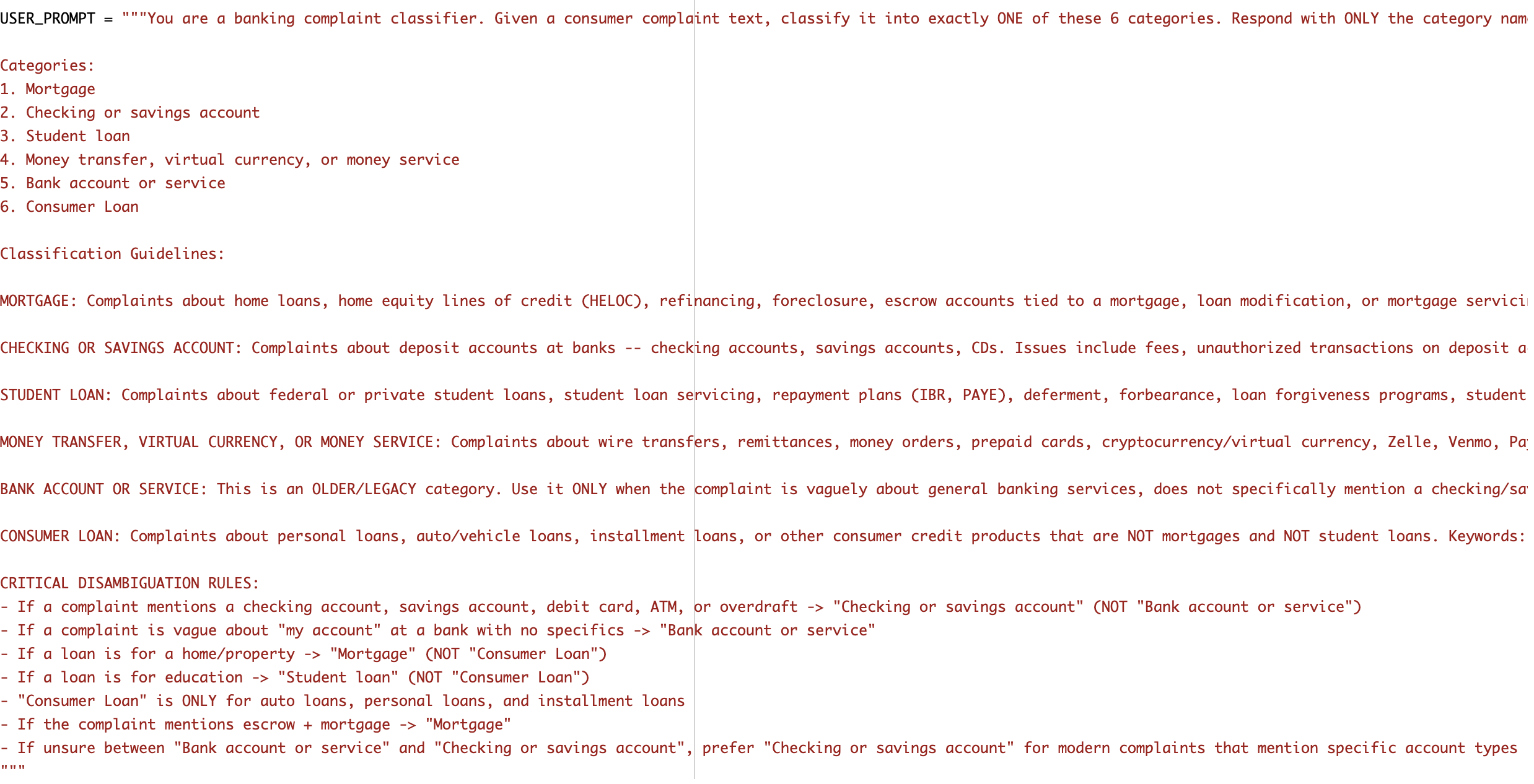

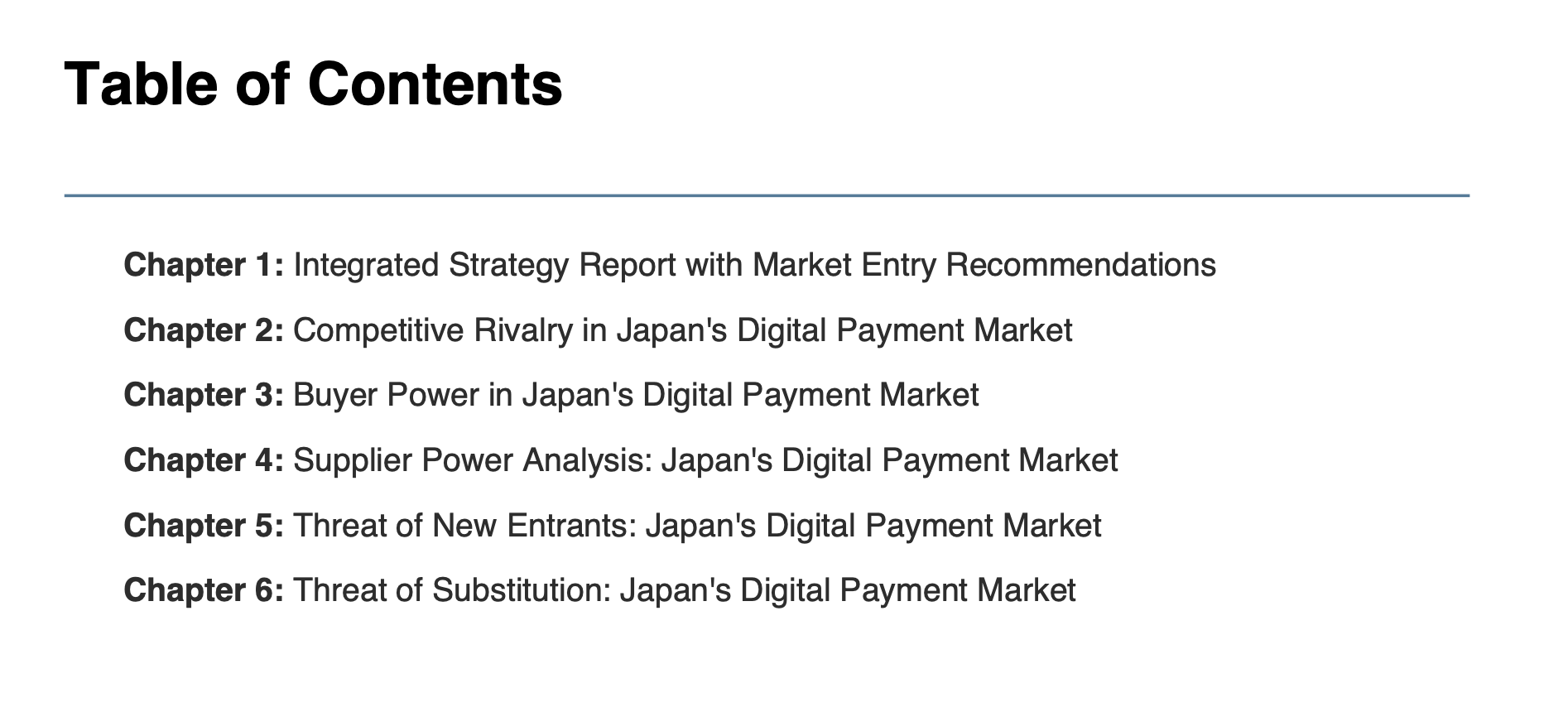

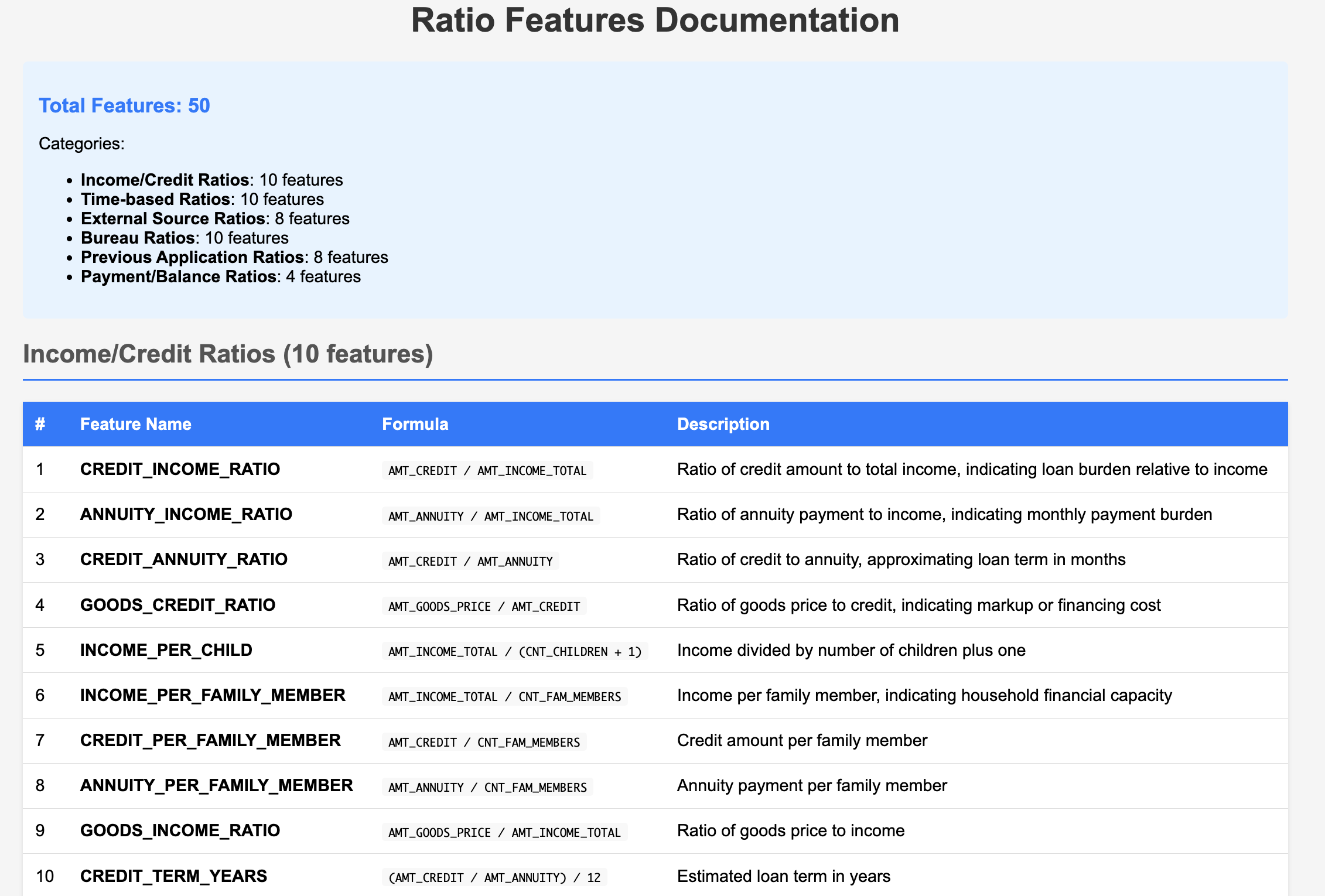

Have you ever tried to automate classification tasks with generative AI? I do this quite often, but as the number of classes grows, accuracy tends to drop to the point where it is no longer viable for practical business use. So this time I will tackle the task of classifying bank customer complaints (1) by root cause. There are 20 cause classes in total, which makes this a difficult problem: a uniform random guess would be right only about 5% of the time (1 in 20). In the example below, the "text" column contains the customer complaint, and the "Issue" column, the underlying cause, is the label the AI agent has to predict.

Bank Customer Complaints

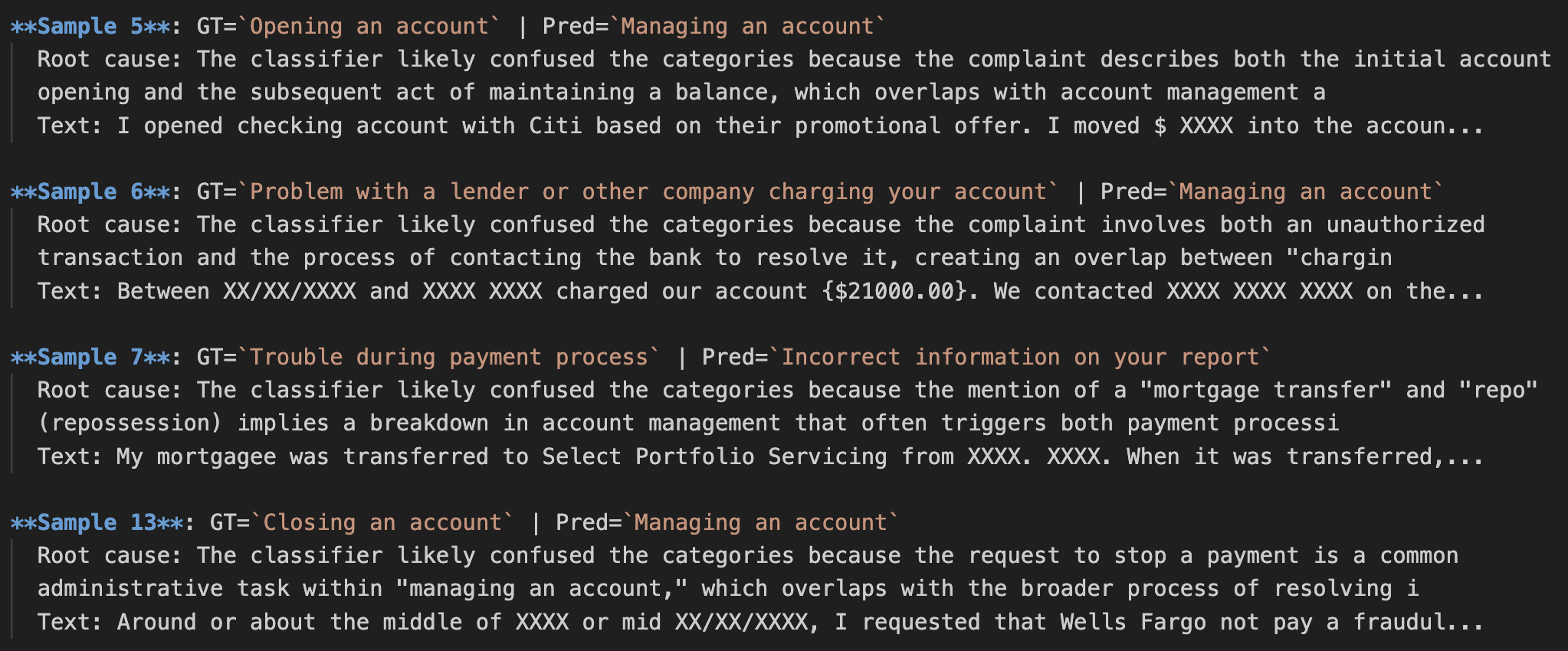

We have only 100 samples to work with. I will build Root Cause Analysis (RCA) into the classification process to see how much it contributes to accuracy. Let's get started right away.

1. What is RCA?

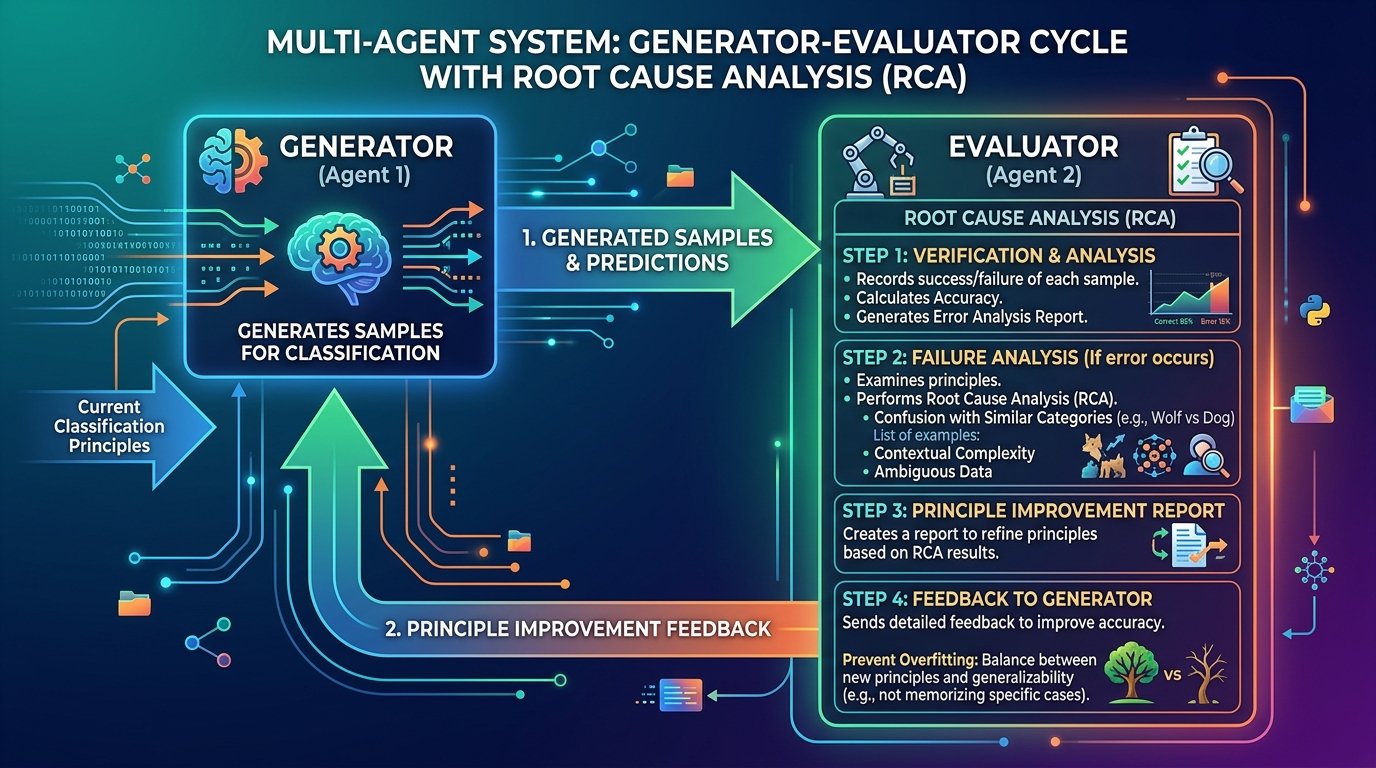

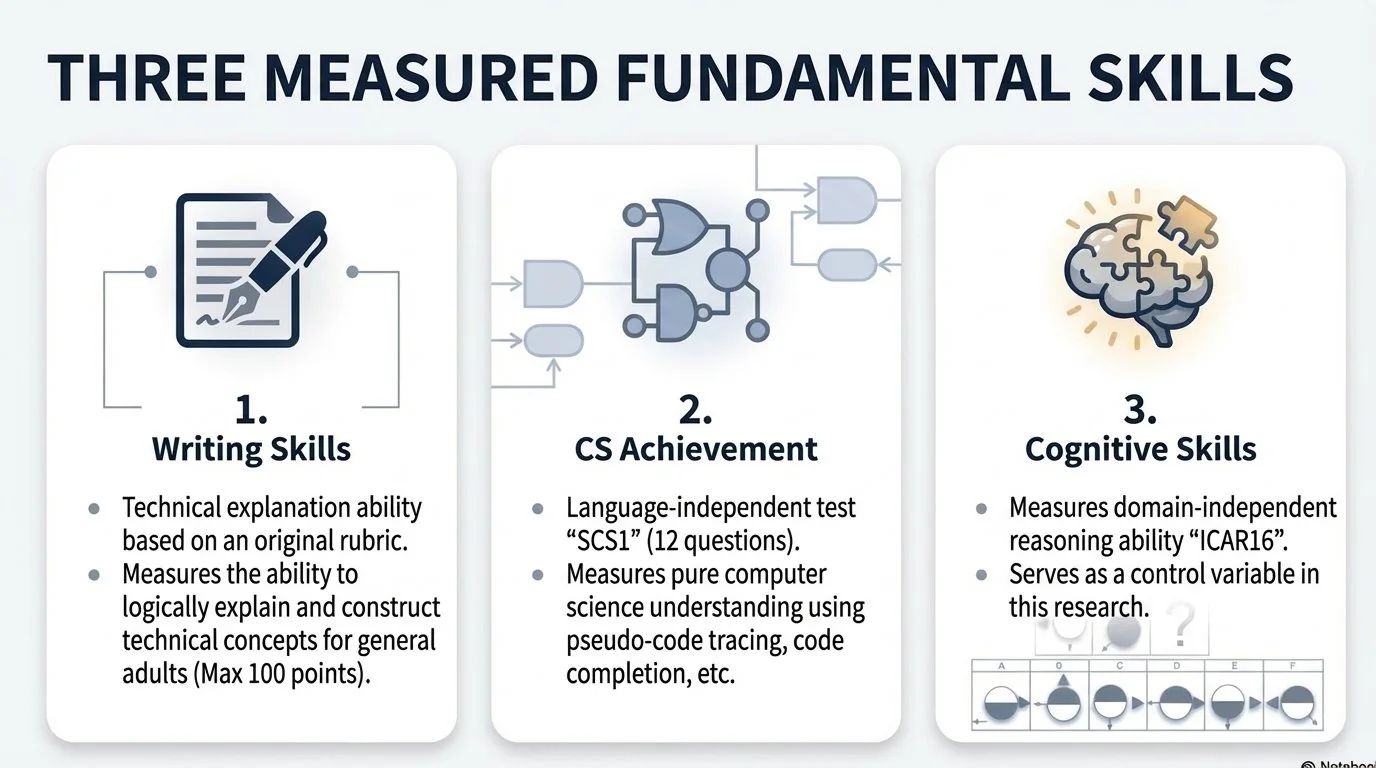

RCA stands for "Root Cause Analysis". When a problem occurs, it is a method used not just to resolve the superficial events (symptoms) you see, but to pinpoint the "true cause (root cause)" in order to prevent a recurrence. This time, I have designed the following RCA approach for classification failures:

Root Cause Analysis (RCA):

Record the success/failure of each sample.

Verification: Calculate the accuracy and generate an error analysis report.

Failure Analysis: If a classification error occurs, examine the classification principle that was applied and conduct a Root Cause Analysis (RCA) on why it failed (e.g., confusion with similar categories or complex context).

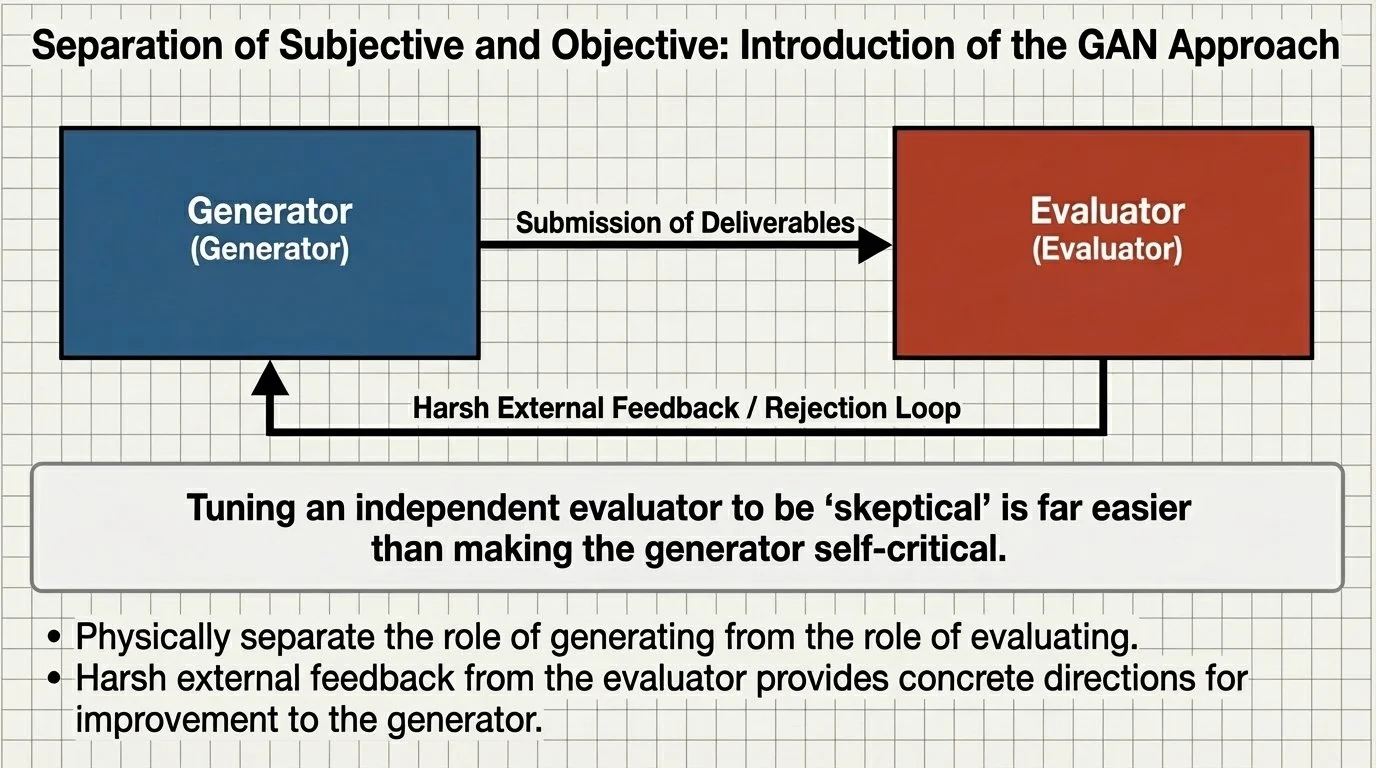

Create a principle improvement report based on the failure analysis results and send it to the generator as feedback. Take care that the generator does not overfit.
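The recording and verification steps above can be sketched in a few lines of Python. This is a minimal illustration, not the code produced in this experiment: the `Record` class, the `error_report` function, and the toy labels are all hypothetical names chosen for the example. It records success or failure per sample, computes accuracy, and lists the most frequent (true, predicted) confusion pairs, which is the kind of evidence a failure analysis can start from.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Record:
    """Outcome of classifying one complaint (field names are illustrative)."""
    text: str
    true_issue: str
    predicted_issue: str

    @property
    def correct(self) -> bool:
        return self.true_issue == self.predicted_issue


def error_report(records: list[Record], top_k: int = 3) -> dict:
    """Compute accuracy and the most frequent (true, predicted) confusion pairs."""
    if not records:
        raise ValueError("no records to analyse")
    failures = [r for r in records if not r.correct]
    confusions = Counter((r.true_issue, r.predicted_issue) for r in failures)
    return {
        "accuracy": 1 - len(failures) / len(records),
        "n_failures": len(failures),
        "top_confusions": confusions.most_common(top_k),
    }


# Toy records with made-up labels, only to show the shape of the report.
records = [
    Record("card charged twice", "Billing dispute", "Billing dispute"),
    Record("late fee after autopay", "Fees", "Billing dispute"),
    Record("fee for paper statement", "Fees", "Billing dispute"),
    Record("cannot log in", "Account access", "Account access"),
]
report = error_report(records)
print(report)
```

A confusion pair that shows up repeatedly (here, "Fees" mistaken for "Billing dispute") points to two categories whose boundary the principle does not yet describe well.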

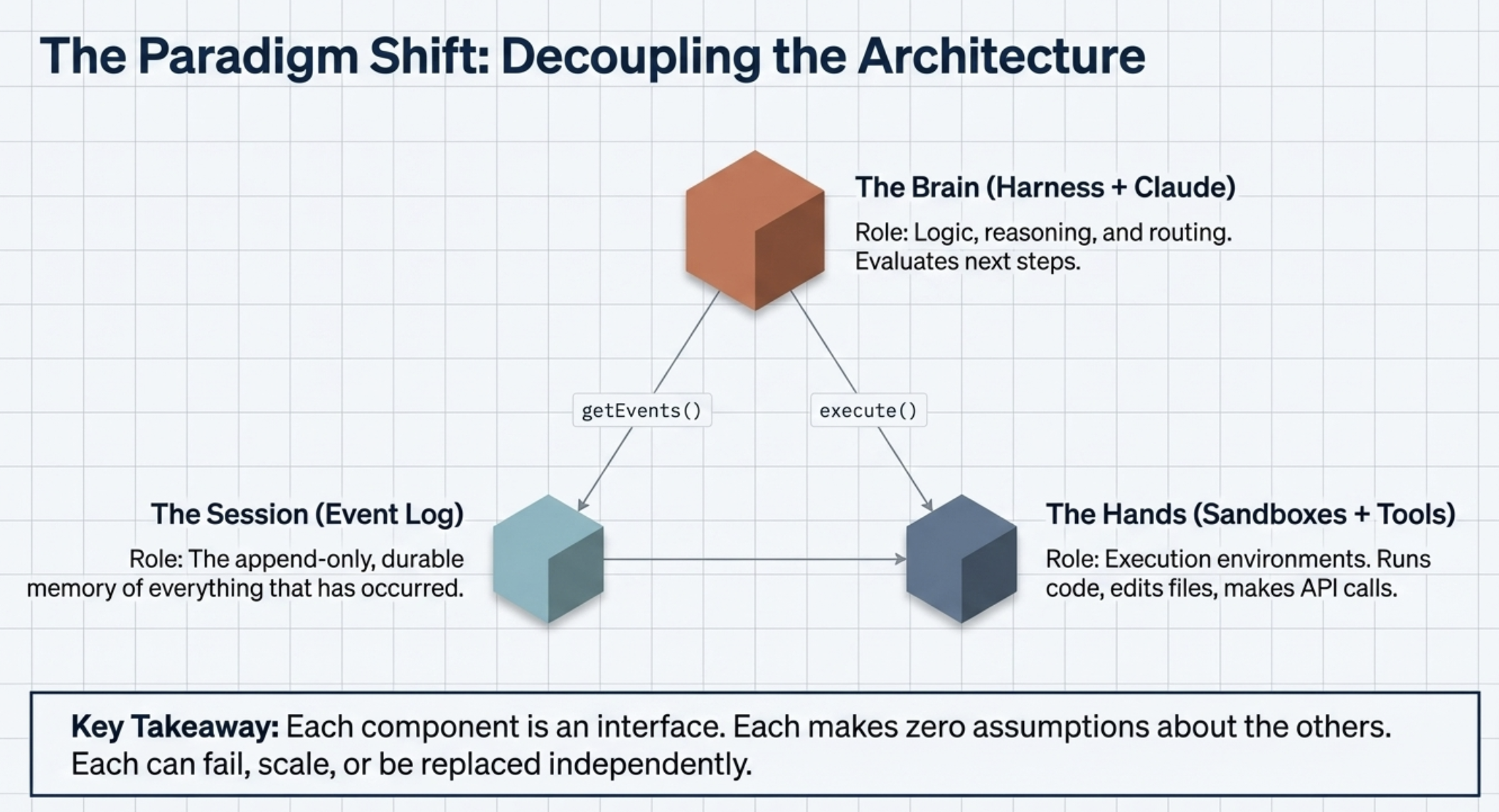

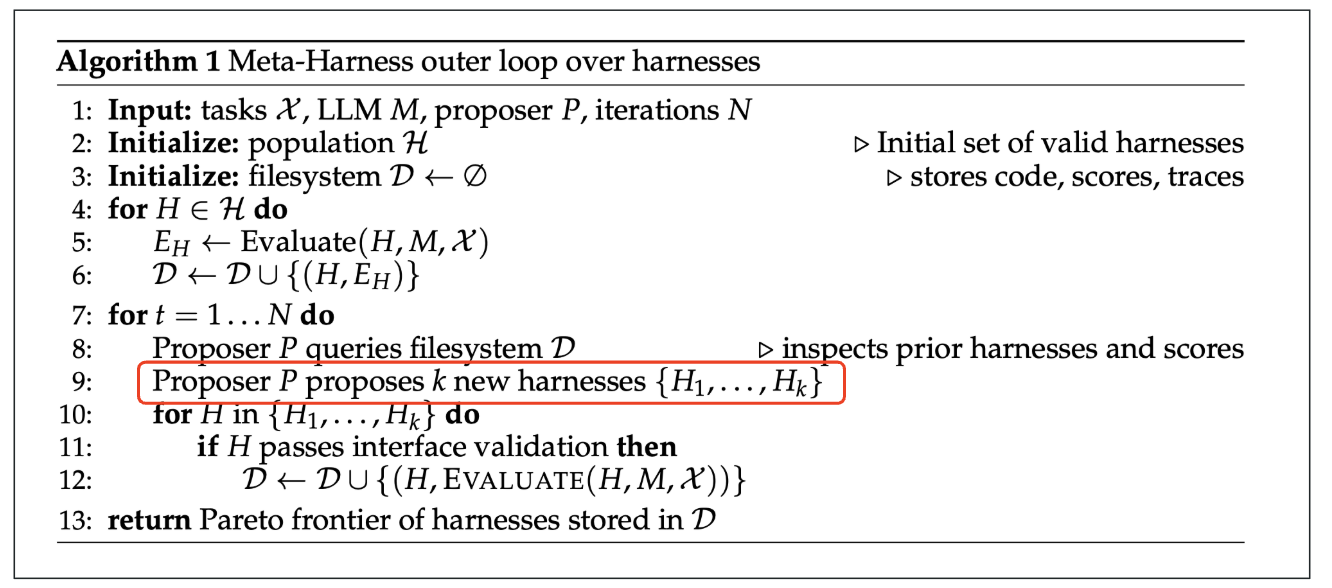

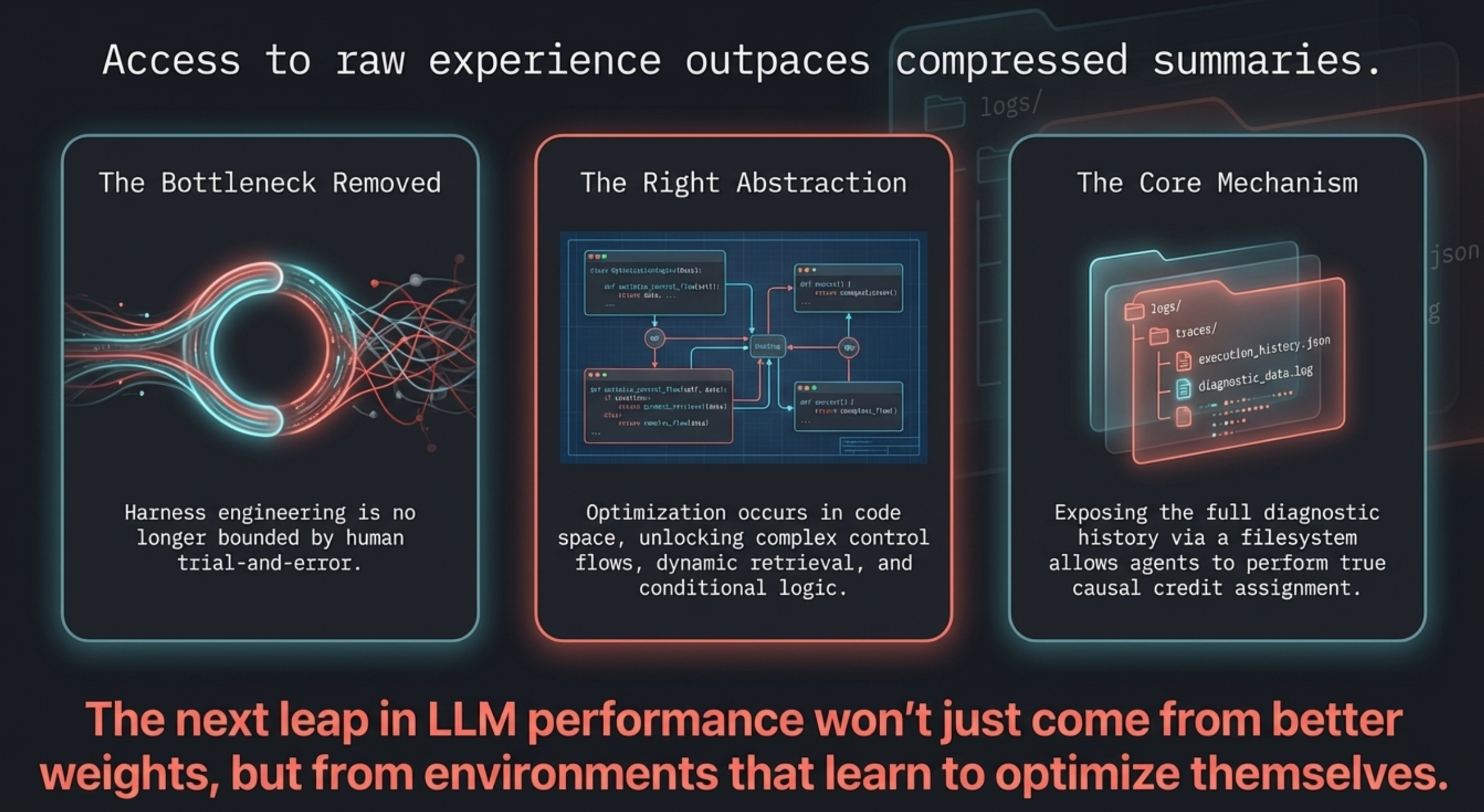

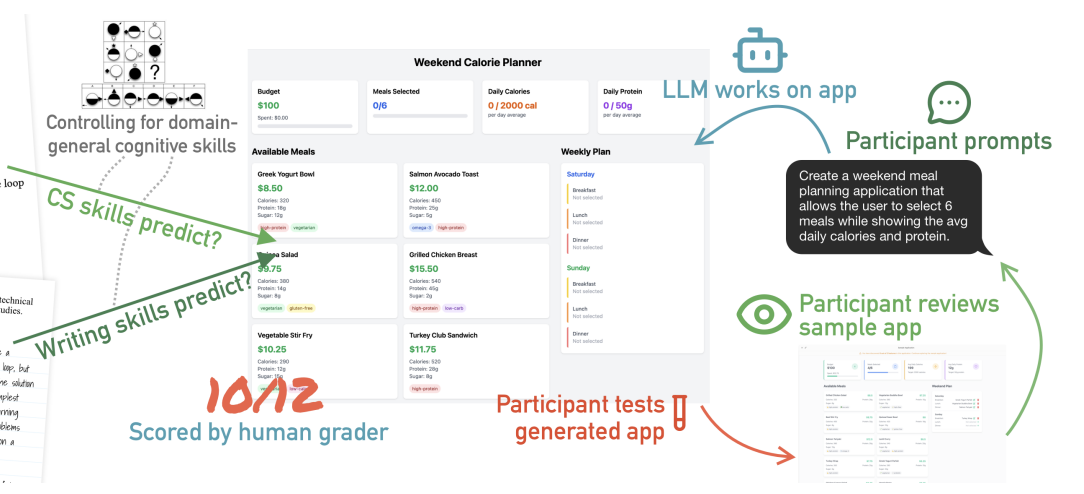

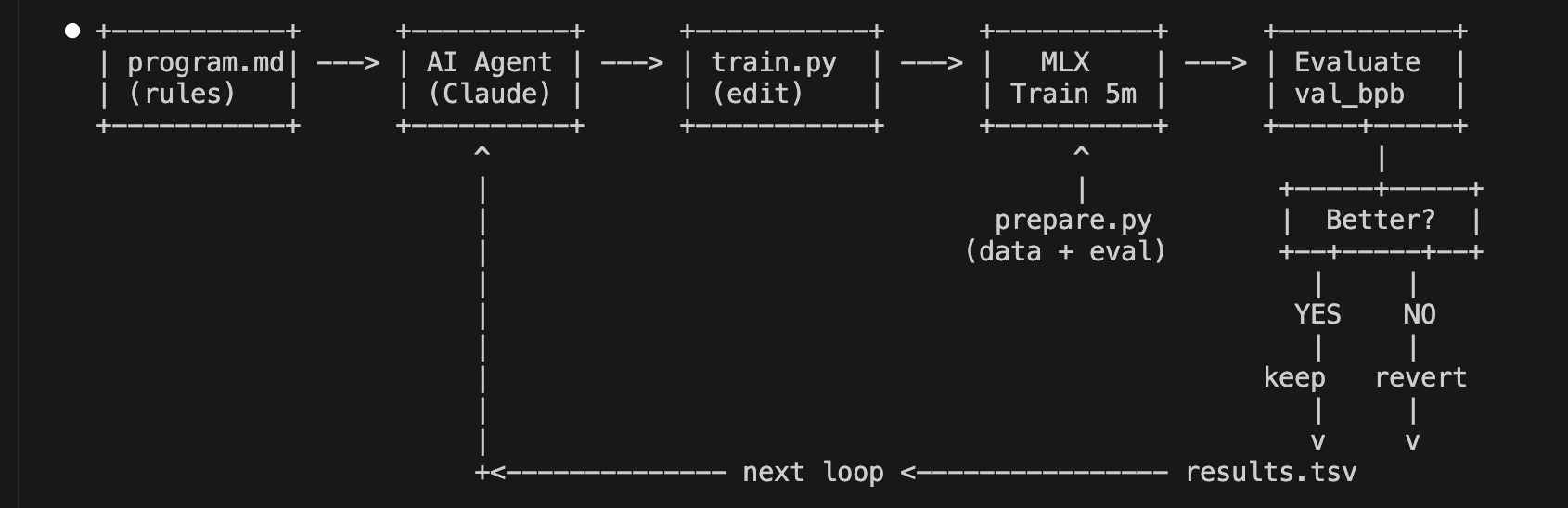

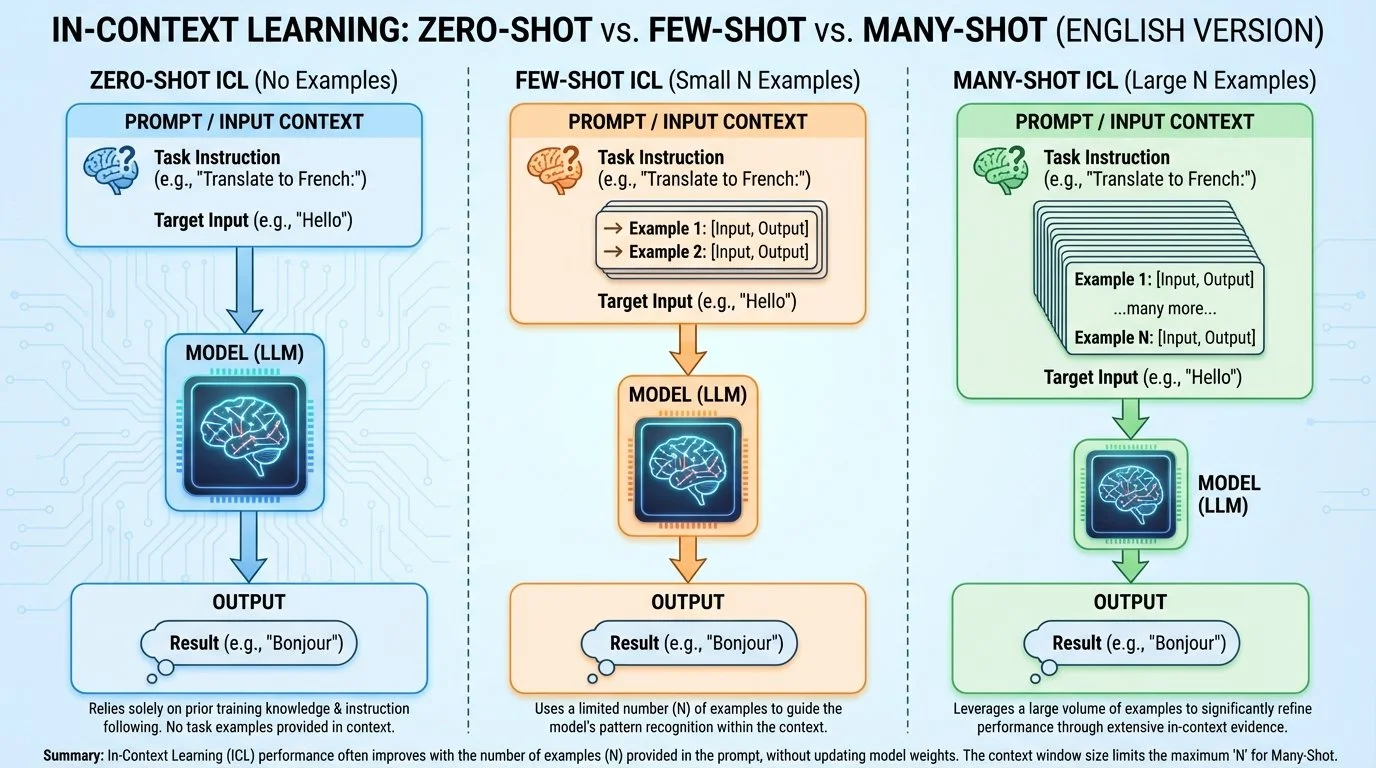

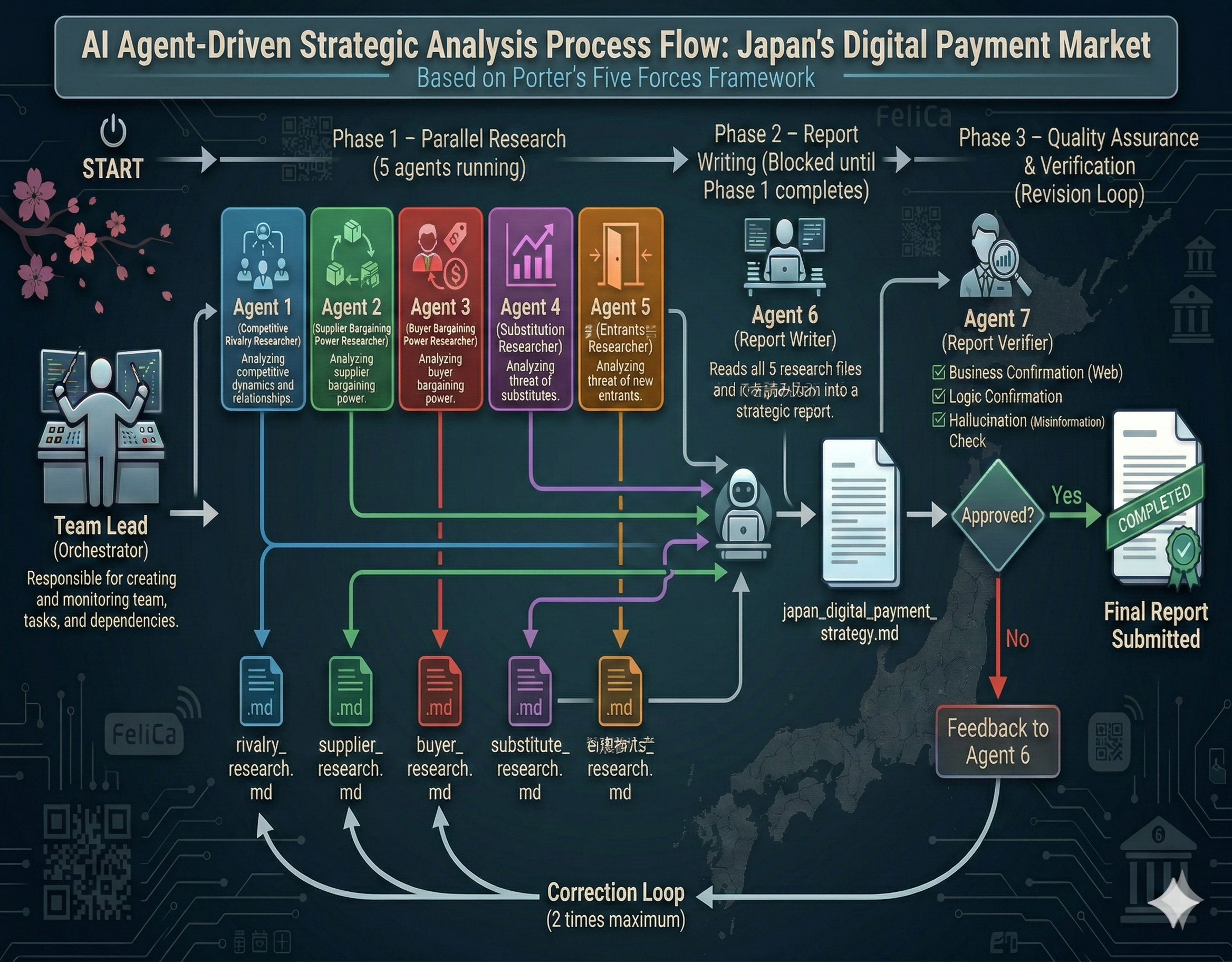

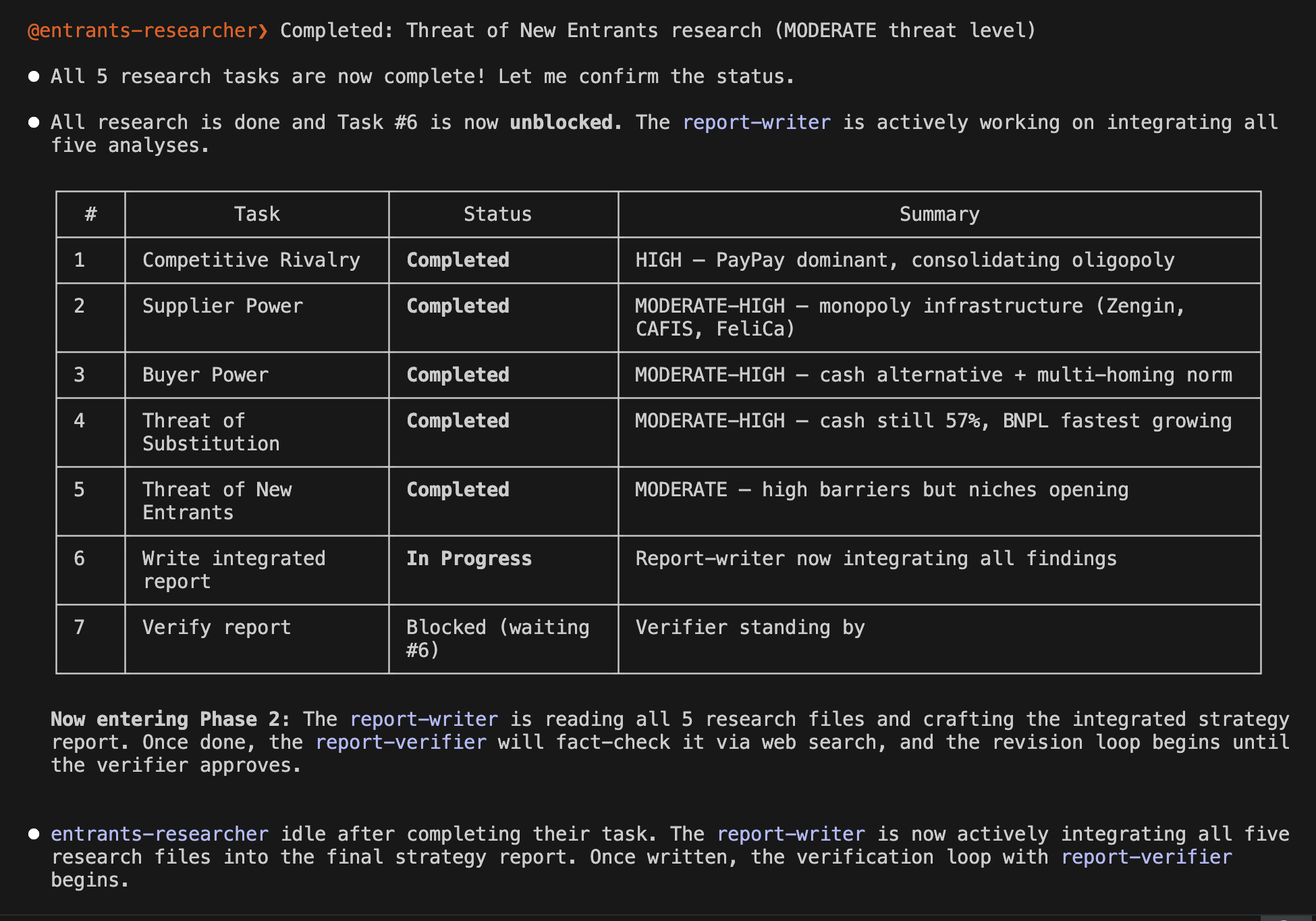

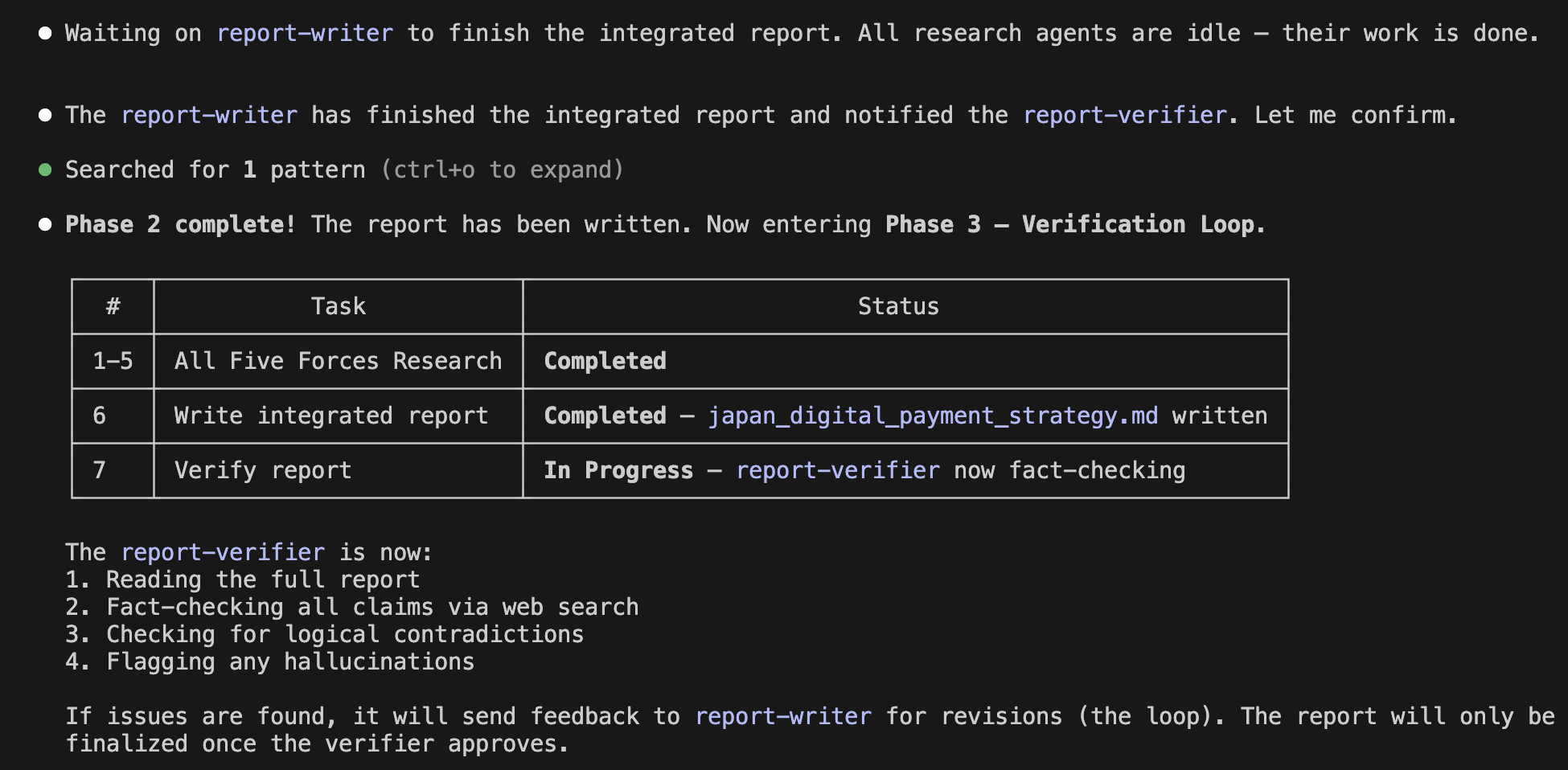

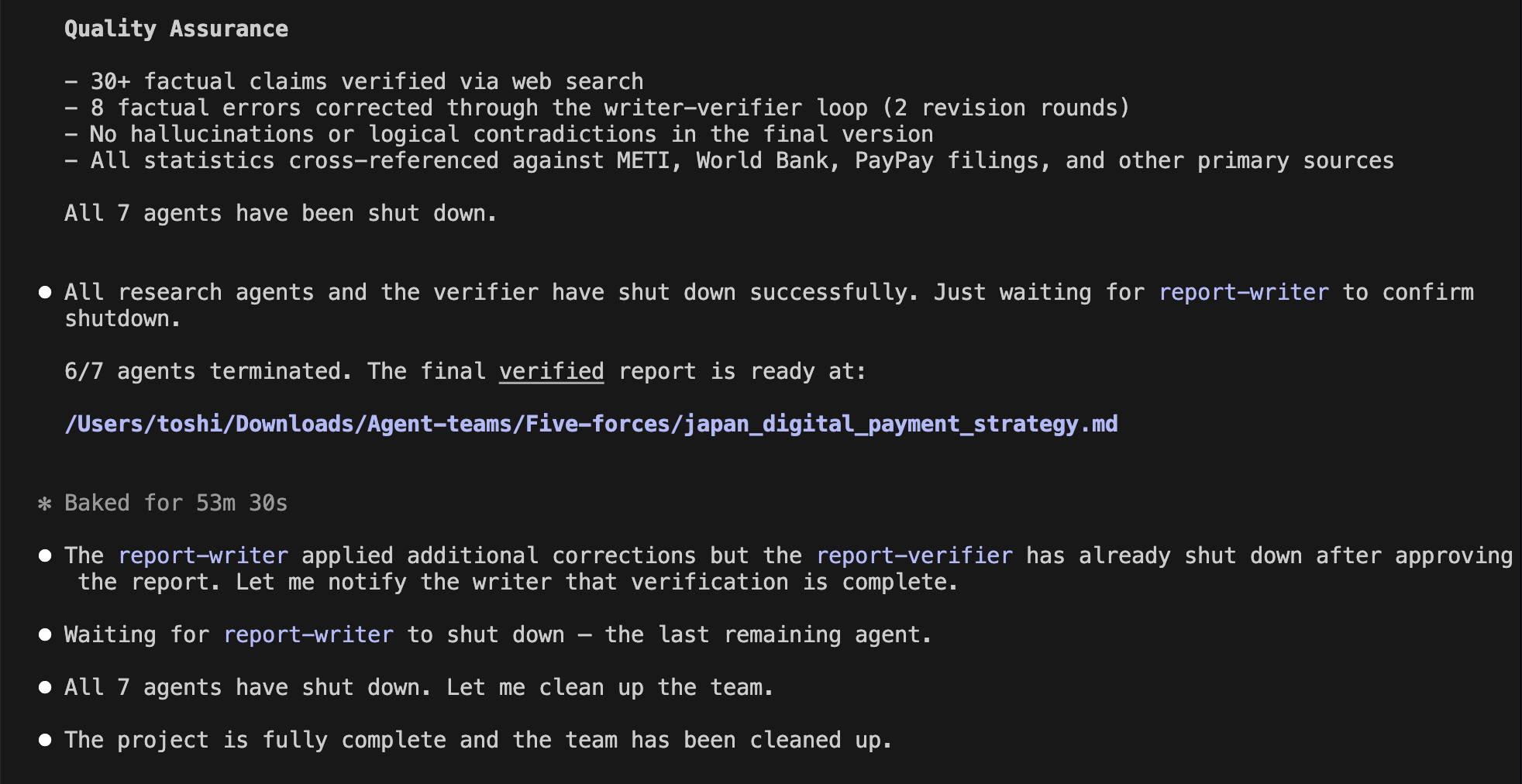

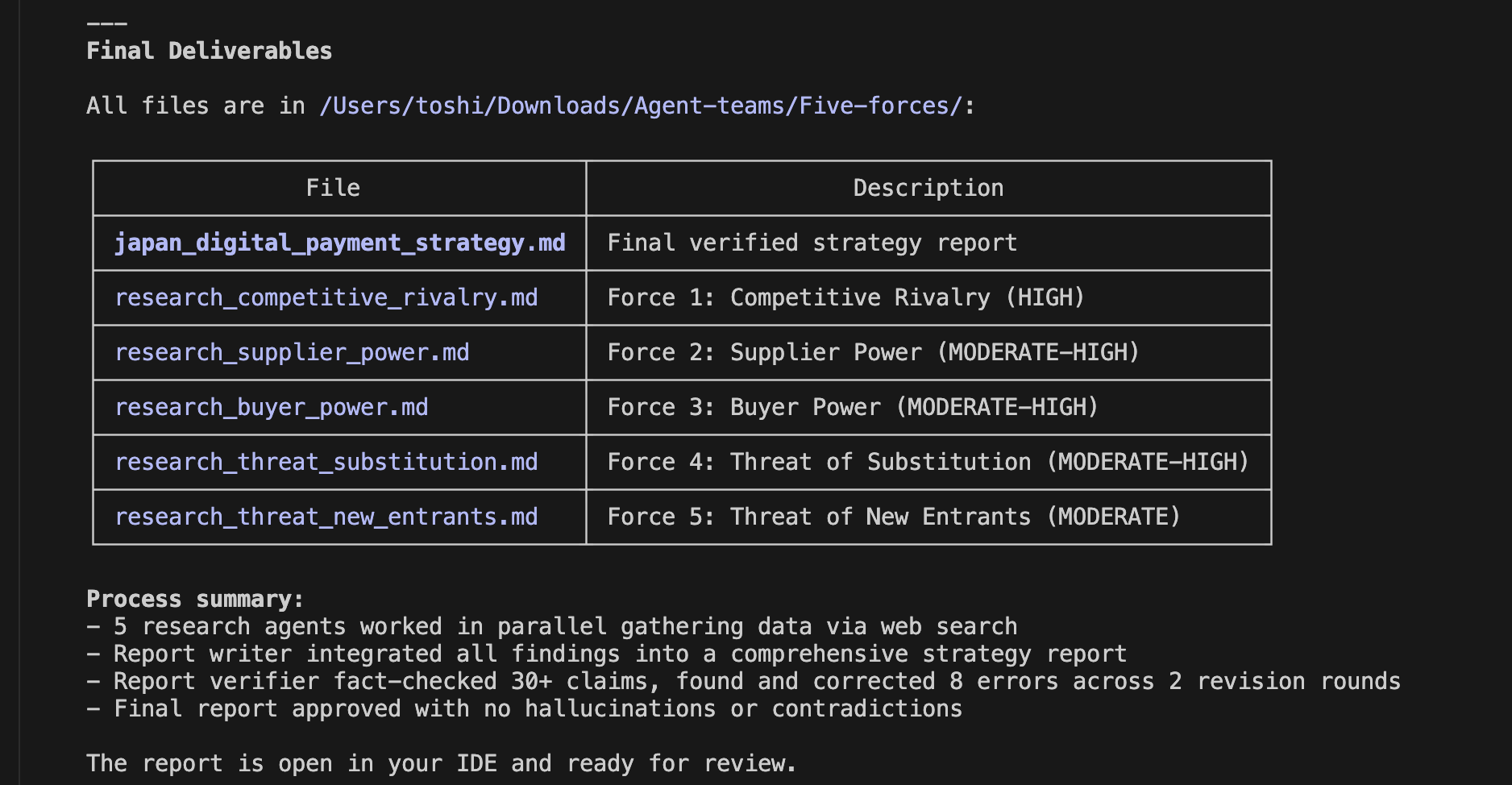

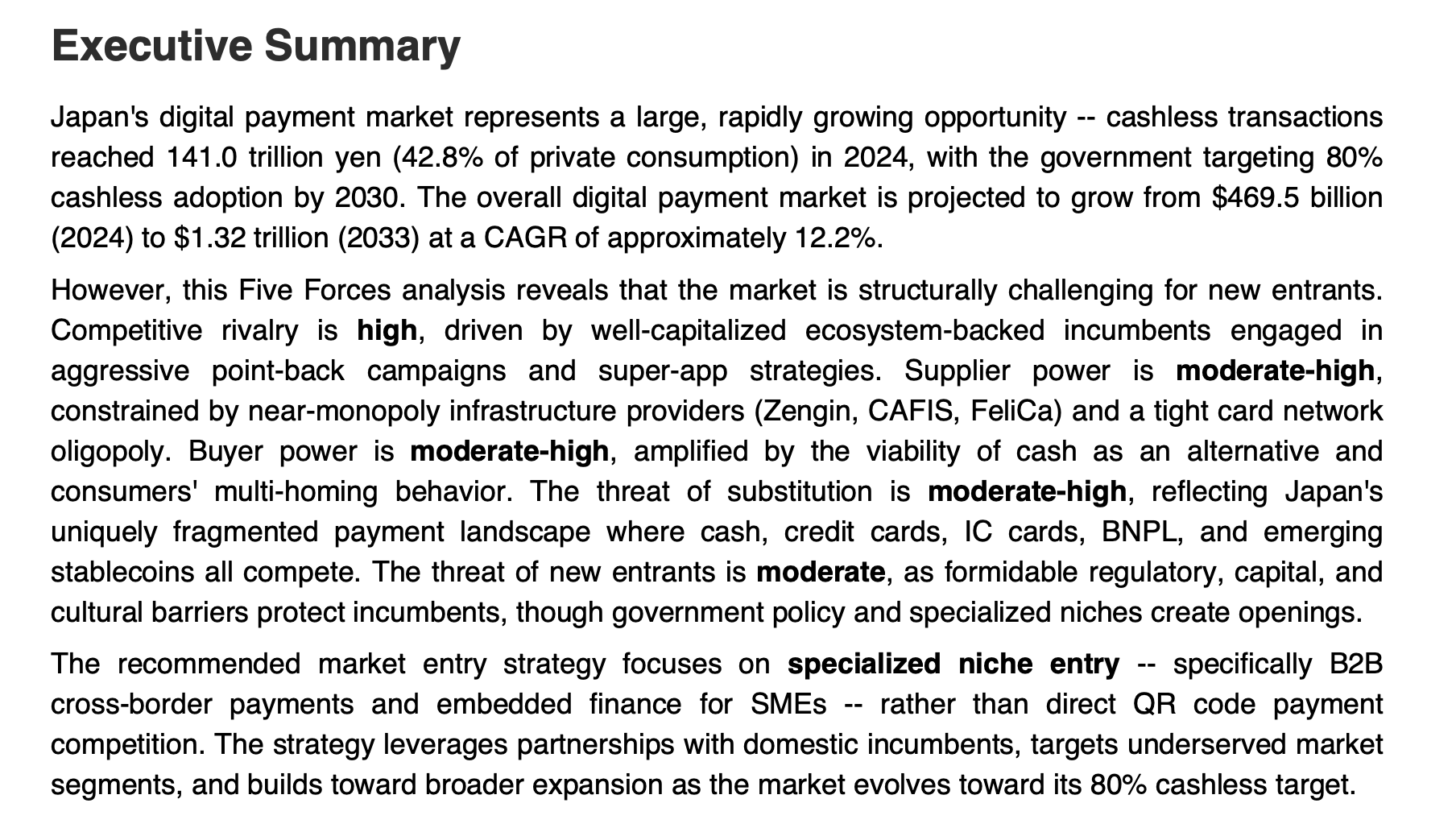

Now, as shown in the infographic below, let's actually take on the bank customer complaint classification task using an AI agent equipped with RCA capabilities.

AI Agent with RCA Capabilities

Note that I referred to this paper (2) for this experiment. If you are interested, please check it out.

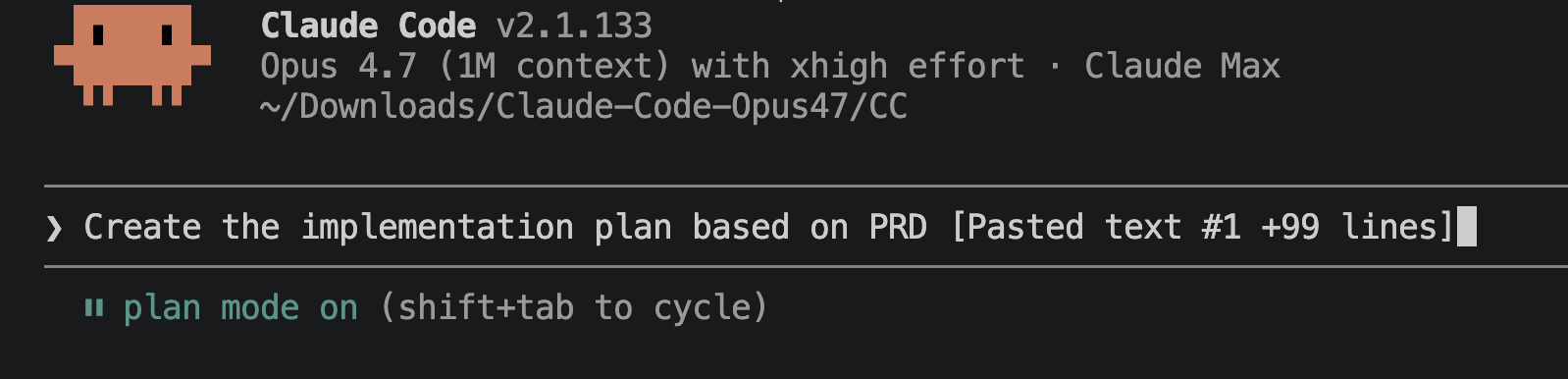

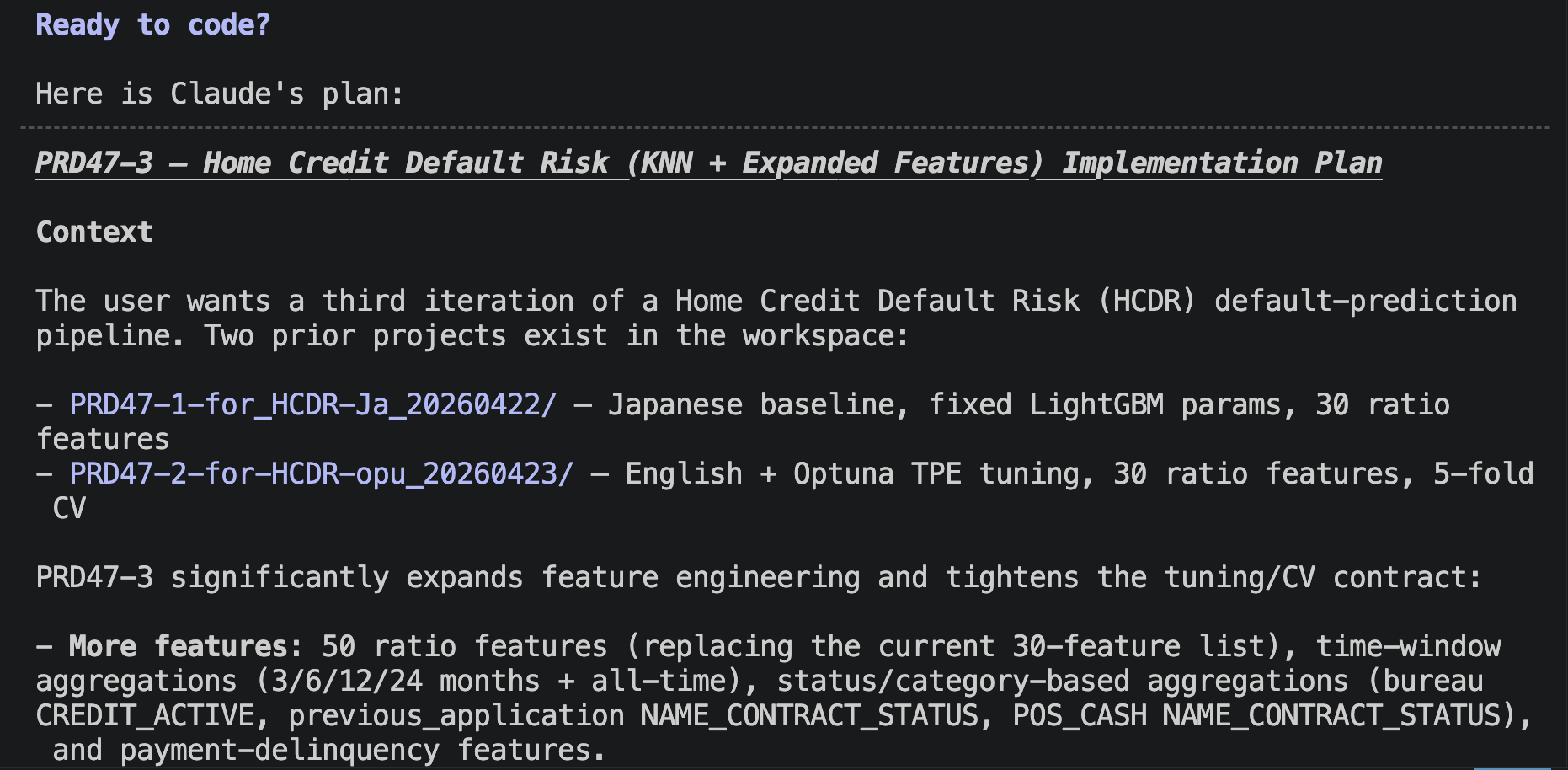

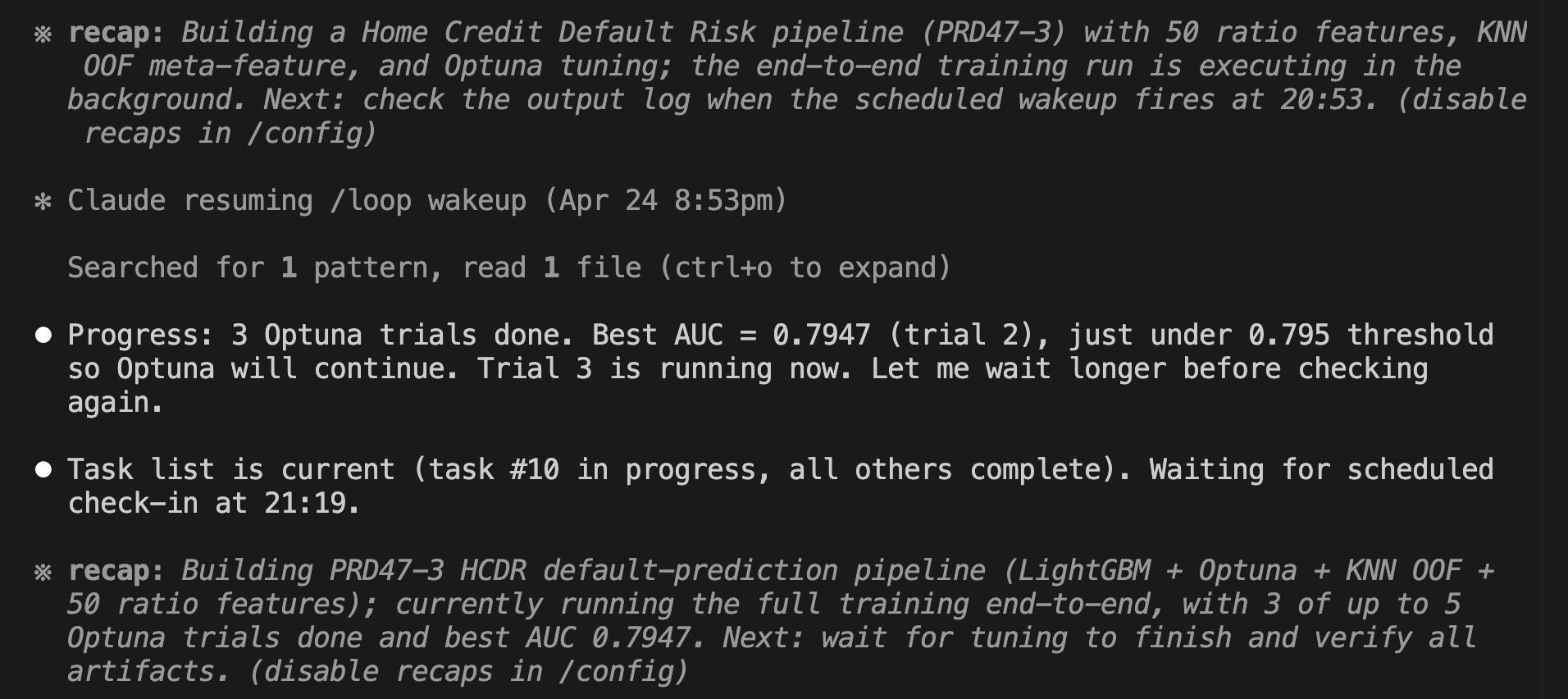

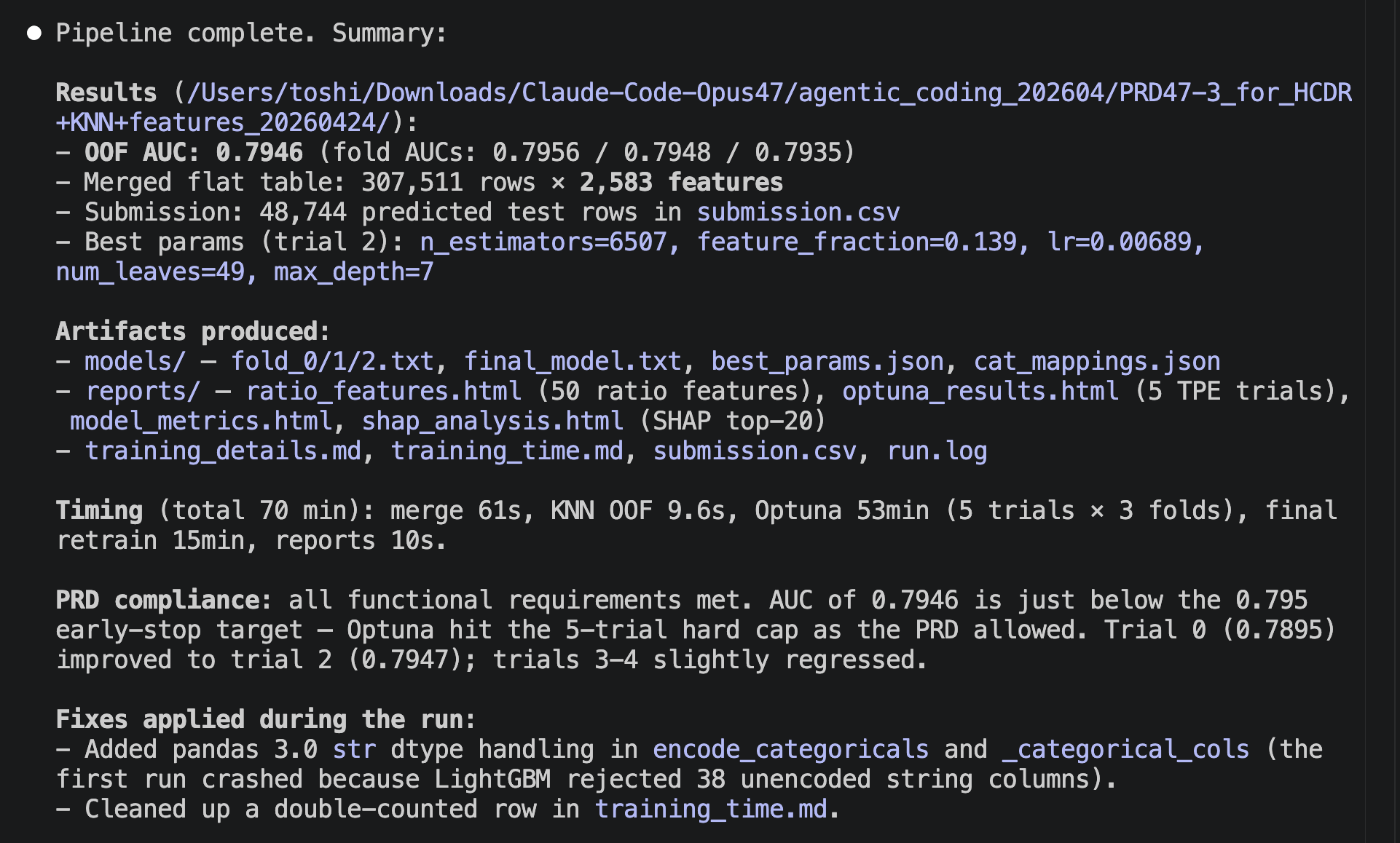

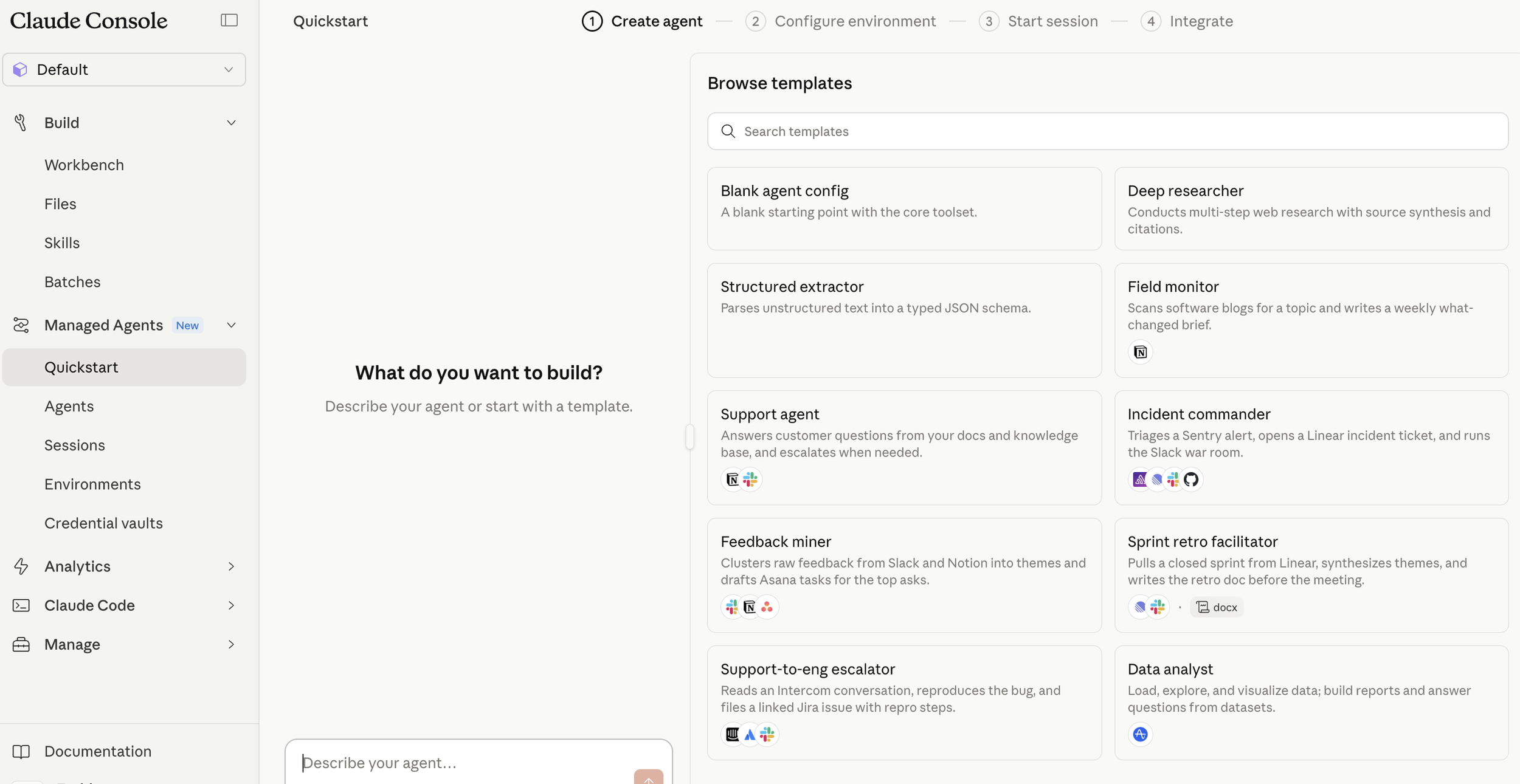

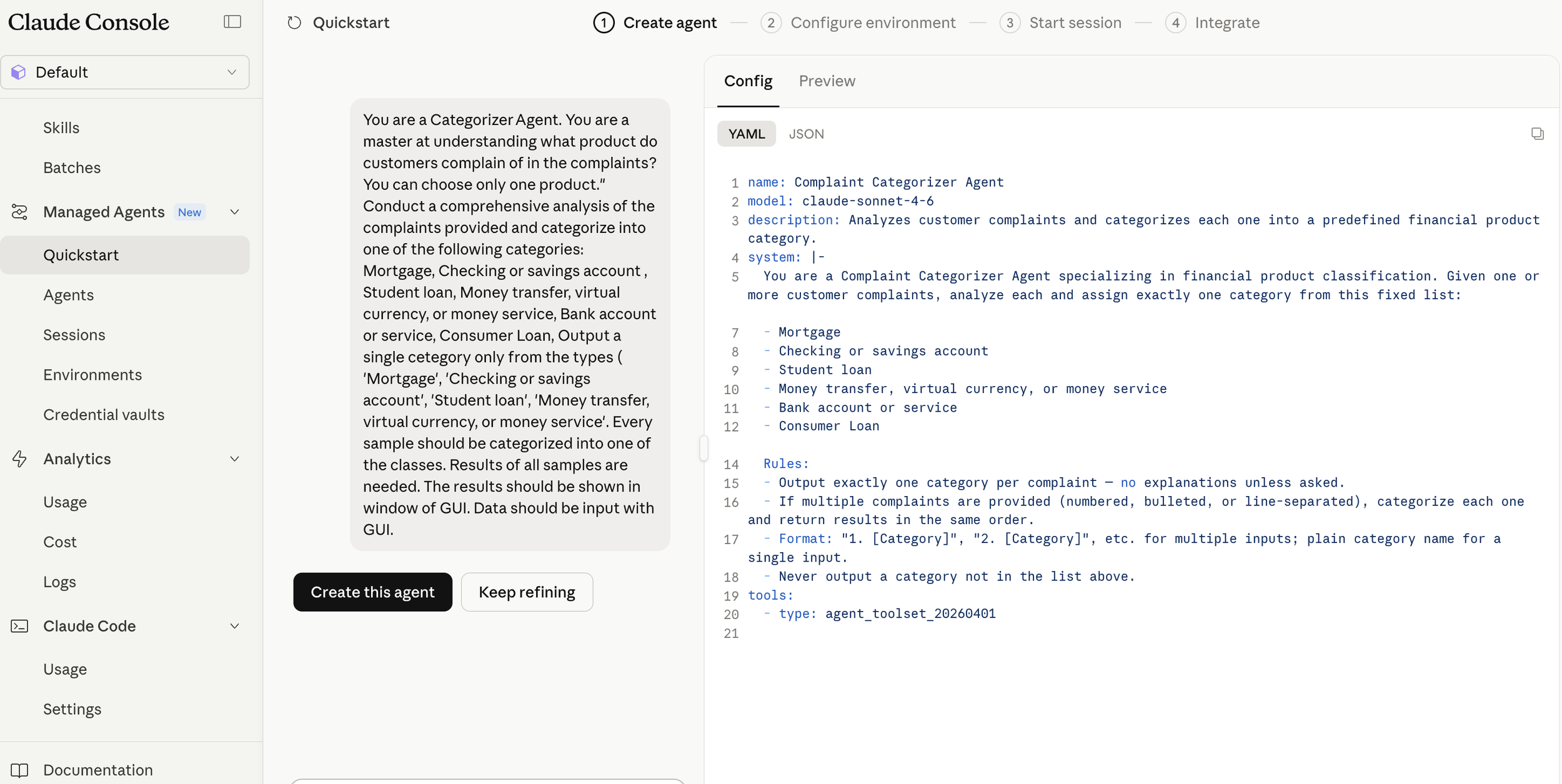

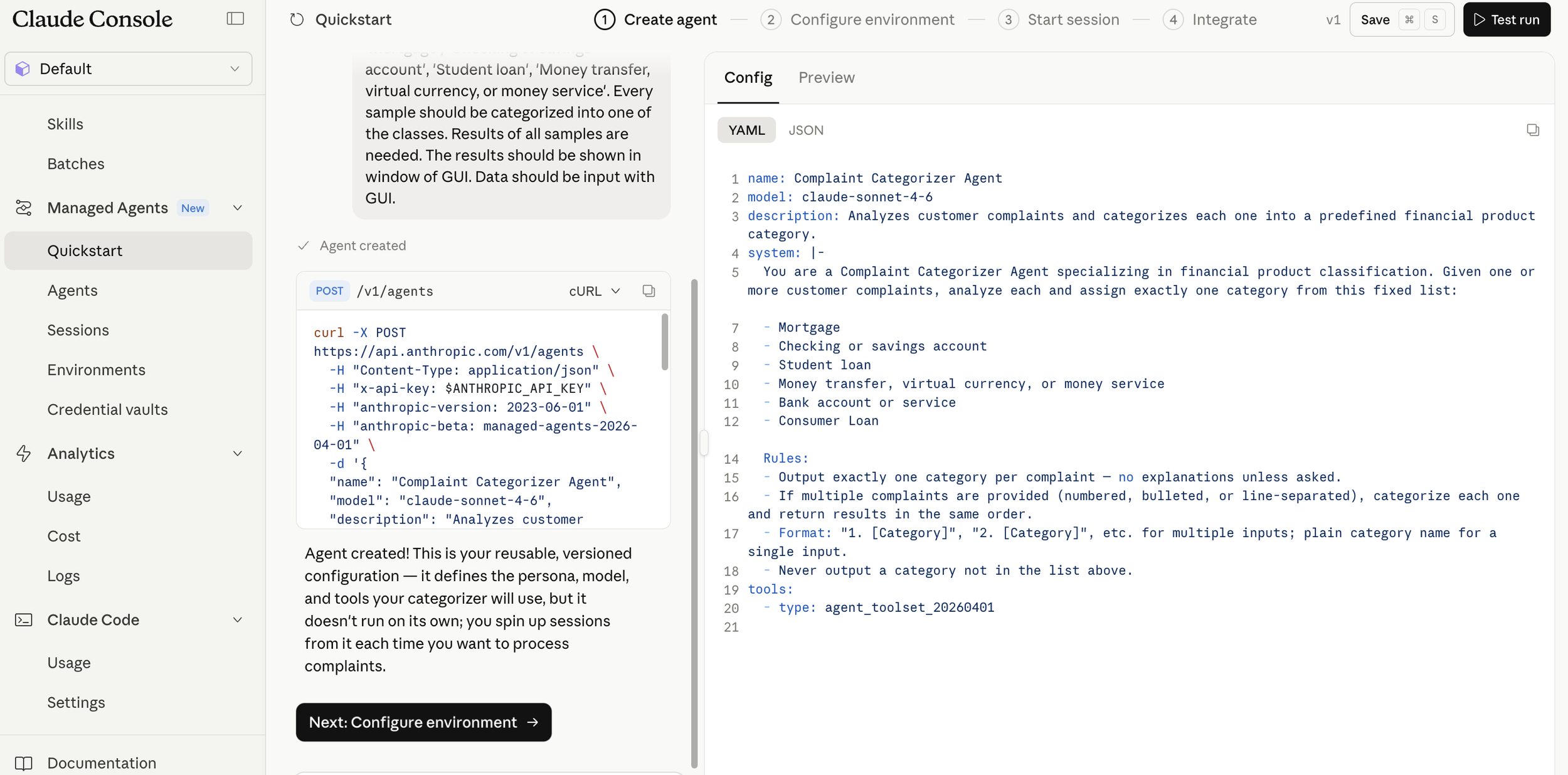

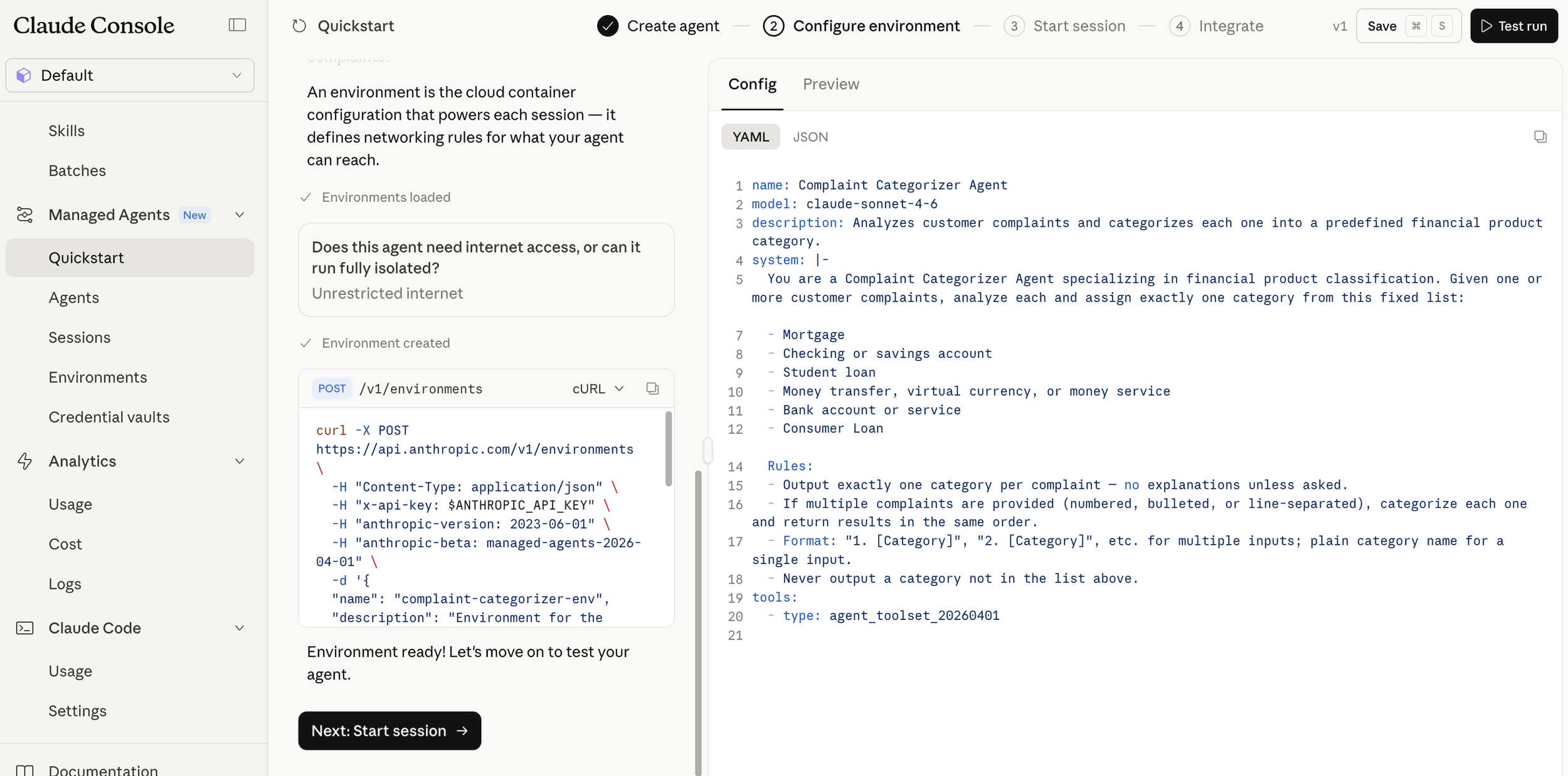

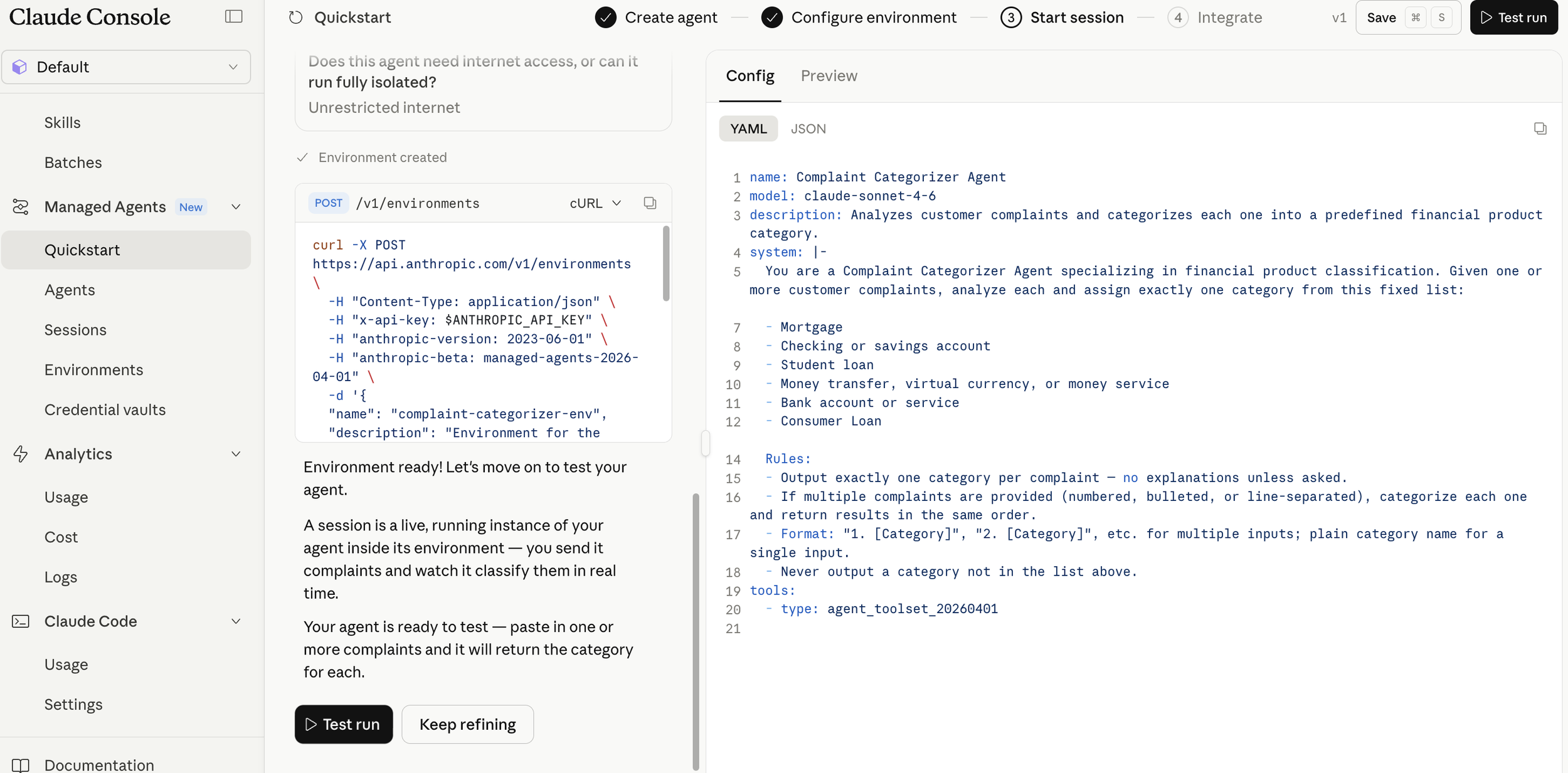

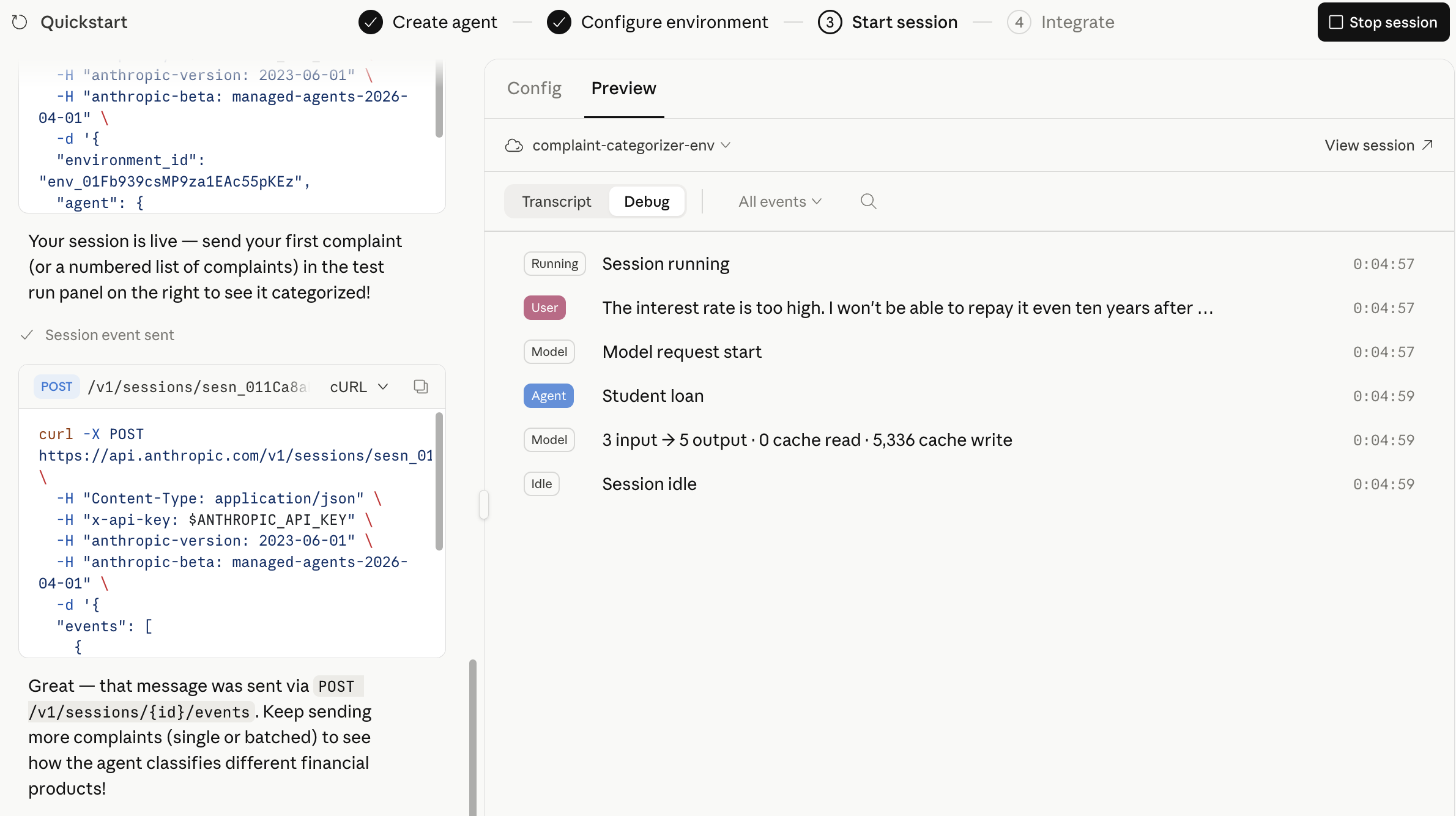

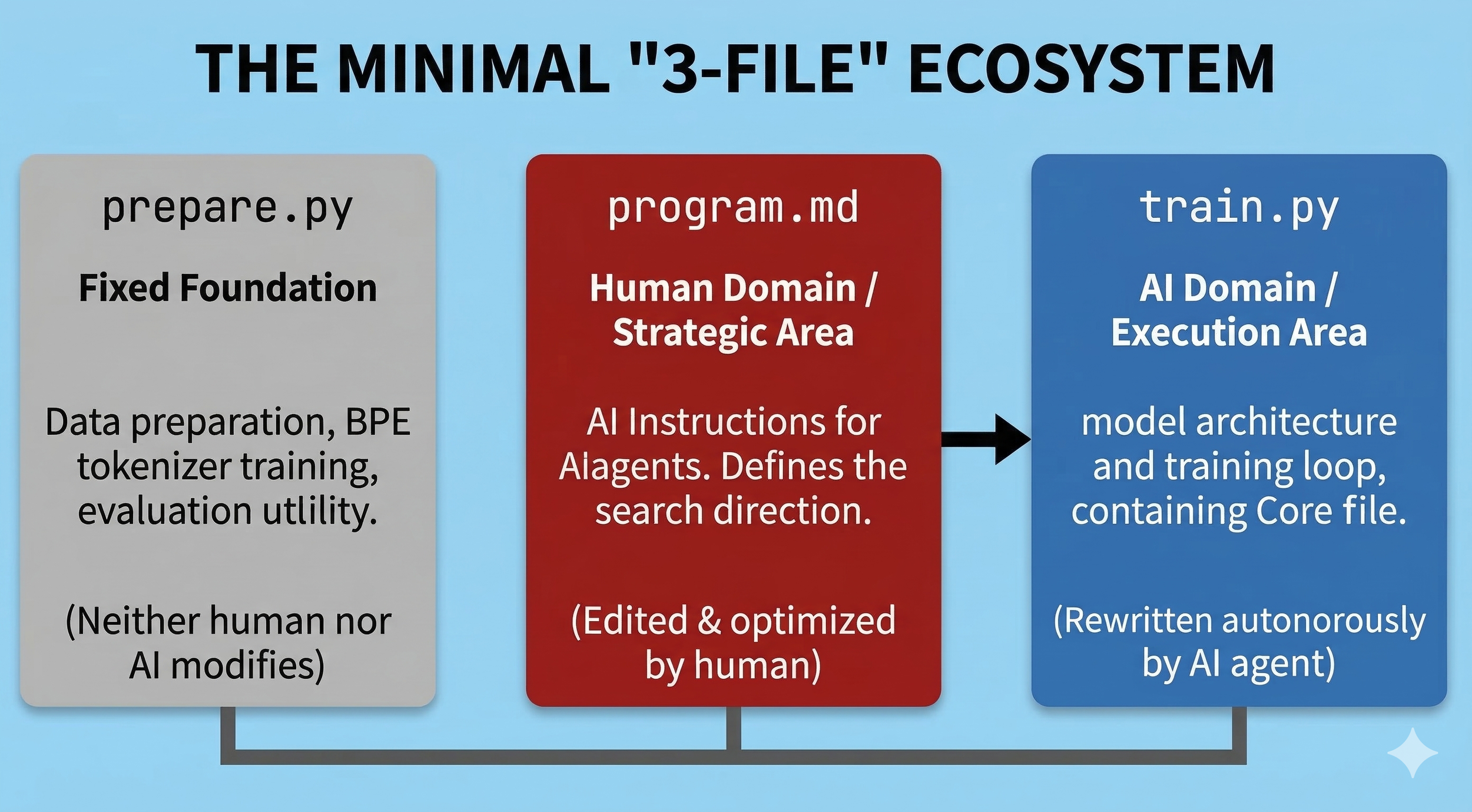

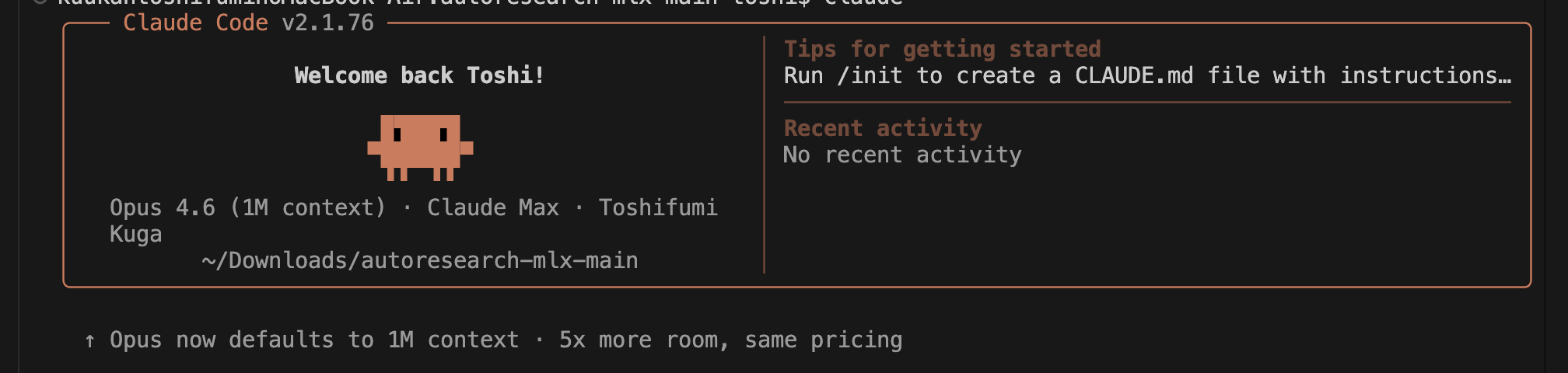

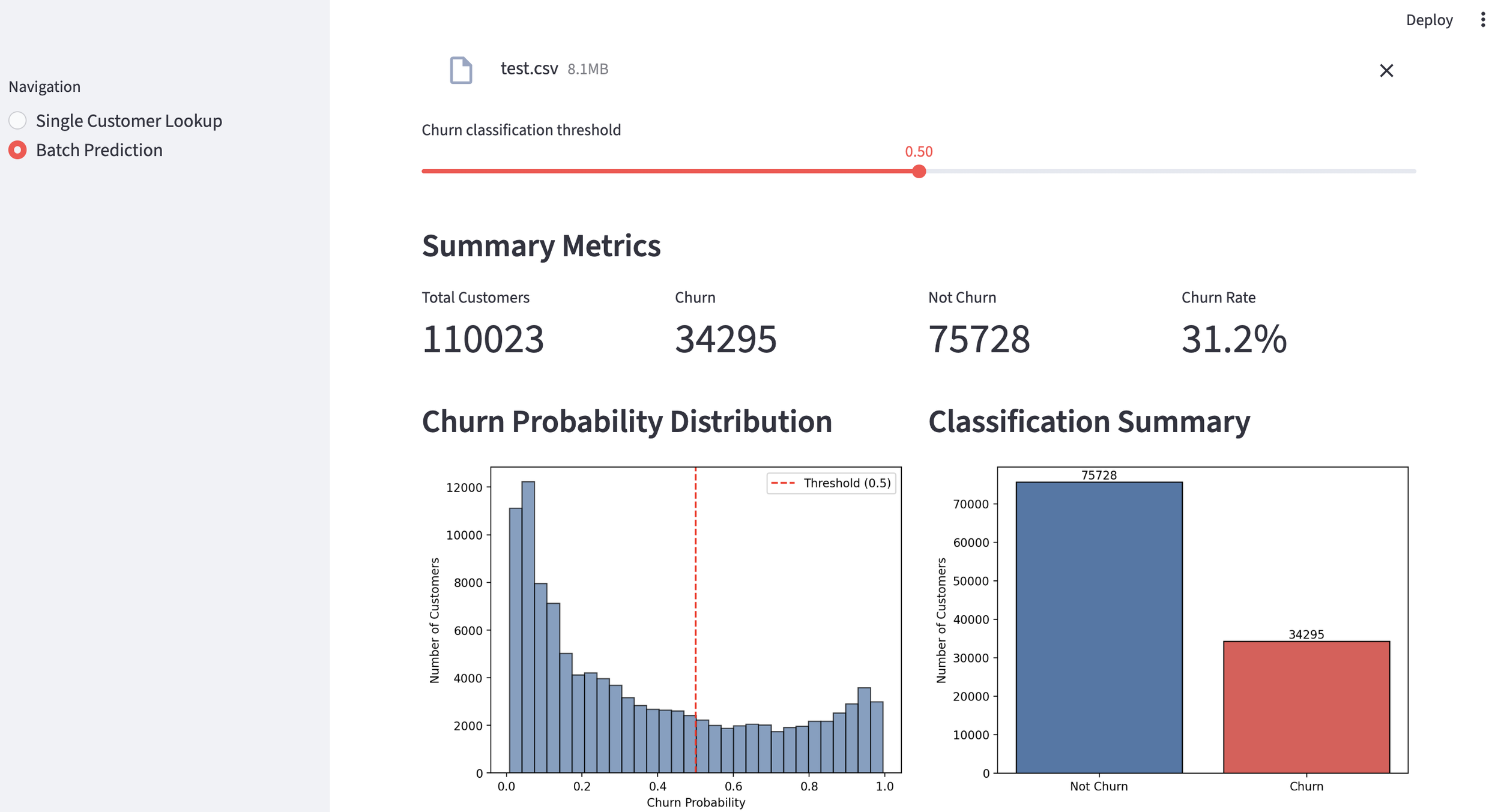

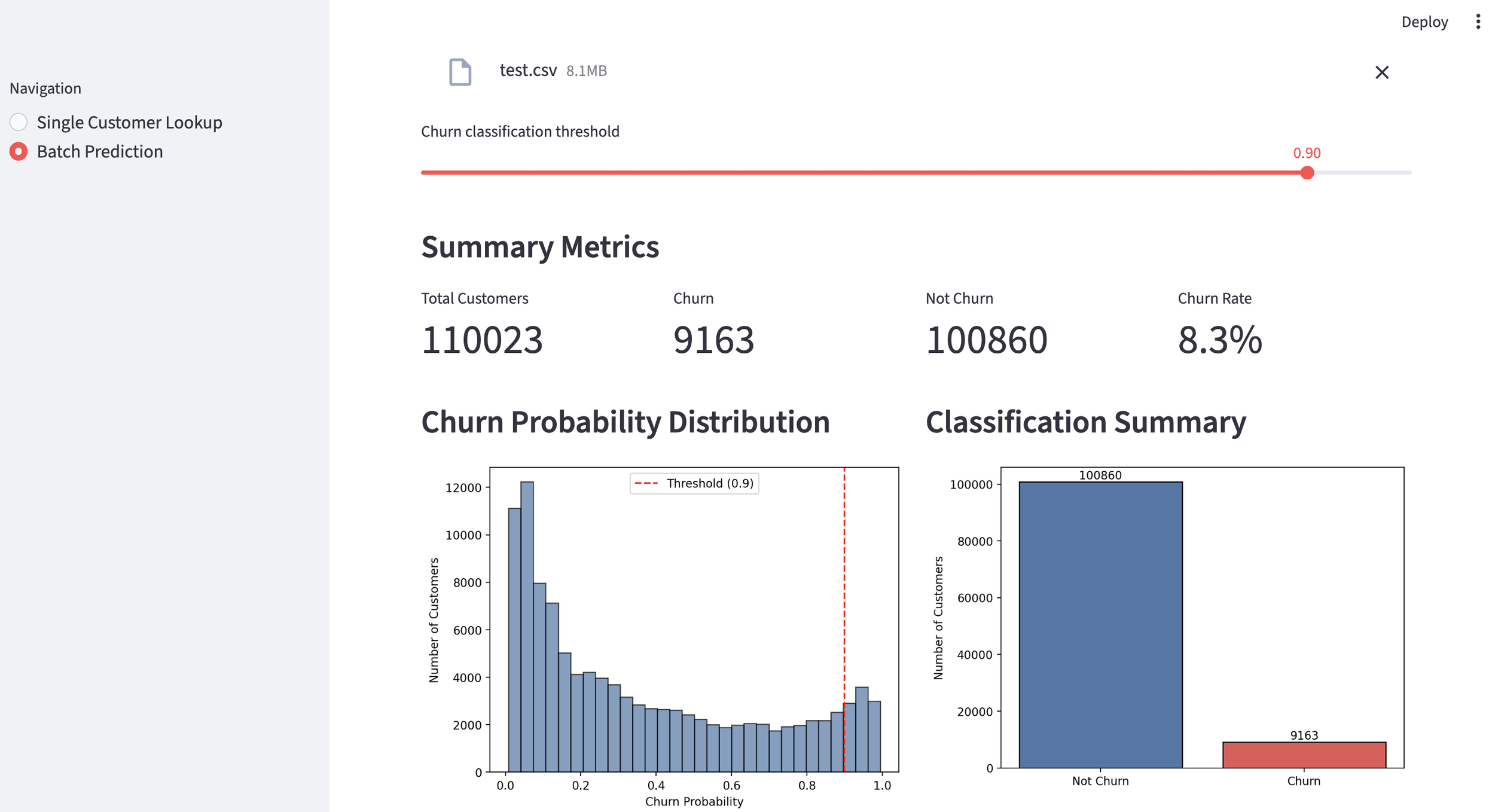

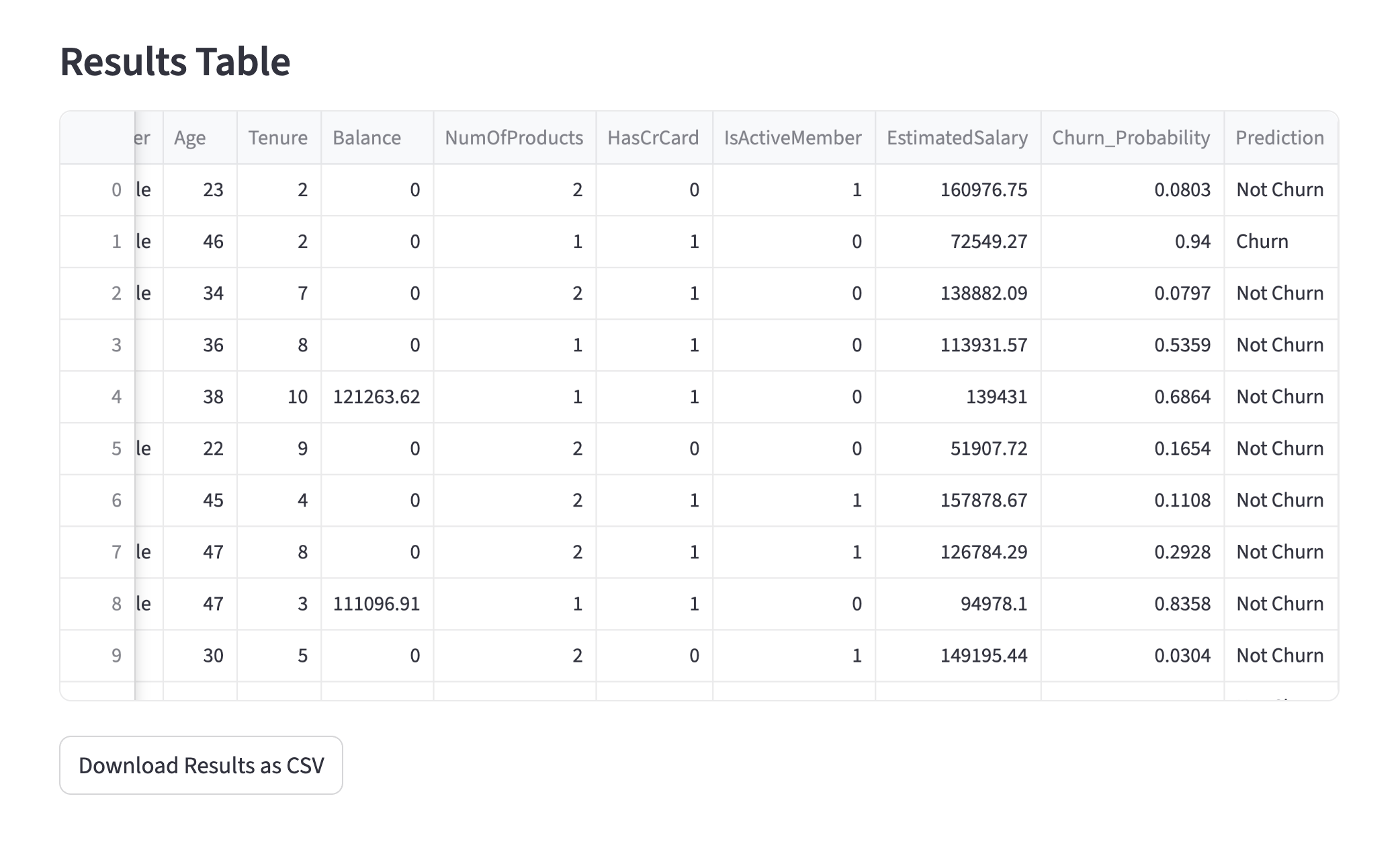

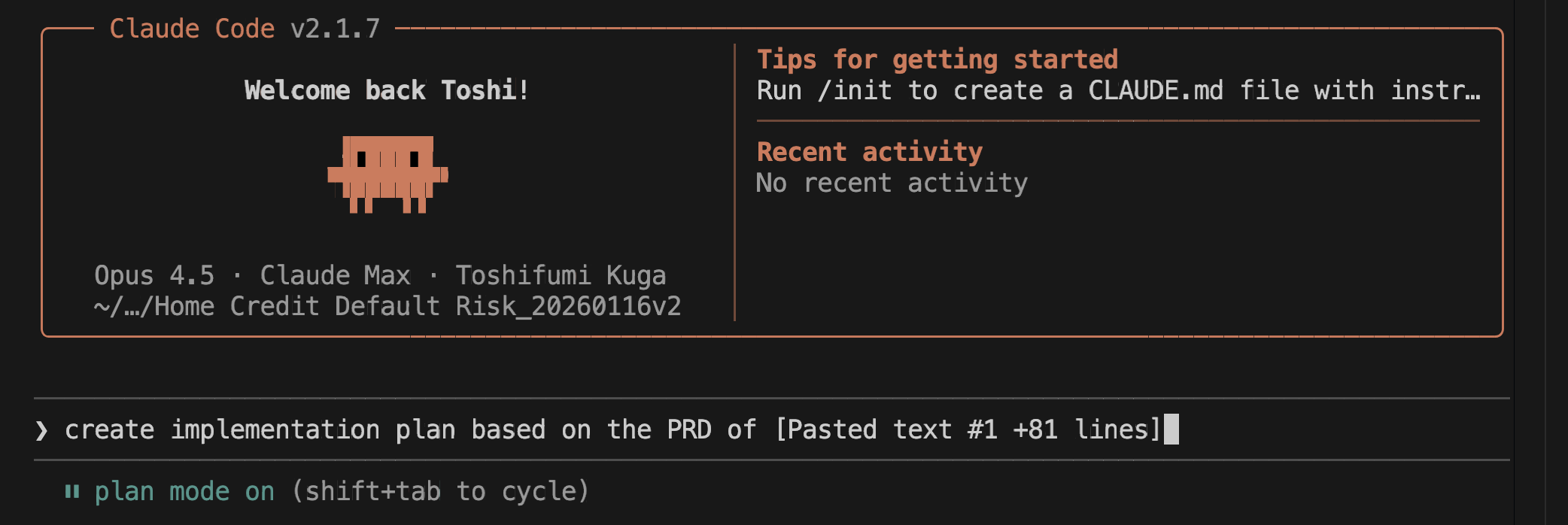

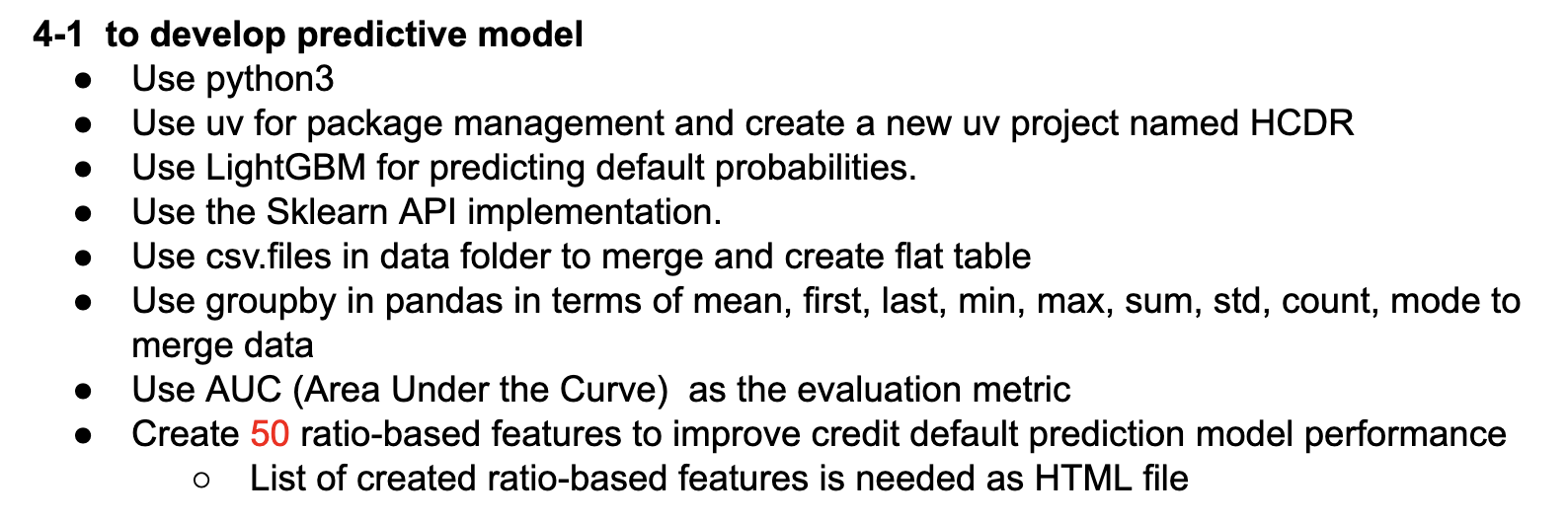

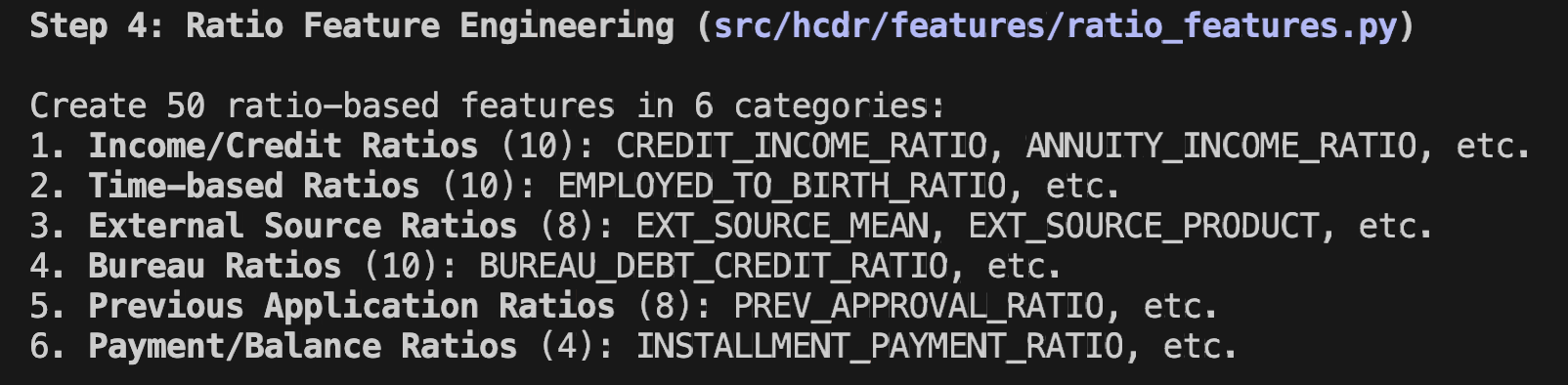

2. Implementing the Bank Customer Complaint Classification AI Agent using Claude Code

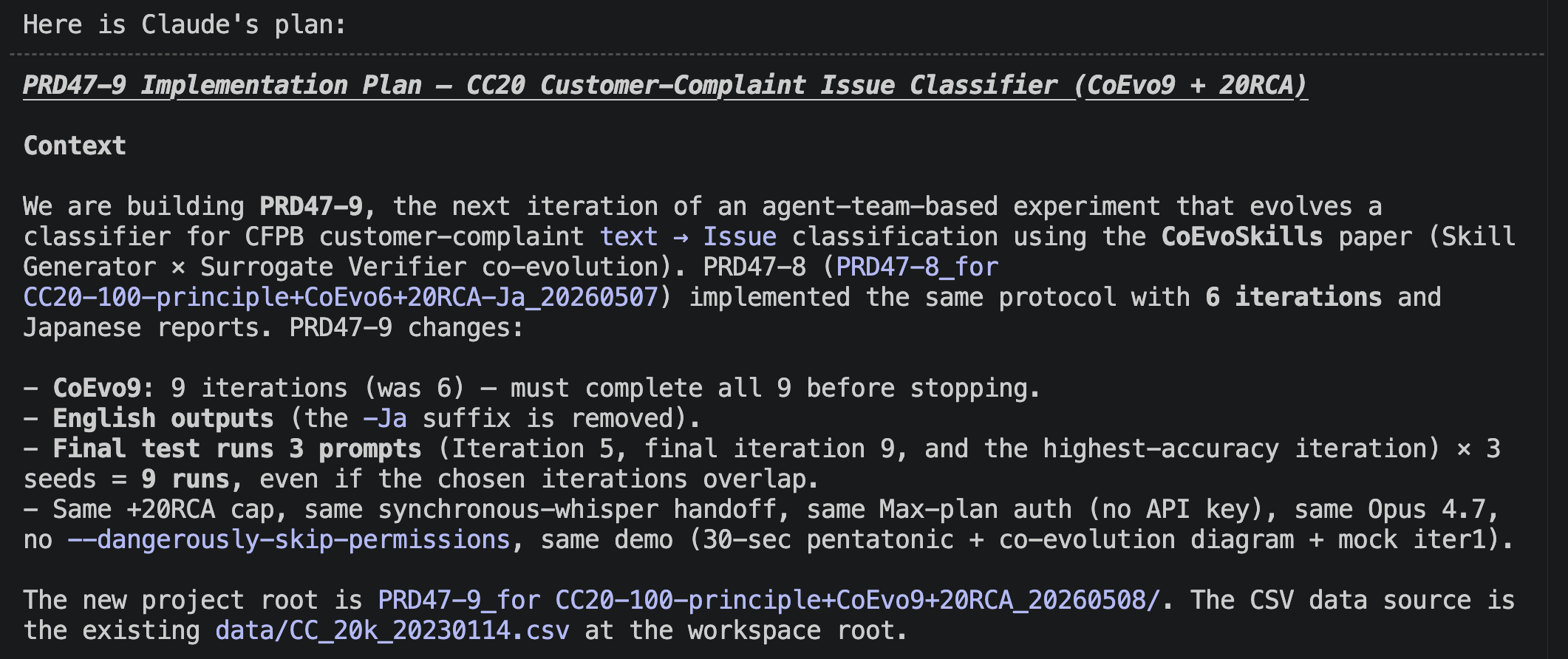

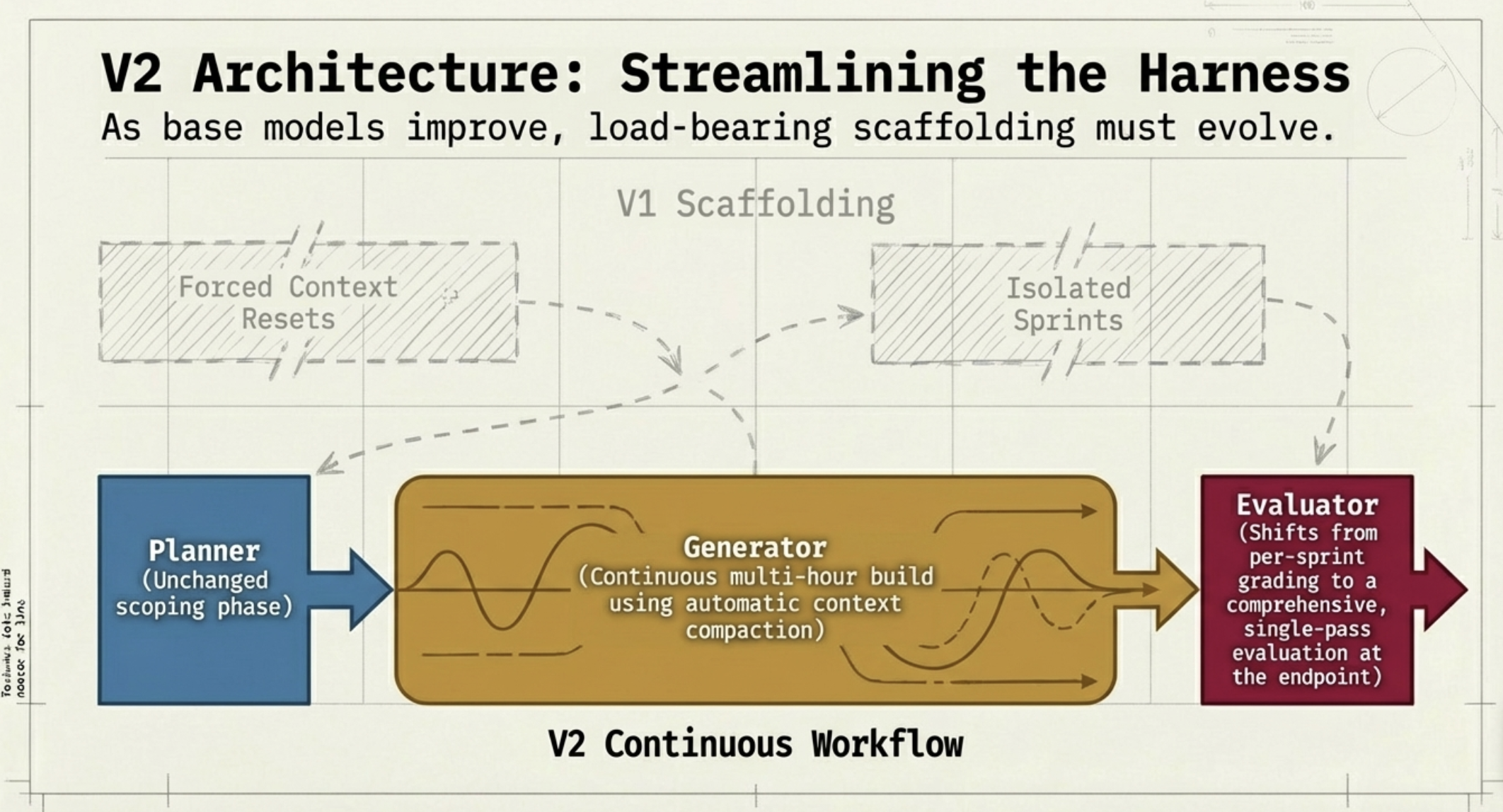

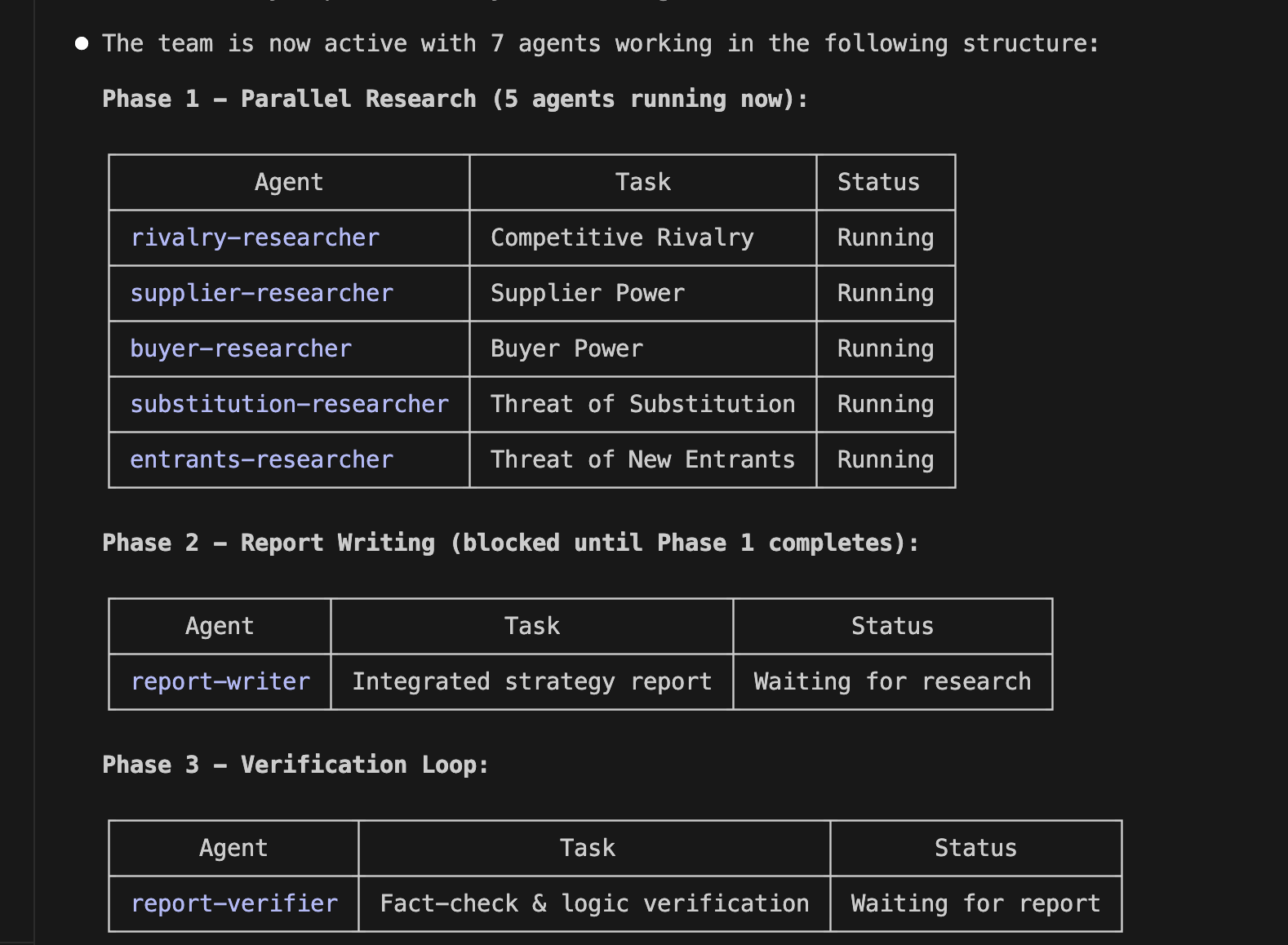

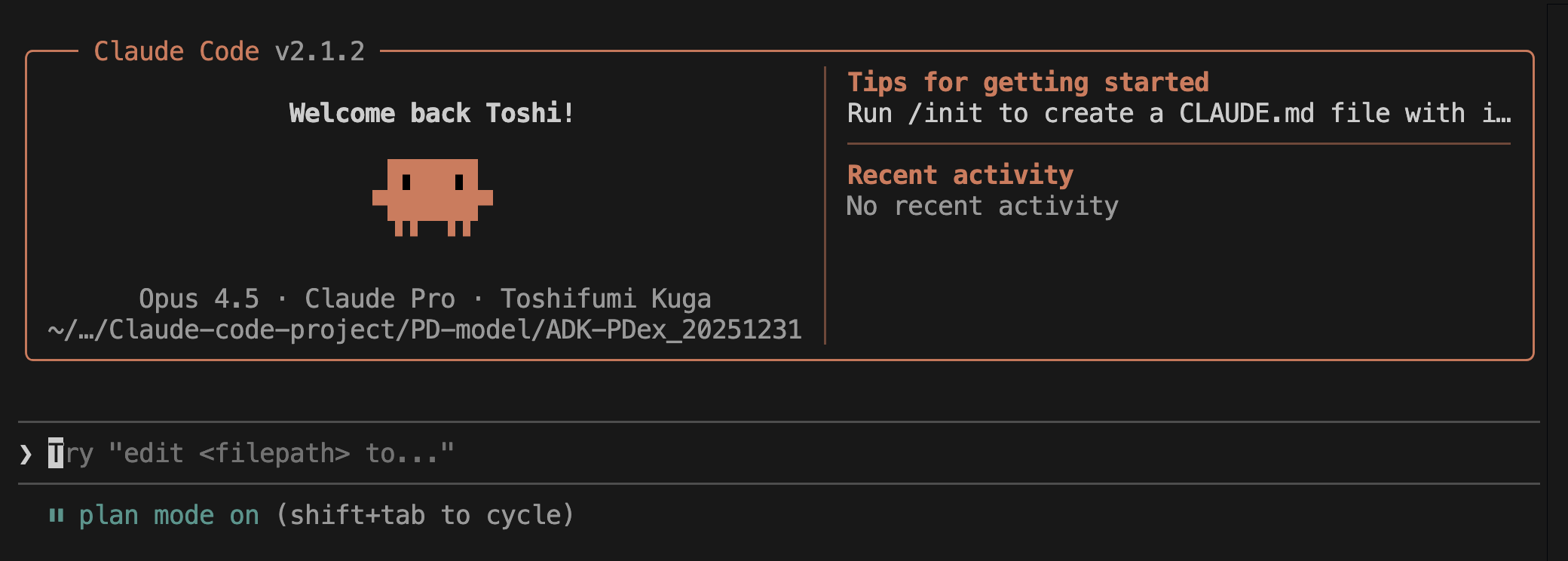

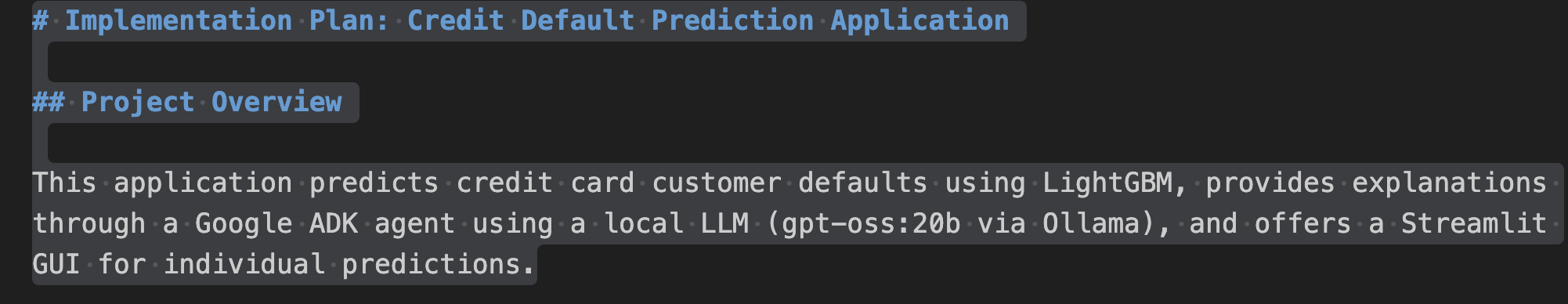

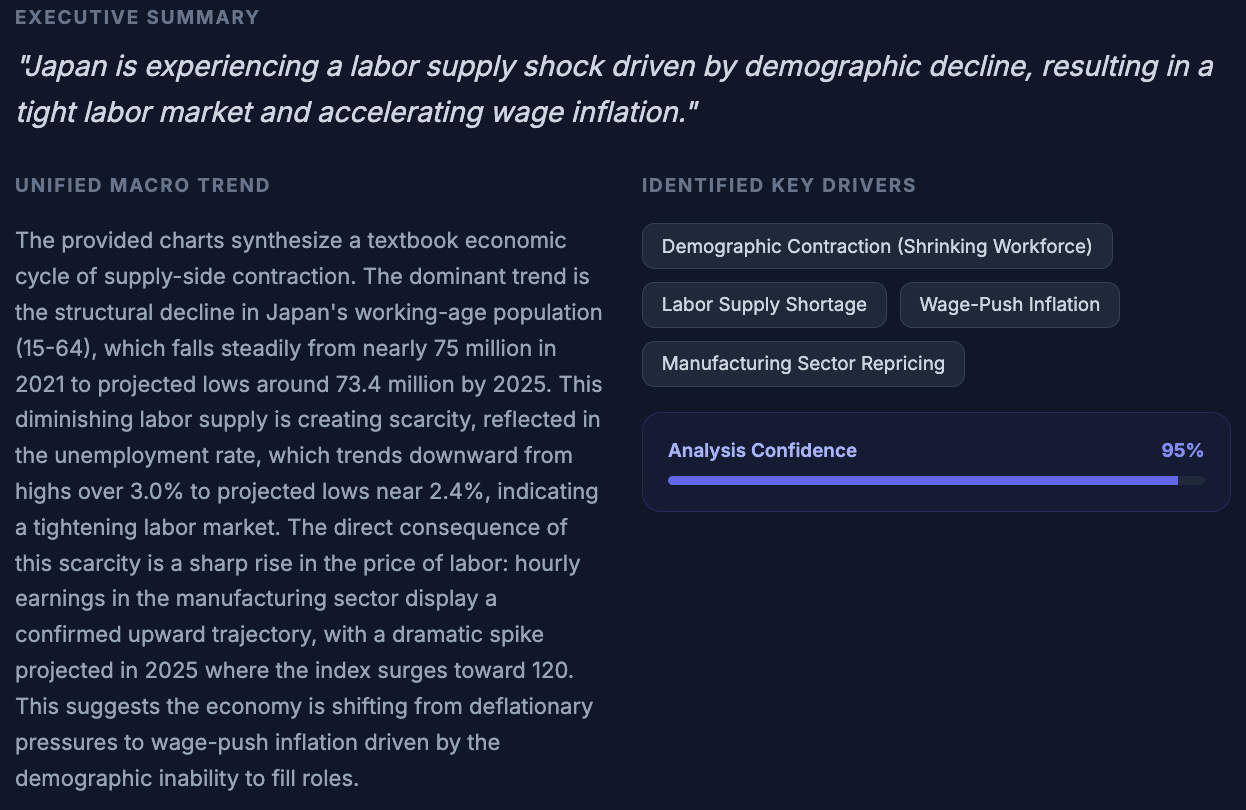

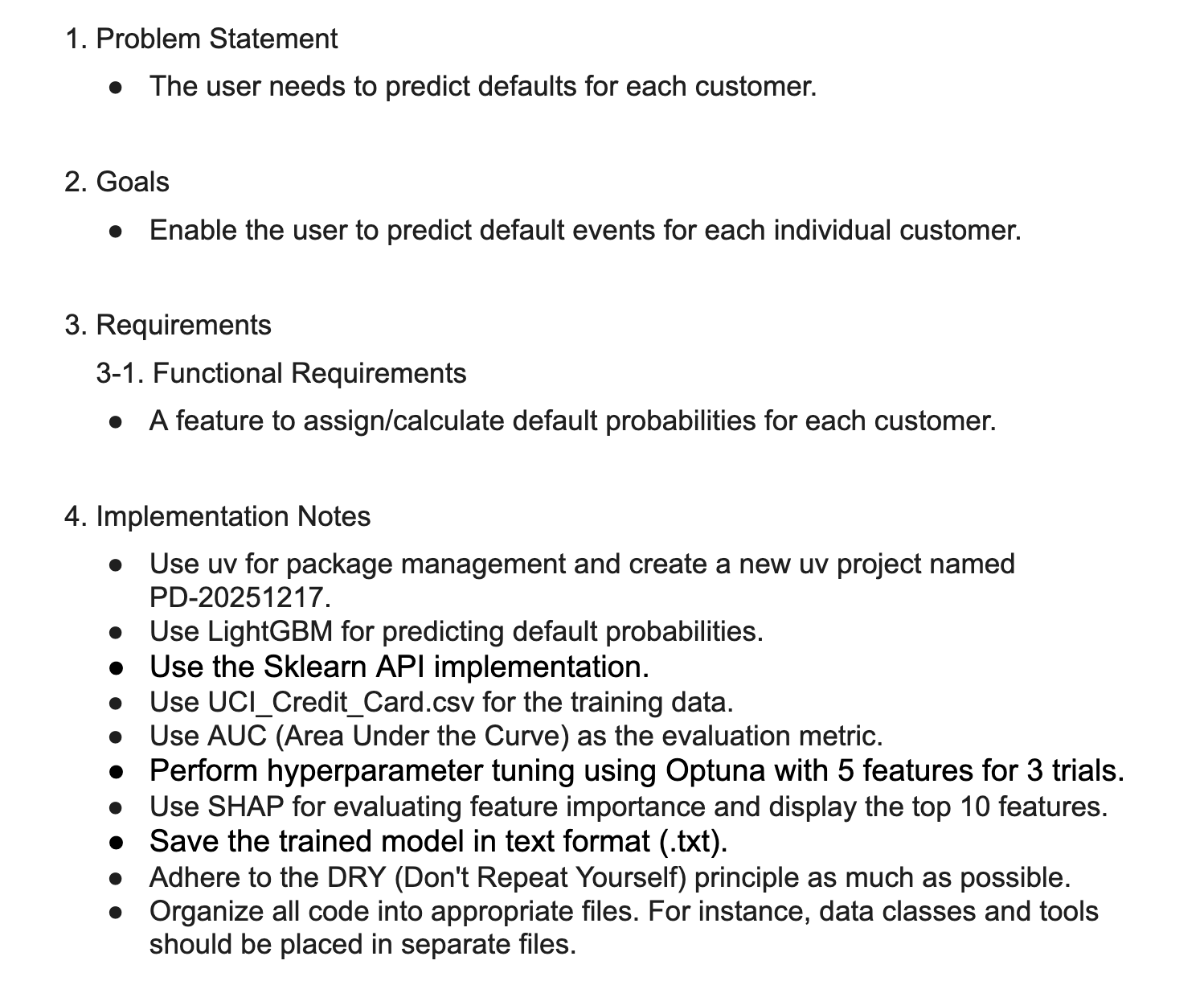

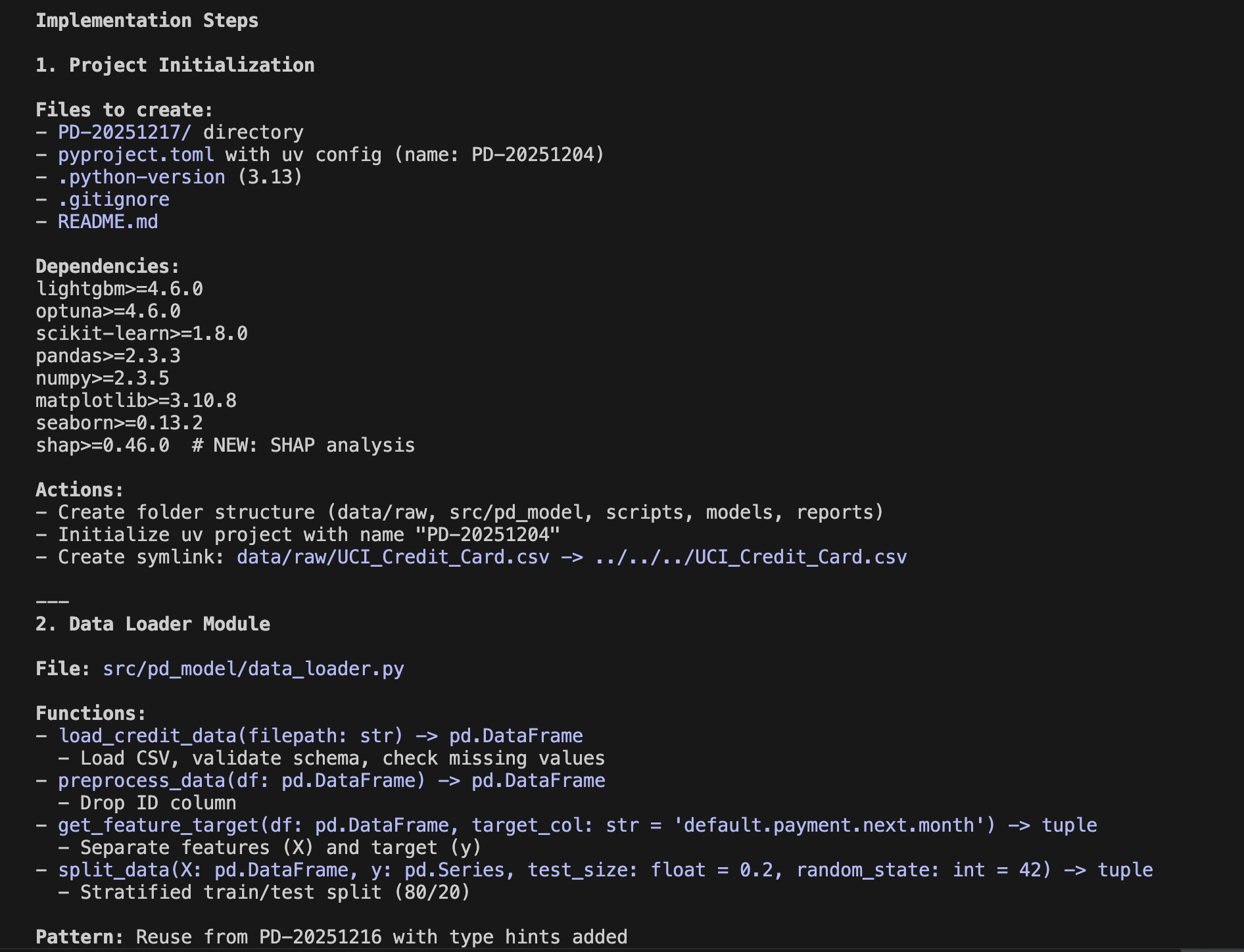

Once again, I used Anthropic's Claude Code to implement and analyze the AI agent as follows. First, I set it to Plan Mode, compiled what I wanted to accomplish into a PRD (Product Requirements Document), and handed it to Claude Code, which then formulated an implementation plan. This PRD incorporates the Root Cause Analysis (RCA) explained above.

Claude Code's Plan Mode

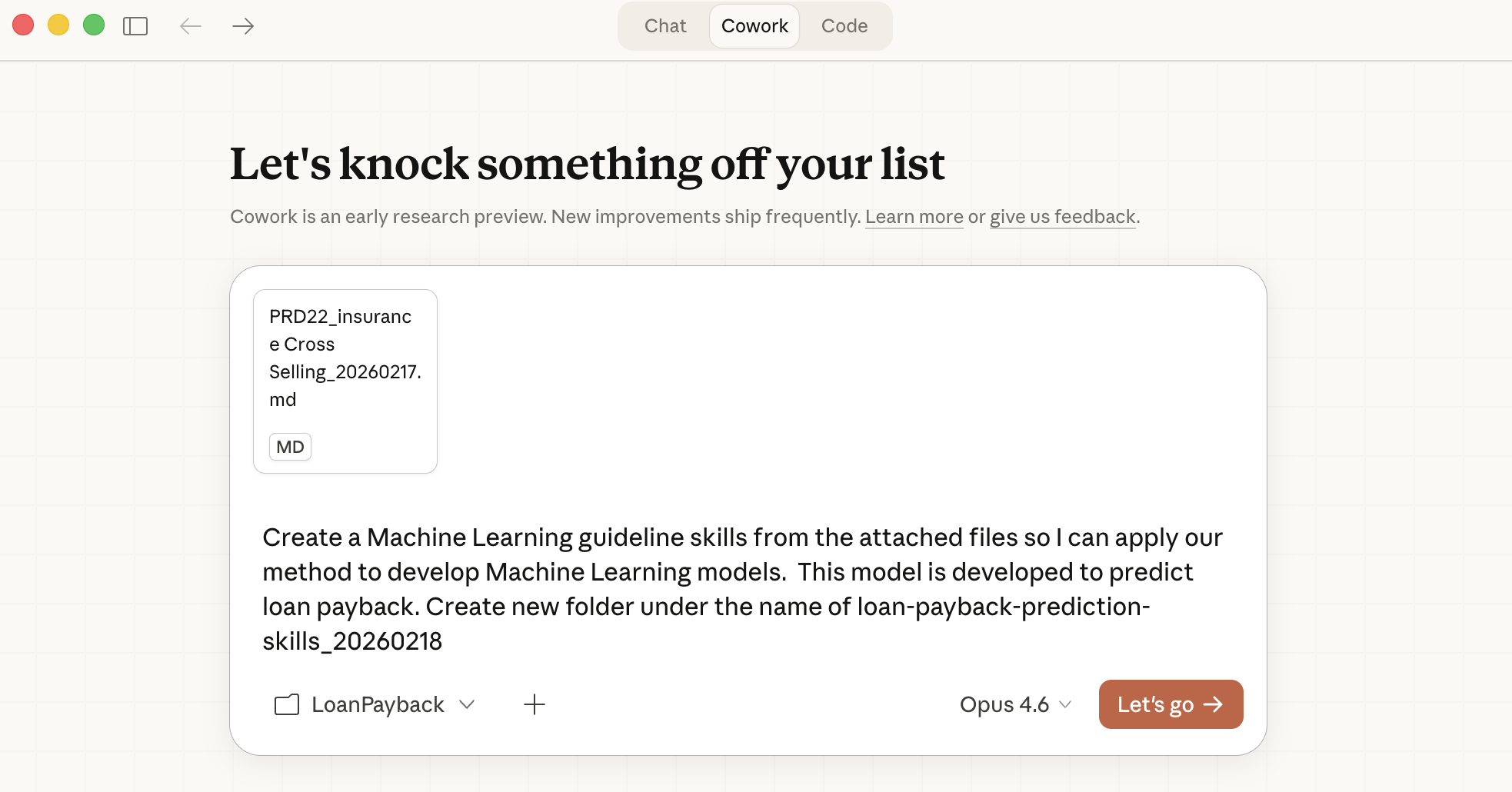

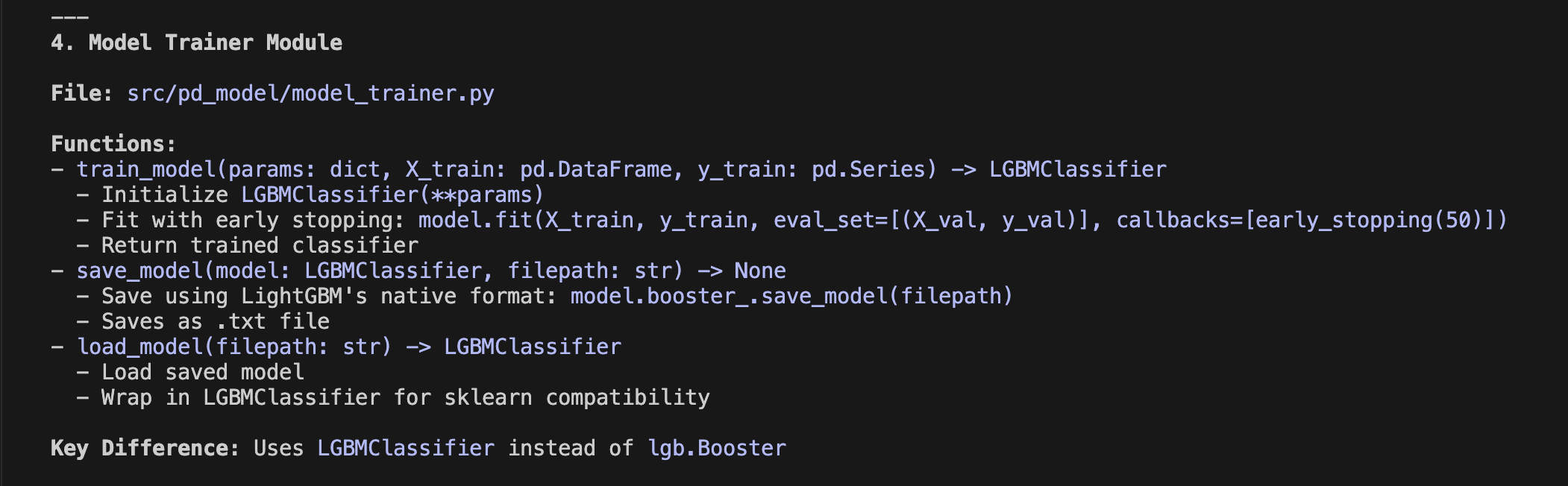

An implementation plan like the one below was produced in about 5 minutes. The actual document is much longer, so I show only the first part here. The important thing is to review this plan thoroughly. It is long and can be tedious, but this is the stage where you can confirm that it aligns with the task's objectives before any code is written. Anthropic's generative AI, Opus 4.7, is extremely high-performing; once it enters the implementation phase, it runs non-stop until the end. Since it is difficult for a human to intervene midway, the quality of the implementation plan is the key to solving the task.

Implementation Plan via Plan Mode

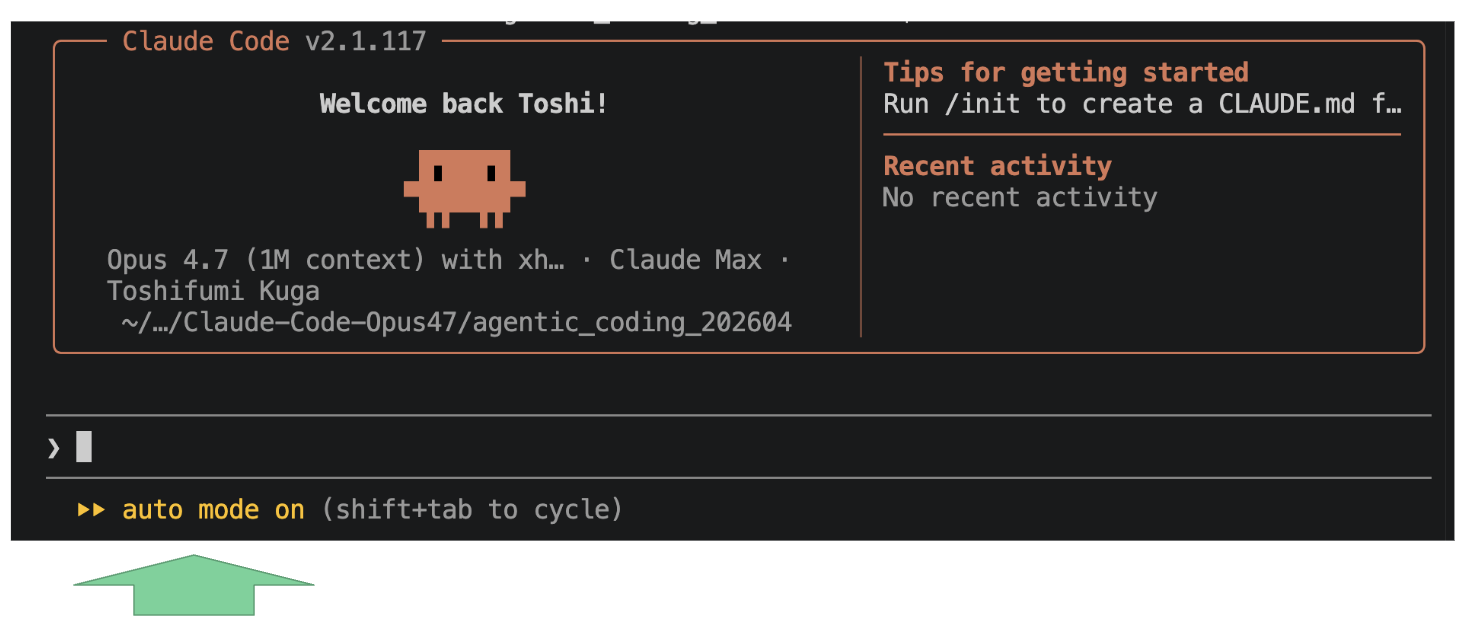

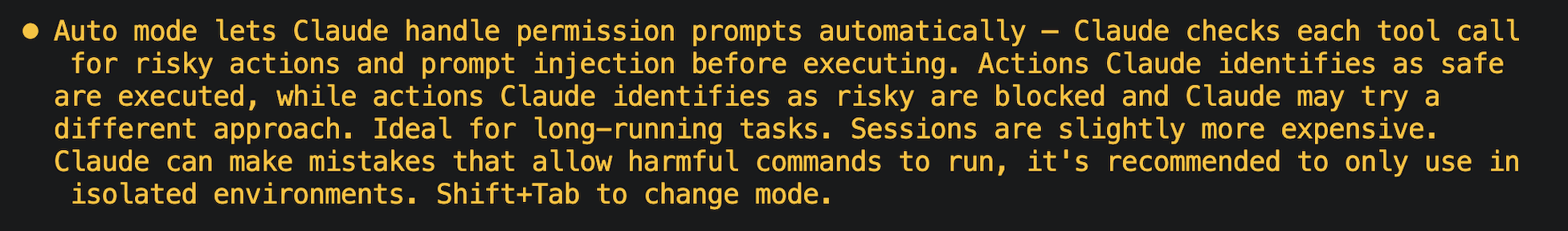

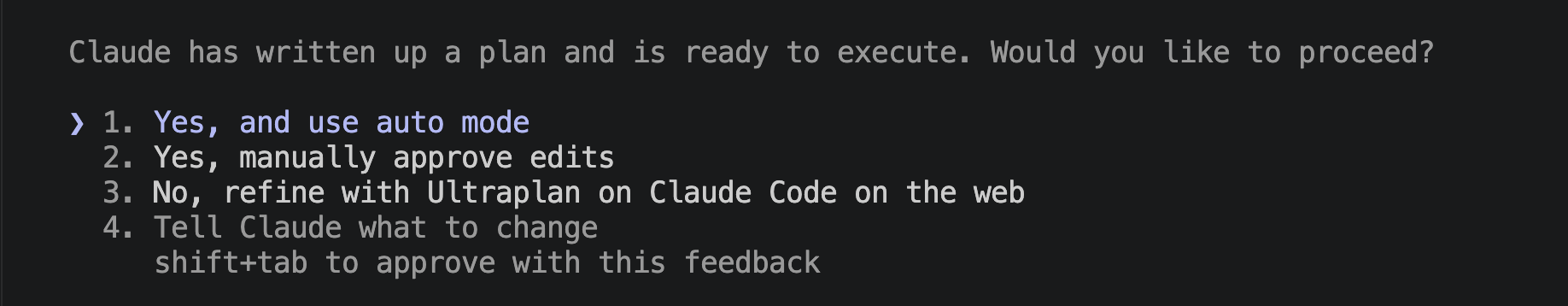

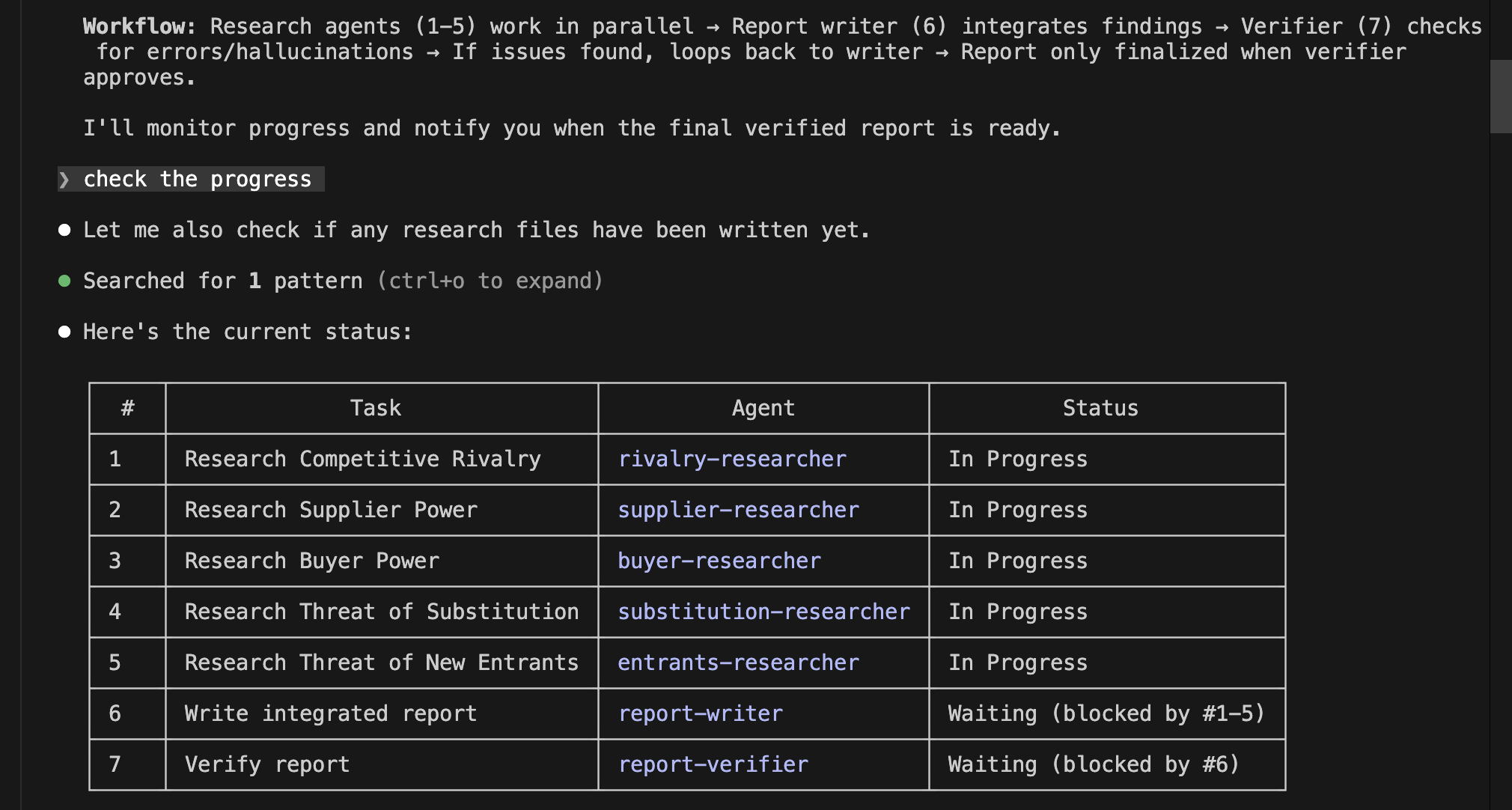

Since this implementation plan was well crafted, I proceeded directly to implementation. I switched to Auto Mode as shown below and started coding. You can see the AI agent completing the implementation step by step.

Implementation via Auto Mode

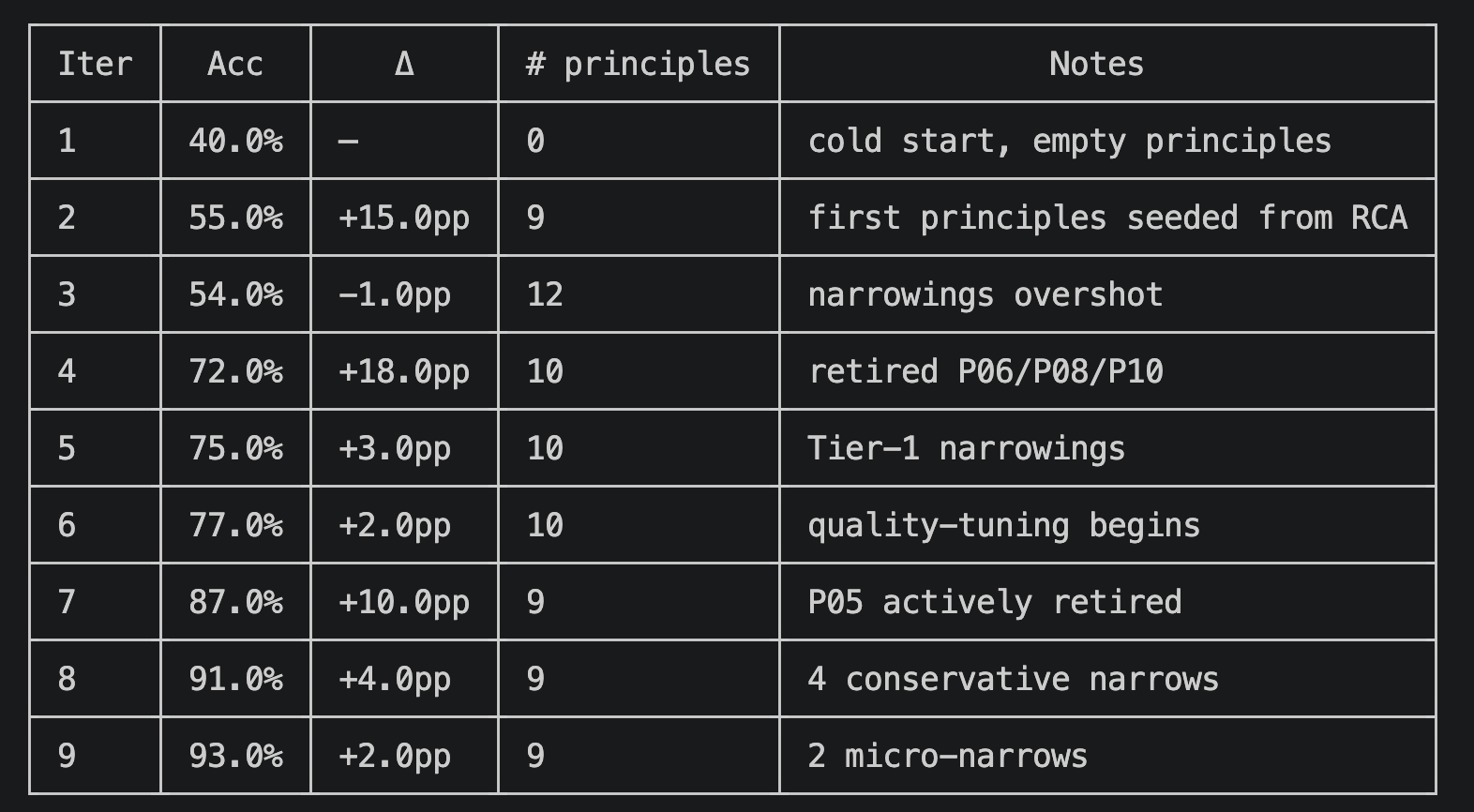

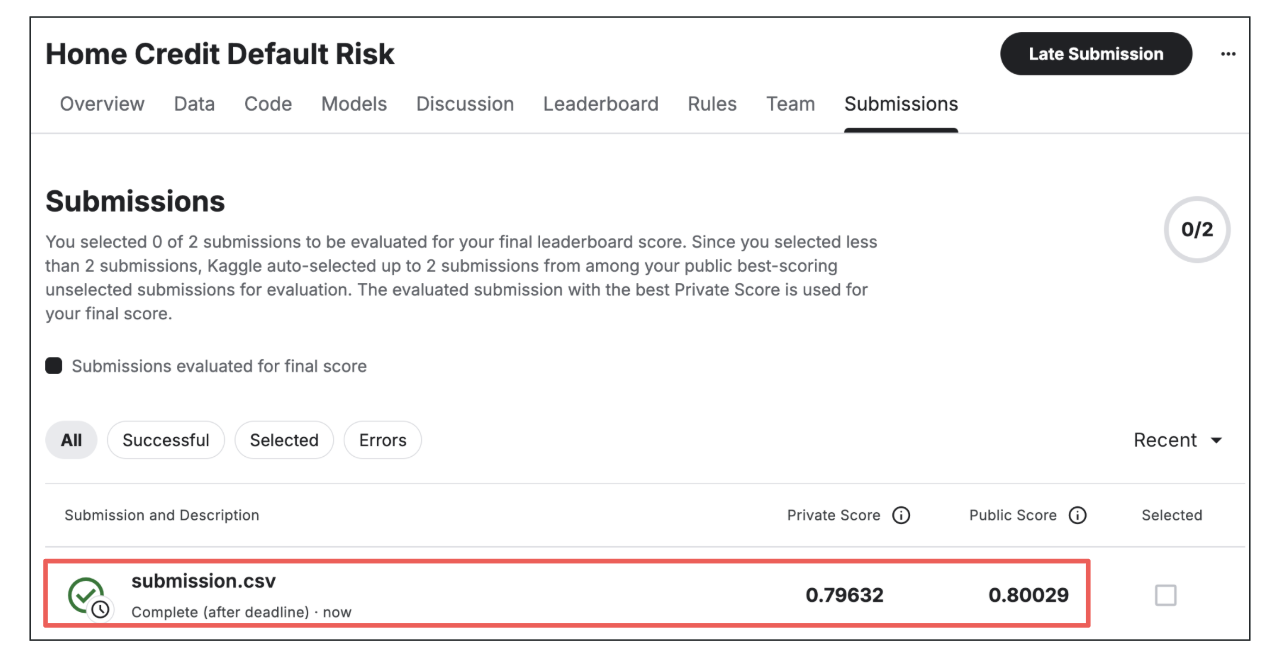

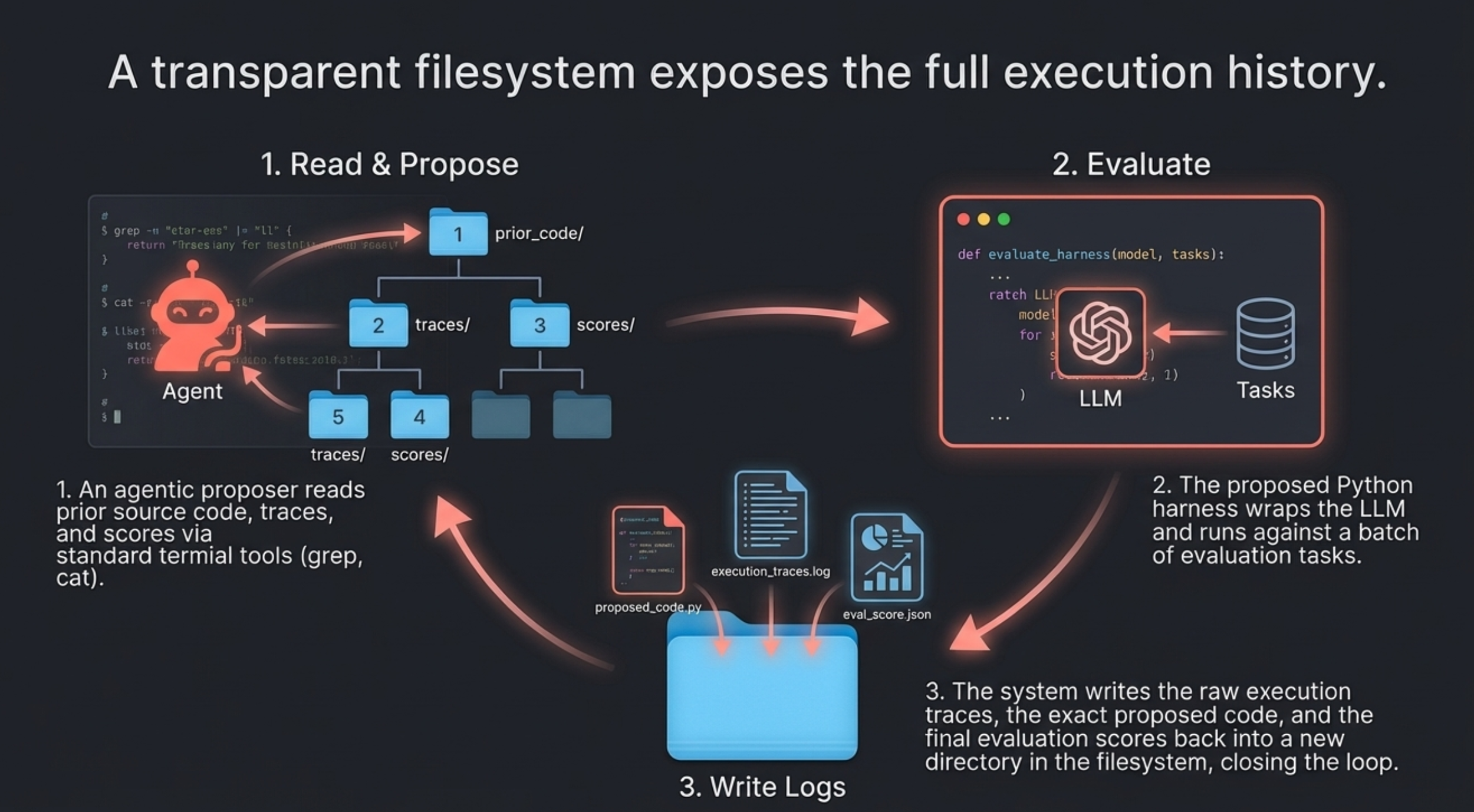

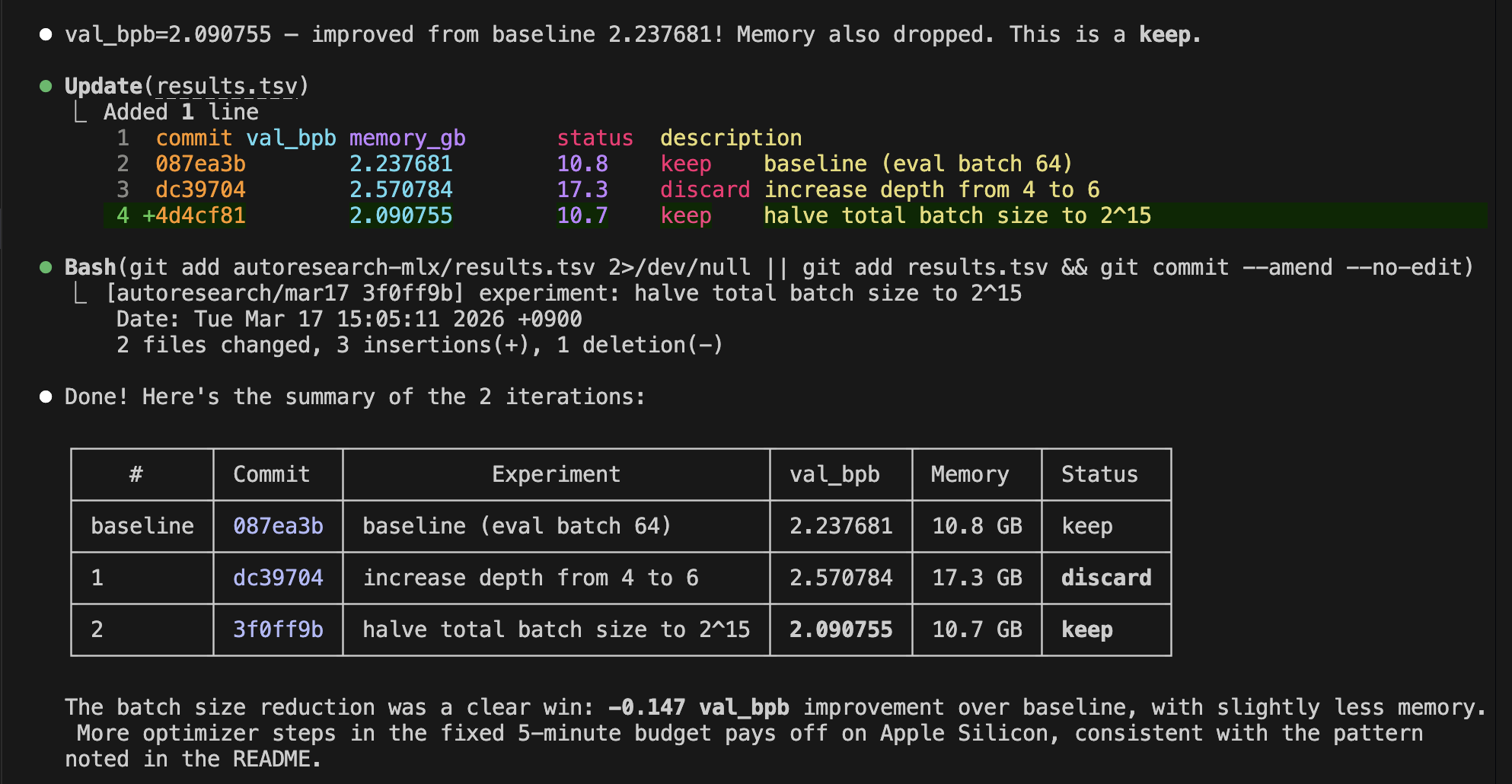

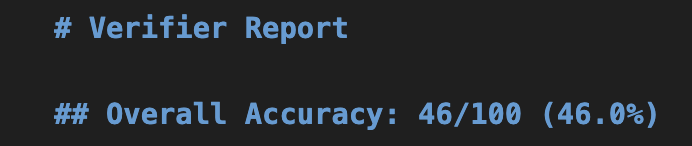

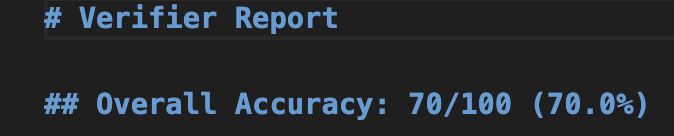

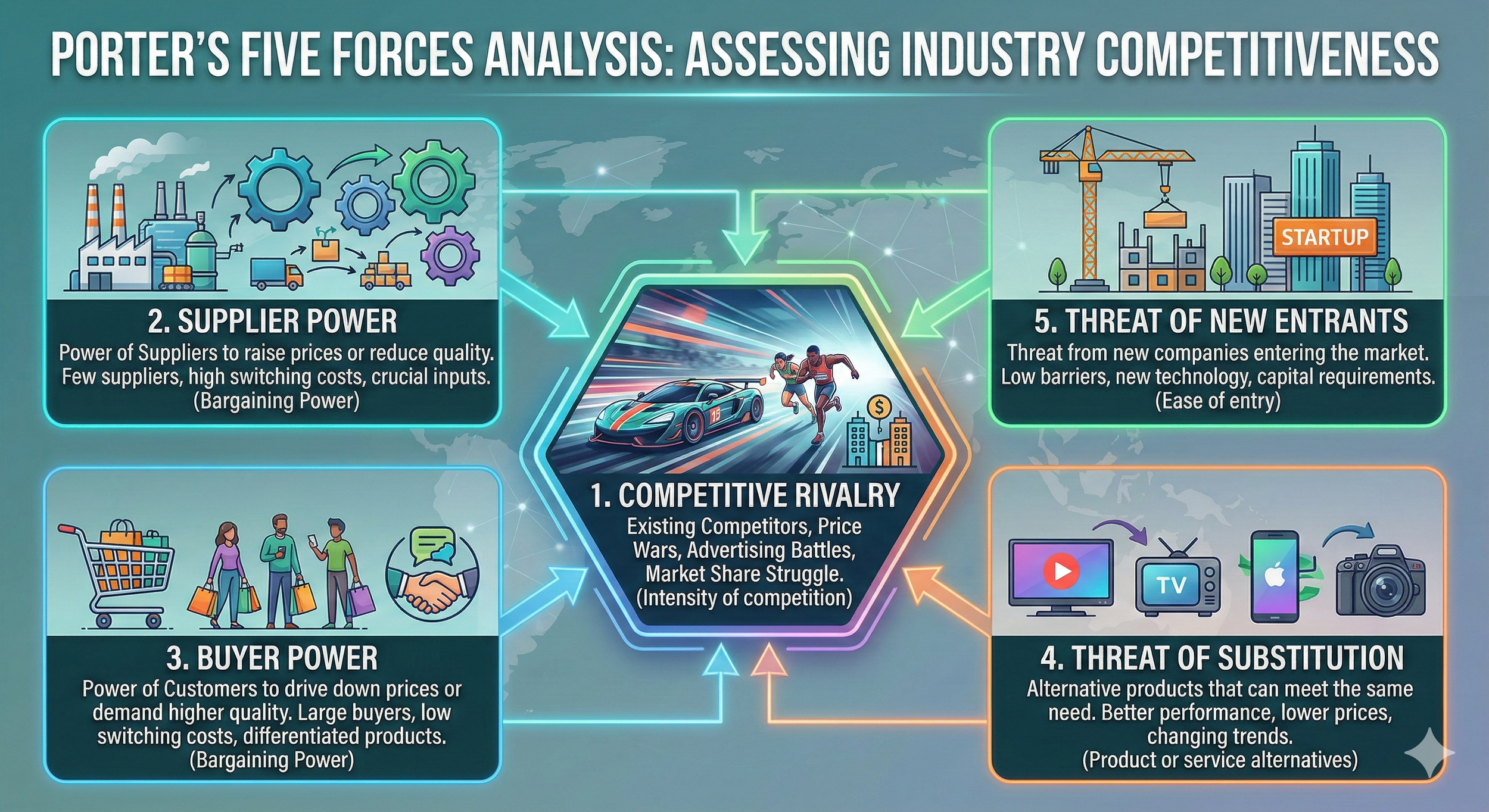

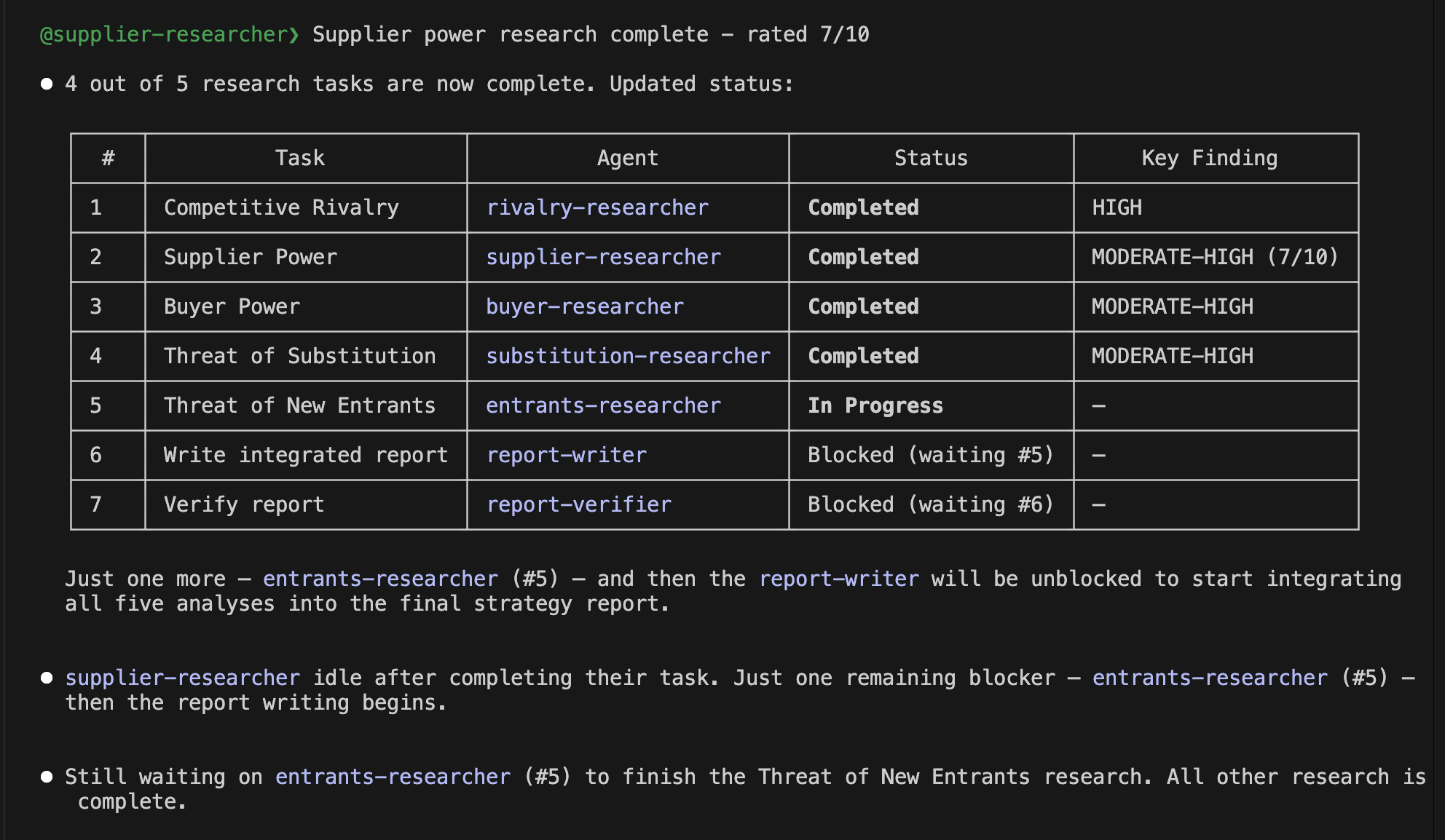

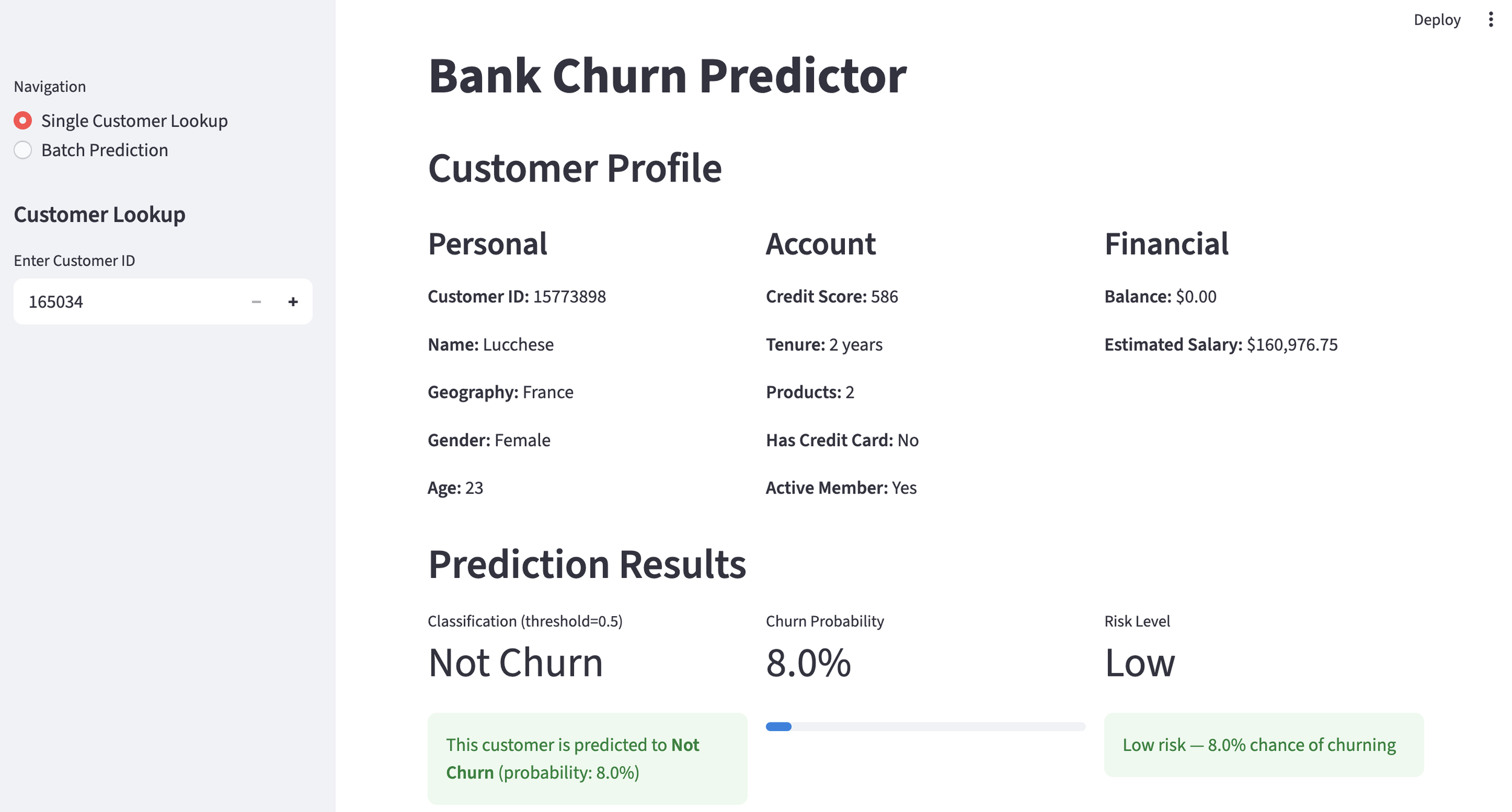

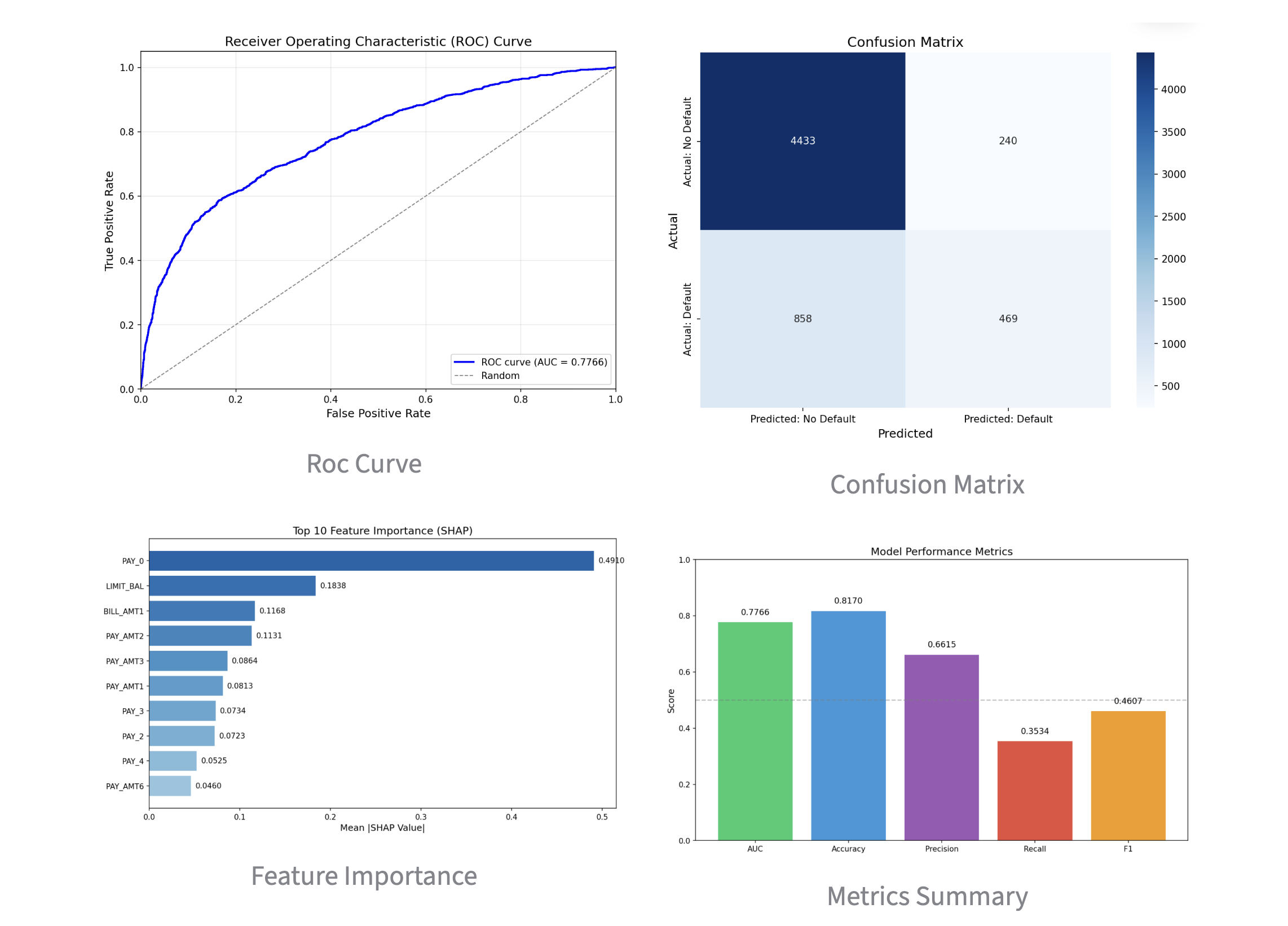

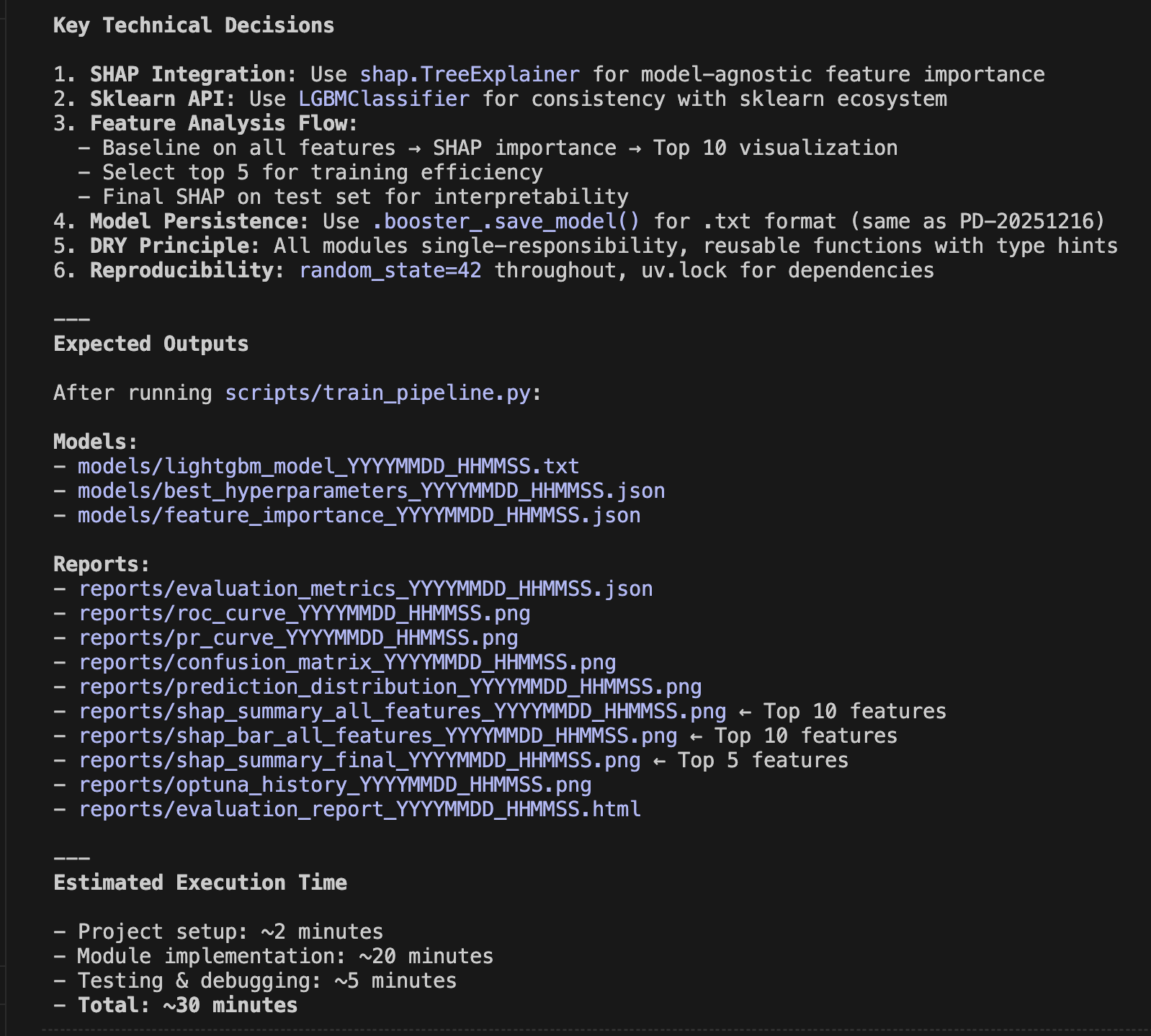

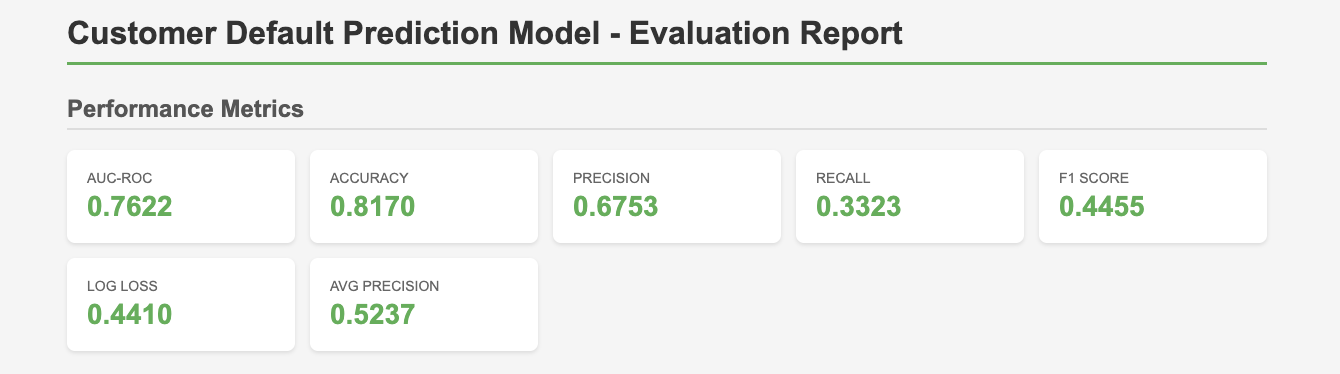

This time, we ran the analysis-and-improvement cycle 9 times, which took over 10 hours in total, and obtained the results below. They show 100 randomly sampled customer complaints classified into 20 classes. You can see that the accuracy gradually improves thanks to the RCA feedback.
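The analysis-and-improvement cycle can be pictured as a simple loop. The sketch below is an assumption about the overall shape, not the implementation Claude Code generated: `rca_loop`, `toy_classify`, and `toy_revise` are hypothetical names, and the keyword-based stubs stand in for what would be LLM calls in the real system.

```python
from typing import Callable

Sample = tuple[str, str]            # (complaint text, true issue)
Failure = tuple[str, str, str]      # (complaint text, true issue, predicted issue)
Principles = dict[str, str]         # toy stand-in for principles: keyword -> issue


def rca_loop(
    samples: list[Sample],
    classify: Callable[[Principles, str], str],
    revise: Callable[[Principles, list[Failure]], Principles],
    principles: Principles,
    max_iters: int = 9,
) -> tuple[Principles, list[float]]:
    """Alternate evaluation and revision; return final principles and accuracy per iteration."""
    history: list[float] = []
    for _ in range(max_iters):
        failures: list[Failure] = []
        for text, truth in samples:
            predicted = classify(principles, text)
            if predicted != truth:
                failures.append((text, truth, predicted))
        history.append(1 - len(failures) / len(samples))
        if not failures:
            break  # nothing left to analyse
        principles = revise(principles, failures)  # feedback to the generator
    return principles, history


def toy_classify(principles: Principles, text: str) -> str:
    """Stub evaluator: return the issue of the first keyword found in the text."""
    for keyword, issue in principles.items():
        if keyword in text:
            return issue
    return "Other"


def toy_revise(principles: Principles, failures: list[Failure]) -> Principles:
    """Stub generator: memorise the first word of each failed complaint."""
    updated = dict(principles)
    for text, truth, _ in failures:
        updated[text.split()[0]] = truth
    return updated


samples = [("fee charged twice", "Fees"), ("login blocked", "Account access")]
final_principles, history = rca_loop(samples, toy_classify, toy_revise, {})
print(history)  # [0.0, 1.0]
```

Note that the toy reviser simply memorizes the failed samples, so it reaches 100% on the data it was tuned on. That is exactly the kind of improvement that may not carry over to new data.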

Accuracy at Each Iteration

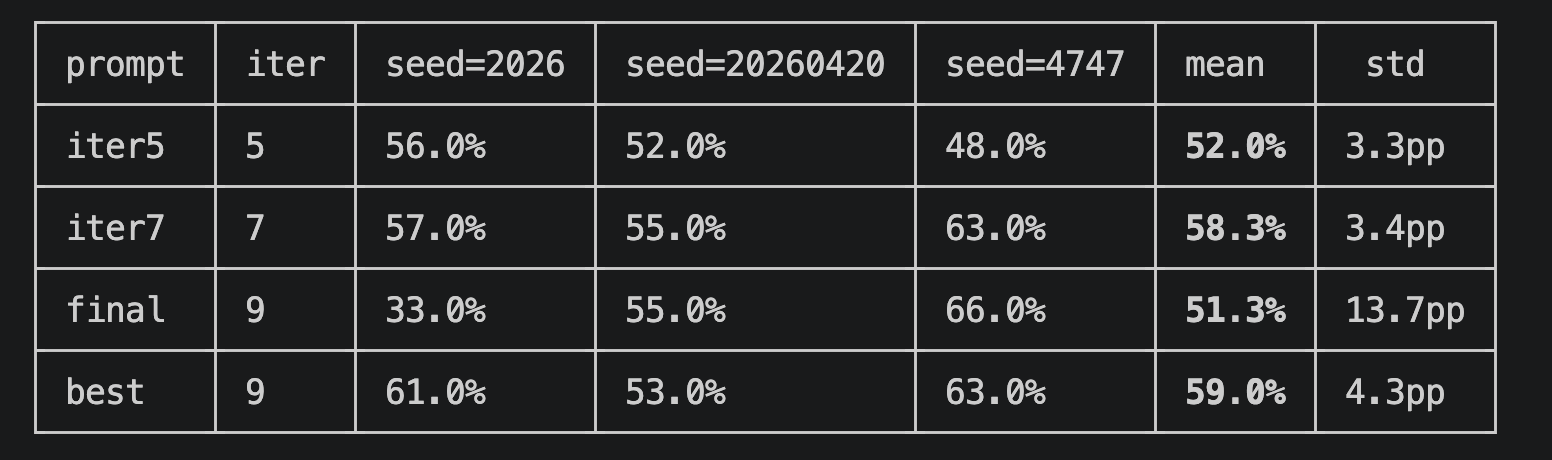

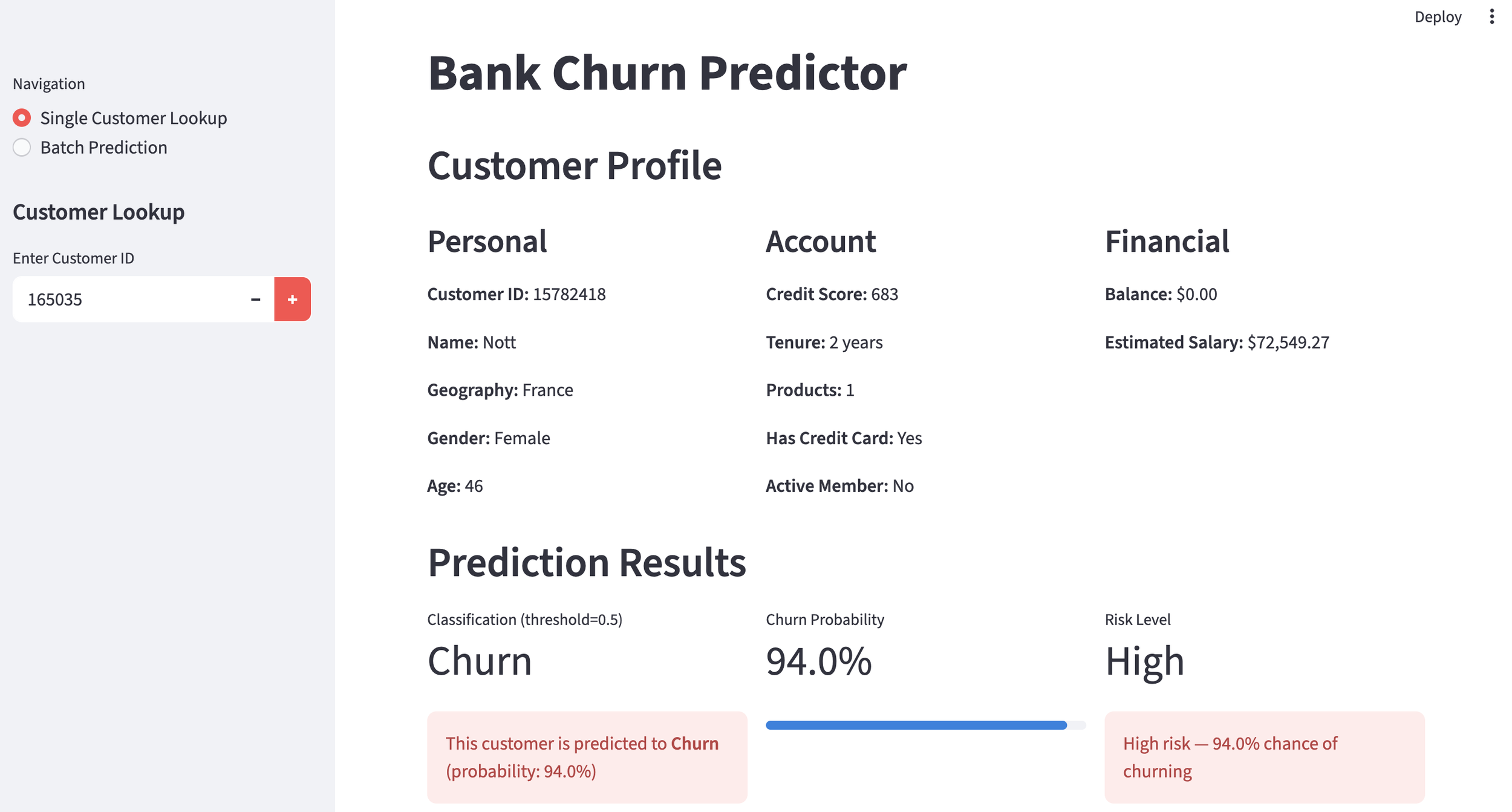

However, repeating the process this many times appears to have caused overfitting. When I validated on newly sampled data, accuracy showed no improvement from iteration 7 onwards.

Accuracy on New Data

3. Results and Challenges This Time

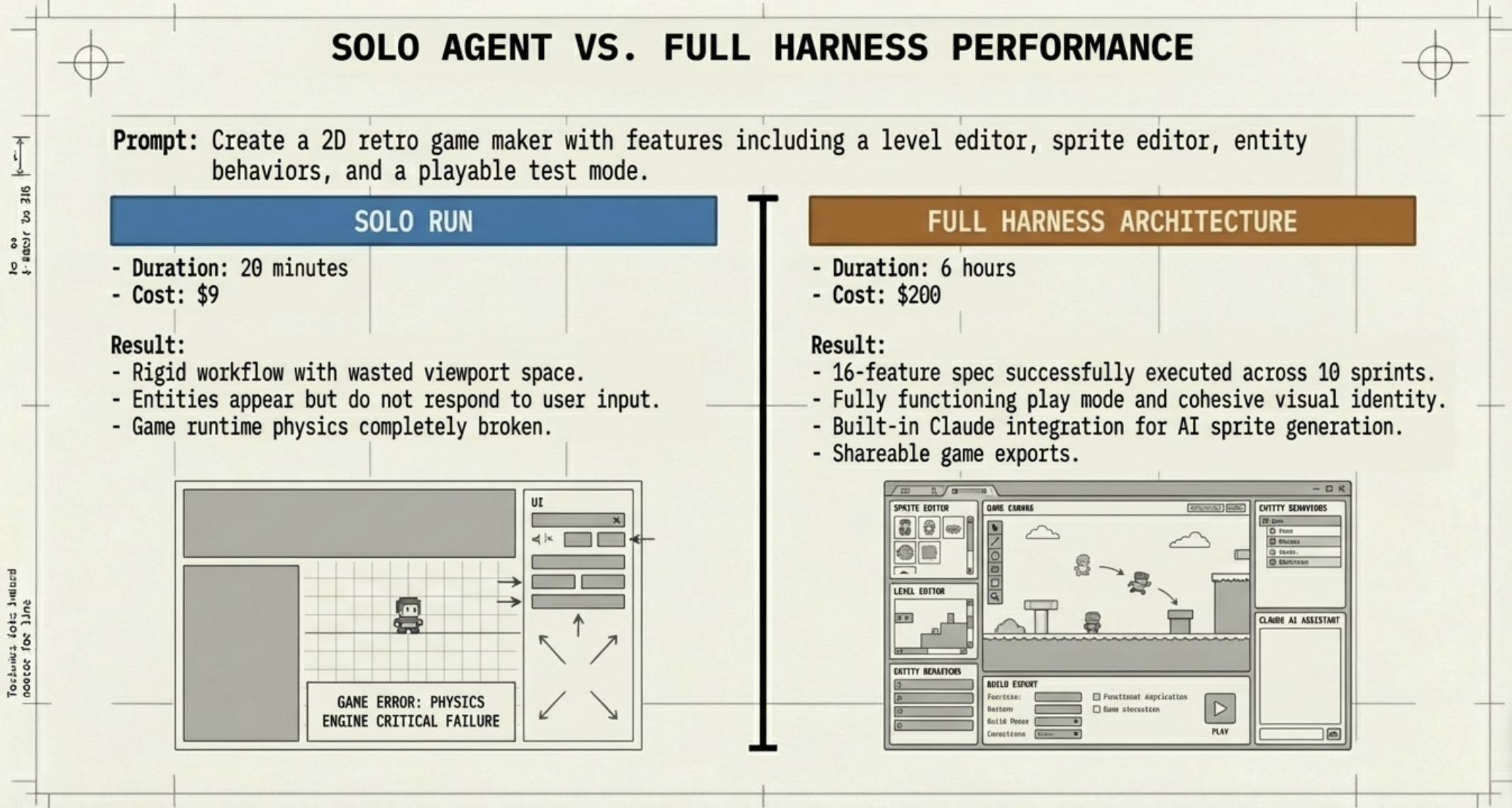

In this bank customer complaint classification task, the baseline accuracy of a generative AI used "out of the box," with nothing special added, was under 40%. By adopting a multi-agent system with a generator and an evaluator and incorporating RCA feedback, we achieved just under 60% accuracy even on new data, so I believe the RCA was effective. However, overfitting set in once accuracy on the original data exceeded 80%, and working out how to prevent this remains a future challenge.
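One common way to limit this kind of overfitting is to keep a held-out set and stop iterating once accuracy on it stops improving. The sketch below illustrates that idea only; it was not part of this experiment, `select_iteration` is a hypothetical helper, and the accuracy values are made up for illustration and are not the results reported here.

```python
def select_iteration(holdout_acc: list[float], patience: int = 2) -> int:
    """Return the 0-based index of the iteration to keep.

    Scanning stops once held-out accuracy has failed to improve for
    `patience` consecutive iterations; the best iteration so far is returned.
    """
    if not holdout_acc:
        raise ValueError("need at least one iteration")
    best = 0
    for i, acc in enumerate(holdout_acc):
        if acc > holdout_acc[best]:
            best = i
        elif i - best >= patience:
            break
    return best


# Made-up numbers for illustration only.
train_acc = [0.40, 0.55, 0.65, 0.75, 0.85, 0.90]
holdout_acc = [0.30, 0.42, 0.50, 0.49, 0.50, 0.48]

best = select_iteration(holdout_acc)
gaps = [t - h for t, h in zip(train_acc, holdout_acc)]
print(f"keep iteration {best + 1}; gap grows from {gaps[0]:.2f} to {gaps[-1]:.2f}")
```

A widening gap between accuracy on the original data and on held-out data is the signal to stop, even when the original-data accuracy is still rising.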

What did you think? This time, I explicitly specified the RCA in the PRD, implemented it as a multi-agent system, and tackled the task of classifying bank customer complaints by cause. While accuracy on new data improved from under 40% to just under 60%, overfitting remained an issue. To reach 70% or higher on new data, another breakthrough may be necessary.

At ToshiStats, we plan to further develop RCA. Please look forward to it. Stay tuned!

You can enjoy our video news ToshiStats AI Weekly Review from this link, too!

1) Consumer Complaint Database

2) CoEvoSkills: Self-Evolving Agent Skills via Co-Evolutionary Verification. Hanrong Zhang, Shicheng Fan, Henry Peng Zou, Yankai Chen, Zhenting Wang, Jiayu Zhou, Chengze Li, Wei-Chieh Huang, Yifei Yao, Kening Zheng, Xue (Steve) Liu, Xiaoxiao Li, Philip S. Yu (University of Illinois Chicago, MBZUAI, McGill University, Columbia University, Zhejiang University, University of British Columbia), April 12, 2026

Copyright © 2026 ToshiStats Co., Ltd. All rights reserved.

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.