"It would be wonderful to have a system where you could give instructions to an AI agent before going to bed, and while you sleep, the AI agent executes the program so that a finished product is ready by the time you wake up in the morning." This is not a story about the future. It is an application called "autoresearch" (1) released on March 6, 2026, and anyone can use it for free. Let’s take a look right away.

1. What is "autoresearch"?

This is a project by the renowned AI researcher Andrej Karpathy. His GitHub repository describes it as "AI agents running research on single-GPU nanochat training automatically." In other words, he has created AI agents that automatically run training experiments on nanochat (2). Nanochat is a small yet high-performance large language model (LLM) that he developed. He usually tunes nanochat by hand as he trains it, and "autoresearch" is a very ambitious attempt to automate that process. According to him, although the project has only just begun, "autoresearch" has already worked very well. For details, please see his post on X (3).

2. Simple is Best

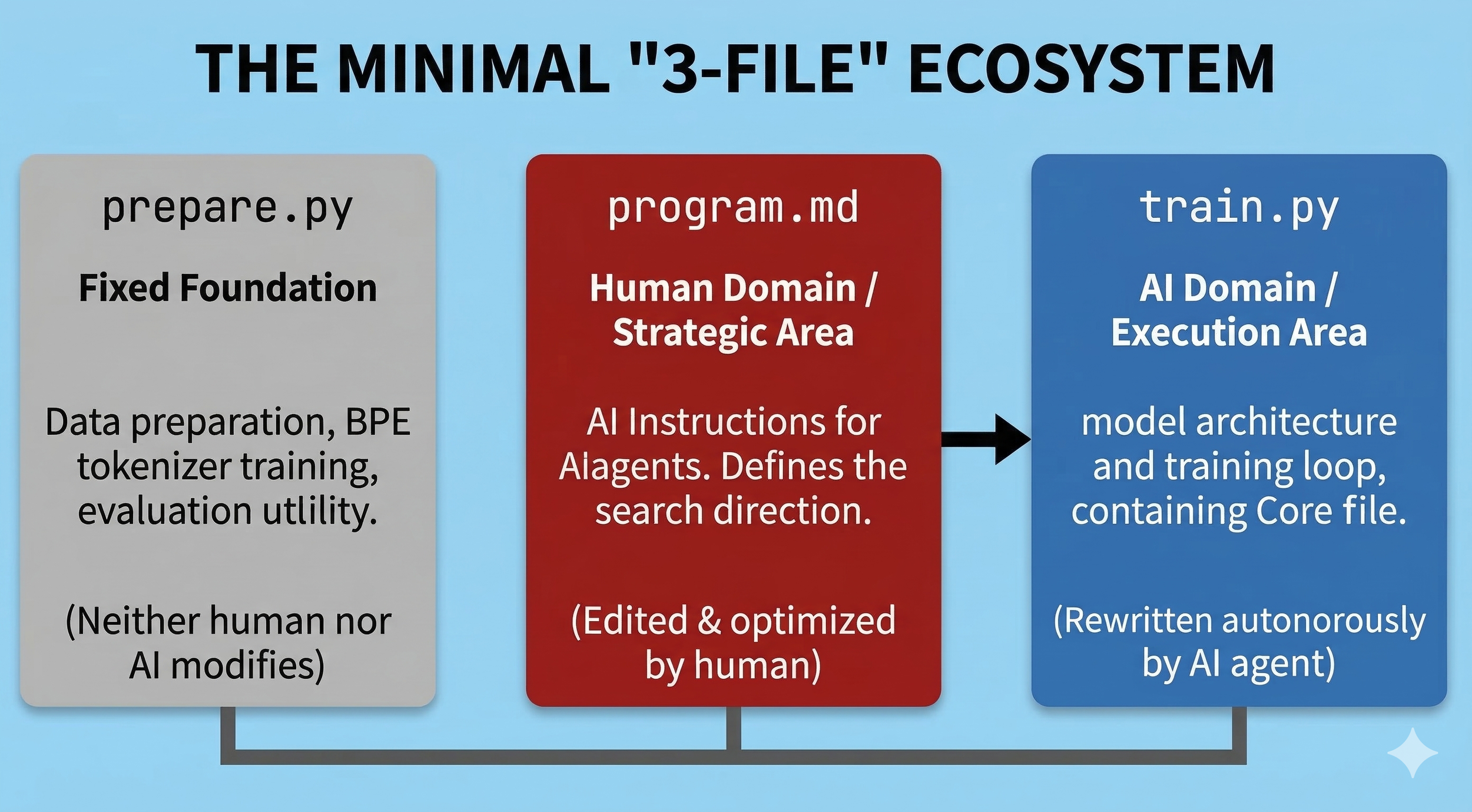

When you hear about automating the training of a large language model, you might imagine a very complex system, but there are only three basic files. Furthermore, the only file a human needs to write directly is program.md. In this file, you write in natural language, such as English or Japanese, "what kind of research team we want to form by launching multiple AI agents and what we want them to do." No programming is required. The AI agent that receives these instructions autonomously writes code in train.py to improve the accuracy of nanochat. The final file, prepare.py, is never updated during training. It serves as the foundation for the experiment, so it remains the same until the end. It is a very simple structure. I highly recommend checking Andrej Karpathy’s GitHub for the contents of each file; it will be very informative. I have summarized the overview briefly below.

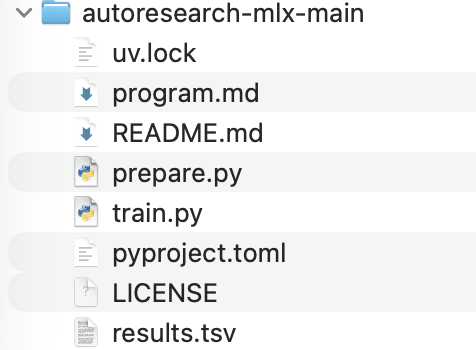

This is the Mac version of the autoresearch repository that I ran this time. You can see the three files I introduced above. The file structure is extremely simple, and I believe anyone can handle it.

3. Running on a MacBook Air

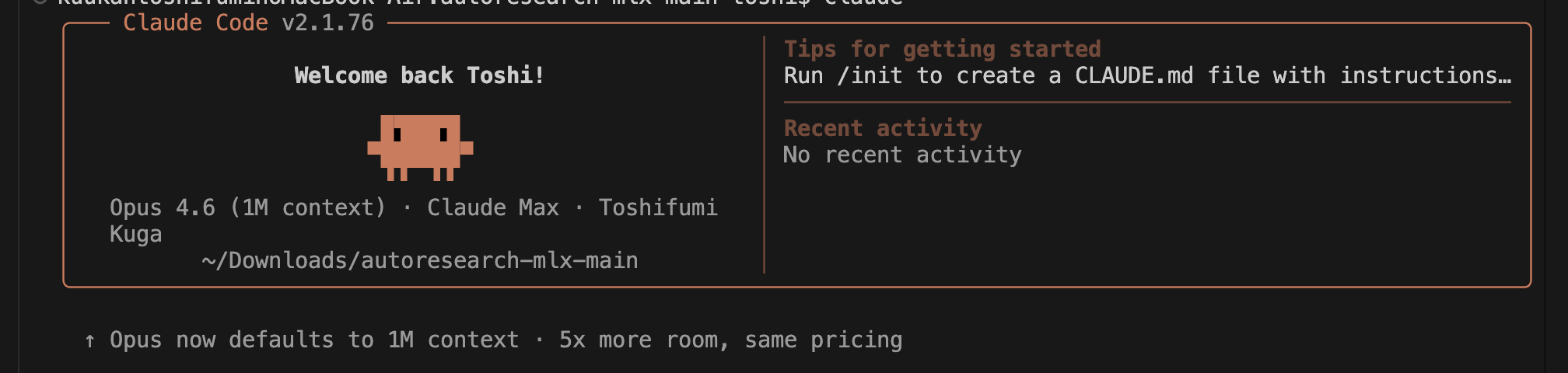

Now, let's run it on my MacBook Air. This Mac was purchased exactly one year ago and is equipped with an M4 chip and 24GB of RAM. Claude Code is active as the development environment once again. It is on duty at our company almost every day.

Claude Code

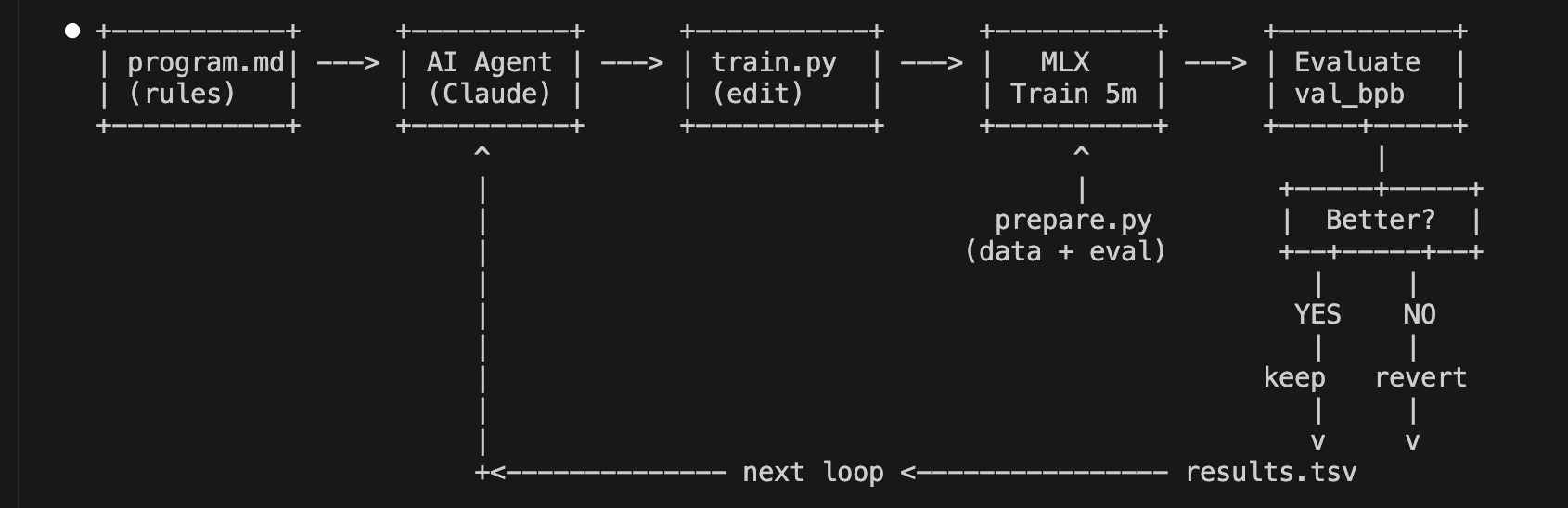

When I asked Claude Code to draw a diagram, it looked like the one below. It is simple and easy to understand. The second item from the right, MLX Train 5m, means that a 5-minute training session is repeated many times, so about 12 sessions fit into one hour. The item on the far right, Evaluate val_bpb, means "evaluate the metric val_bpb (validation bits per byte) and check whether the value is steadily decreasing." If the value decreases, it means the accuracy is improving. If not, that session is discarded, and training continues from the previous state. If you let this run while you sleep, at about 12 sessions per hour you can conduct roughly 100 experiments in a single night.

autoresearch Training Process
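The keep-or-discard cycle described above can be sketched in a few lines of Python. This is only a minimal illustration, not the code in the autoresearch repository: `run_experiments`, `propose`, and `evaluate` are hypothetical names, the "model" is a single number, and the fake metric stands in for a real 5-minute training run followed by a val_bpb evaluation. The 8 hours of sleep used for the nightly estimate is also my assumption.

```python
import random

TRAIN_MINUTES = 5                            # length of one training session
SESSIONS_PER_HOUR = 60 // TRAIN_MINUTES      # 12 sessions per hour
SESSIONS_PER_NIGHT = SESSIONS_PER_HOUR * 8   # 96, assuming 8 hours of sleep


def run_experiments(propose, evaluate, baseline, n_rounds):
    """Keep a candidate only when its val_bpb is lower than the best so far."""
    best_state = baseline
    best_bpb = evaluate(baseline)
    history = [best_bpb]                     # best val_bpb after each round
    for _ in range(n_rounds):
        candidate = propose(best_state)      # stand-in for the agent editing train.py
        bpb = evaluate(candidate)            # stand-in for a 5-minute run + evaluation
        if bpb < best_bpb:
            best_state, best_bpb = candidate, bpb   # improvement: keep it
        # otherwise the candidate is discarded and we continue from best_state
        history.append(best_bpb)
    return best_state, best_bpb, history


# Toy stand-ins so the sketch runs on its own (hypothetical, not real training).
rng = random.Random(0)


def propose(state):
    return state + rng.uniform(-0.05, 0.05)


def evaluate(state):
    return abs(state - 1.0) + 1.0            # pretend val_bpb, lowest at state = 1.0


best_state, best_bpb, history = run_experiments(propose, evaluate, 0.5, SESSIONS_PER_HOUR)
print(SESSIONS_PER_NIGHT, history)
```

Because a worse candidate is always thrown away, the recorded best value can never go up. The nightly estimate ignores the time the agent spends thinking and editing between sessions, so the real count may be lower.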

Andrej Karpathy describes this design as follows: “Self-contained. No external dependencies beyond PyTorch and a few small packages. No distributed training, no complex configs. One GPU, one file, one metric.”

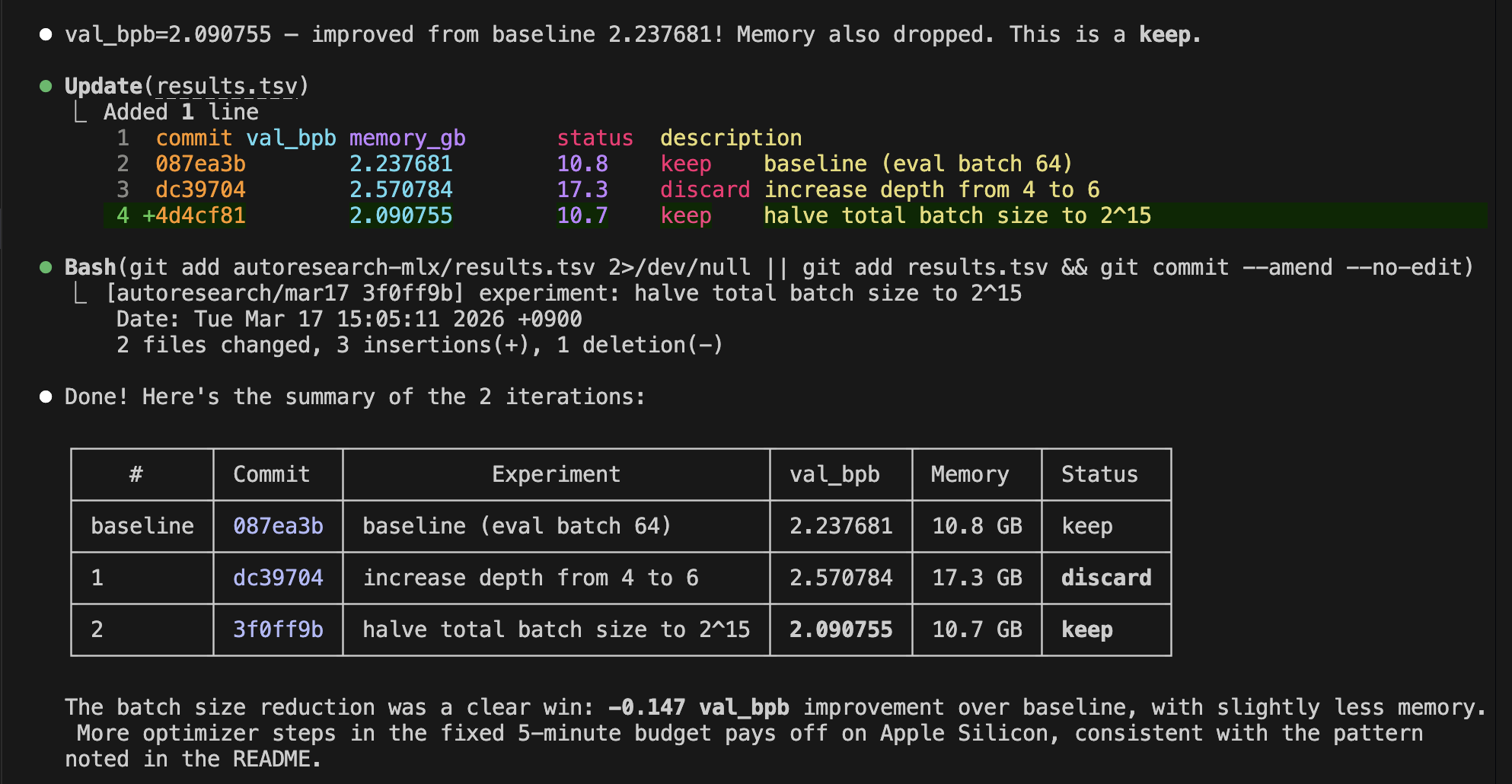

Since I only wanted to confirm that it works properly this time, I ran the loop just three times. As seen below, the evaluation metric did indeed decrease, showing that the training progressed smoothly. During this time, I gave no instructions at all. It’s amazing. It truly is "autoresearch"!

Trends in Evaluation Metric Values
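For readers wondering what "bits per byte" measures, here is a minimal sketch of the standard calculation. It assumes the per-token losses are cross-entropy values in nats (natural-log units) and that the byte count of the validation text is known. The function name `bits_per_byte` and the sample numbers are hypothetical, and the evaluation code in the actual repository may be organized differently.

```python
import math


def bits_per_byte(token_losses_nats, total_bytes):
    """Convert summed per-token cross-entropy (in nats) into bits per byte of text."""
    total_nats = sum(token_losses_nats)
    total_bits = total_nats / math.log(2)    # 1 nat = 1 / ln(2) bits
    return total_bits / total_bytes


# Hypothetical example: 3 tokens, each costing 4 bits, covering 6 bytes of text.
losses = [4 * math.log(2)] * 3
bpb = bits_per_byte(losses, total_bytes=6)   # 12 bits over 6 bytes, about 2.0
print(round(bpb, 6))
```

Dividing by bytes rather than by tokens keeps the metric comparable even when the tokenizer changes, which is one reason this kind of metric suits an automated experiment loop.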

What did you think? Andrej Karpathy stated in his post on X (3):

“All LLM frontier labs will do this.”

“any metric you care about that is reasonably efficient to evaluate (or that has more efficient proxy metrics such as training a smaller network) can be autoresearched by an agent swarm. It's worth thinking about whether your problem falls into this bucket too.”

You, too, might be able to create your own AI lab using a Mac. It is a wonderful thing. At ToshiStats, we will continue to conduct experiments incorporating cutting-edge technology. Stay tuned!

You can enjoy our video news ToshiStats AI Weekly Review from this link, too!

1) autoresearch, Andrej Karpathy, March 6, 2026

2) nanochat, Andrej Karpathy, October 13, 2025

3) https://x.com/karpathy/status/2031135152349524125

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.