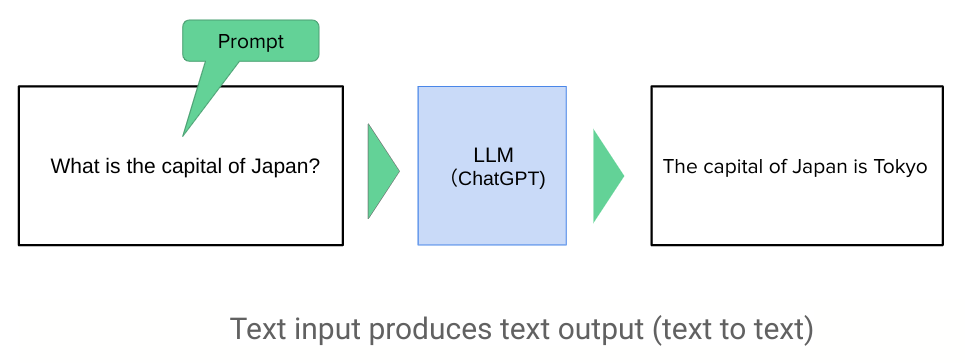

Since the debut of ChatGPT at the end of November 2022, the way we give instructions to computers has completely changed. Previously, programming languages like Python were necessary, but with ChatGPT, it's now possible to give instructions using the "natural languages" we use every day, such as English and Japanese. These natural language instructions are called "prompts." It has been about two and a half years since prompts came into use, and many people are likely experimenting with various prompts daily. As this is a new technology, systematically learning it can be challenging. However, Google has released a free white paper (1) of over 60 pages on the topic, so let's explore it for some hints. Let's begin!

1. Grasping the Basic Concepts

We often see simple prompt guides like "The Top 20 Prompts You Need to Know." However, a generative AI holds a vast amount of knowledge, and it's impossible to interact with it effectively using only 20 or so prompts. Such lists may look like a shortcut, but memorizing a new set of 20 recommended prompts for every purpose is laborious and inefficient. Researchers are actively studying how to write prompts and the theory behind why they work. While it's difficult for the average person to follow all of it, Google's white paper concisely summarizes the main techniques as follows:

Zero-shot prompting

Few-shot prompting

System prompting

Role prompting

Contextual prompting

Step-back prompting

Chain of thought

Self-consistency

Tree of thoughts

For example, the second method, "Few-shot prompting," is a technique to elicit more accurate answers from a generative AI by providing it with specific examples in "question and answer pairs." The other methods also have their own theoretical backgrounds and wide ranges of application. Rather than rote memorization, it's important to first understand the concepts and then apply them. I cannot explain them all here, so I encourage you to read the original document. I recommend taking your time to learn them one by one.
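To make few-shot prompting concrete, here is a minimal Python sketch. It doesn't call any AI service; it only assembles the prompt text, placing a few question and answer pairs in front of the real question so the model can follow the pattern. The function name `build_few_shot_prompt` and the sentiment-classification examples are my own hypothetical illustrations, not taken from the white paper.

```python
# Hypothetical sketch of few-shot prompting: the prompt is plain text that
# shows the model a few "question and answer pairs" before the real question.

def build_few_shot_prompt(instruction, examples, query):
    """Combine an instruction, Q&A example pairs, and a new question into one prompt."""
    lines = [instruction, ""]
    for question, answer in examples:
        lines.append(f"Q: {question}")
        lines.append(f"A: {answer}")
        lines.append("")  # blank line between examples
    # End with an empty answer slot so the model continues the pattern.
    lines.append(f"Q: {query}")
    lines.append("A:")
    return "\n".join(lines)


# Hypothetical example pairs for a sentiment-classification task.
examples = [
    ("The delivery arrived two days early. Positive or negative?", "Positive"),
    ("The screen cracked after one week. Positive or negative?", "Negative"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of each customer review.",
    examples,
    "Support answered my question within minutes. Positive or negative?",
)
print(prompt)
```

Passing an empty list of examples turns the same function into a zero-shot prompt, which makes it easy to compare the two approaches side by side.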

2. Memorize Useful Words

That said, taking the first step to actually write a prompt can be quite daunting. Google has provided a list of recommended verbs, which I'd like to introduce here. Choosing from these verbs to craft your prompts might help you create good ones, so it's worth a try.

Act, Analyze, Categorize, Classify, Contrast, Compare, Create, Describe, Define, Evaluate, Extract, Find, Generate, Identify, List, Measure, Organize, Parse (break sentences or data down into their grammatical or structural parts), Pick, Predict, Provide, Rank, Recommend, Return, Retrieve (information, etc.), Rewrite, Select, Show, Sort, Summarize, Translate, Write

When you're unsure what to write, these verbs might give you a hint. This list includes many that I frequently use myself.
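If you want to turn this into a habit, a tiny check like the one below can flag draft prompts that don't open with one of these verbs. This is a hypothetical helper of my own, a rough heuristic rather than anything the white paper prescribes, and it only looks at the first word of the prompt.

```python
# Hypothetical helper: checks whether a draft prompt starts with one of the
# action verbs recommended in Google's white paper (list copied from above).
ACTION_VERBS = {
    "act", "analyze", "categorize", "classify", "contrast", "compare",
    "create", "describe", "define", "evaluate", "extract", "find",
    "generate", "identify", "list", "measure", "organize", "parse",
    "pick", "predict", "provide", "rank", "recommend", "return",
    "retrieve", "rewrite", "select", "show", "sort", "summarize",
    "translate", "write",
}


def starts_with_action_verb(prompt):
    """Return True if the first word of the prompt is a recommended verb."""
    words = prompt.strip().split()
    if not words:
        return False  # an empty prompt has no verb at all
    # Ignore trailing punctuation such as "Summarize:" and compare case-insensitively.
    first_word = words[0].strip(".,:;!?").lower()
    return first_word in ACTION_VERBS


print(starts_with_action_verb("Summarize the following article in three bullet points."))  # True
print(starts_with_action_verb("Can you tell me about this article?"))  # False
```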

3. Finding Hints from Actual Examples

When you actually try out prompts, you'll find that some cases work well while others don't. The white paper summarizes these into 15 Best Practices. Here, I'll introduce an example from page 56.

Be specific about the output

Be specific about the desired output. A concise instruction might not guide the LLM enough or could be too generic. Providing specific details in the prompt (through system or context prompting) can help the model to focus on what’s relevant, improving the overall accuracy.

Examples:

DO:

Generate a 3 paragraph blog post about the top 5 video game consoles. The blog post should be informative and engaging, and it should be written in a conversational style.

DO NOT:

Generate a blog post about video game consoles.

Indeed, we tend to write simple prompts like the bad example. However, if we add a few concrete details, as the good example does by specifying the length (3 paragraphs), the scope (the top 5 consoles), and the tone (conversational), the output will be far better tailored to our needs. Just knowing this can change how you write prompts from now on. This white paper is full of such examples, so I highly recommend reading it yourself.
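One way to make sure these details are never forgotten is to fill them in from a template. The sketch below is a hypothetical helper (`build_blog_prompt` and its parameters are my own names, not from the white paper) that rebuilds the good example from explicit choices of length, scope, and tone, so none of them can be silently left out.

```python
# Hypothetical template: every detail that makes the prompt specific is a
# required parameter, so a vague prompt cannot be produced by accident.

def build_blog_prompt(topic, paragraphs, top_n, tone):
    """Build a specific blog-post prompt from length, scope, and tone."""
    return (
        f"Generate a {paragraphs} paragraph blog post about the top {top_n} {topic}. "
        "The blog post should be informative and engaging, "
        f"and it should be written in a {tone} style."
    )


vague = "Generate a blog post about video game consoles."
specific = build_blog_prompt("video game consoles", paragraphs=3, top_n=5, tone="conversational")
print(specific)
```

Changing `tone` to "formal" or `top_n` to 3 gives a new prompt that is just as specific as the original good example.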

How was that? I hope this serves as a reference for your prompt learning journey. Prompt engineering is still in its infancy, making it a great time to start learning. Let's conclude with a message from Google: "You don’t need to be a data scientist or a machine learning engineer – everyone can write a prompt. (1)"

Stay tuned!

Copyright © 2025 Toshifumi Kuga. All rights reserved.

1) "Prompt Engineering", Google, Feb 2025

Notice: ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the codes and the software.