Discussion has recently picked up around a simple idea: an AI agent's performance can improve significantly when we optimize what information it receives and when it receives it. In this post, drawing on a recent research paper, we explore the possibility of "Recursive Self-Improvement of AI Agents," where agents improve their own performance. Let's dive in.

1. Meta-Harness: A New Methodology for Harness Construction

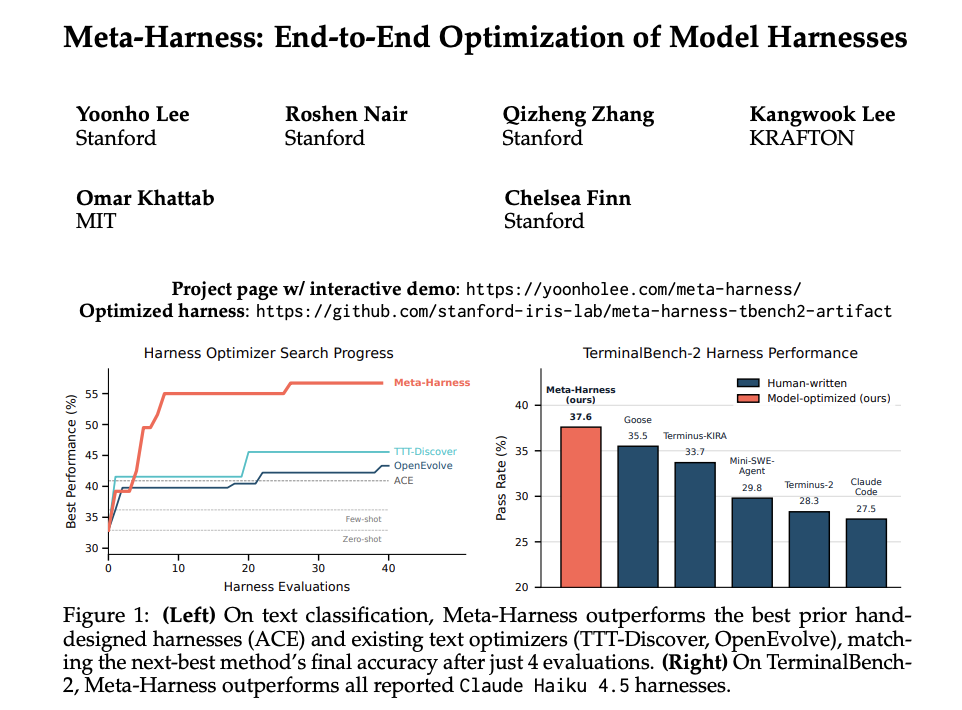

A paper (1) from Stanford University introduces Meta-Harness, a novel approach to harness construction that significantly boosts accuracy. In my view, its two major features are:

Full access to past information

Adoption of Claude Code

The paper defines a "harness" as follows:

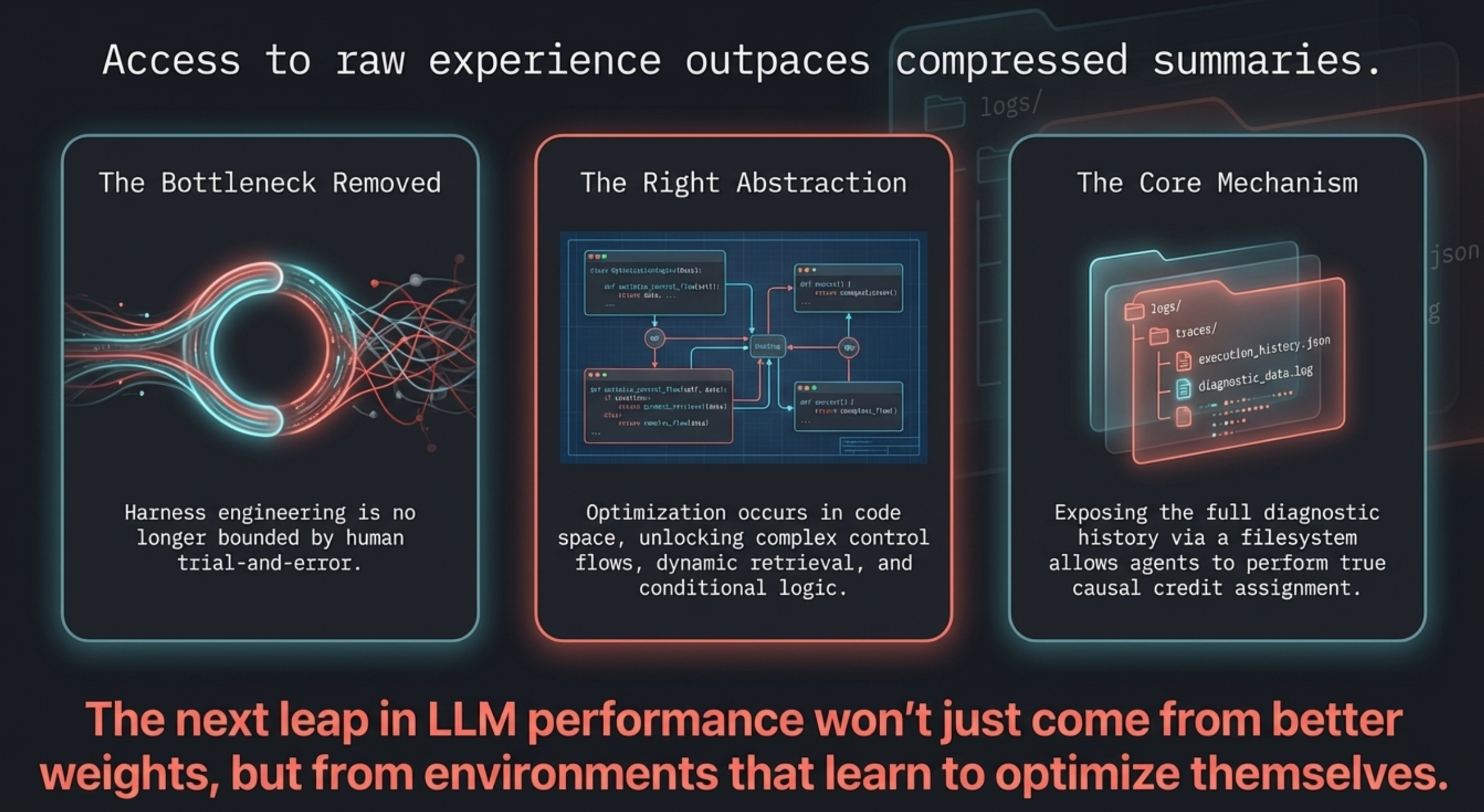

The performance of large language model (LLM) systems depends not only on model weights, but also on their harness: the code that determines what information to store, retrieve, and present to the model.

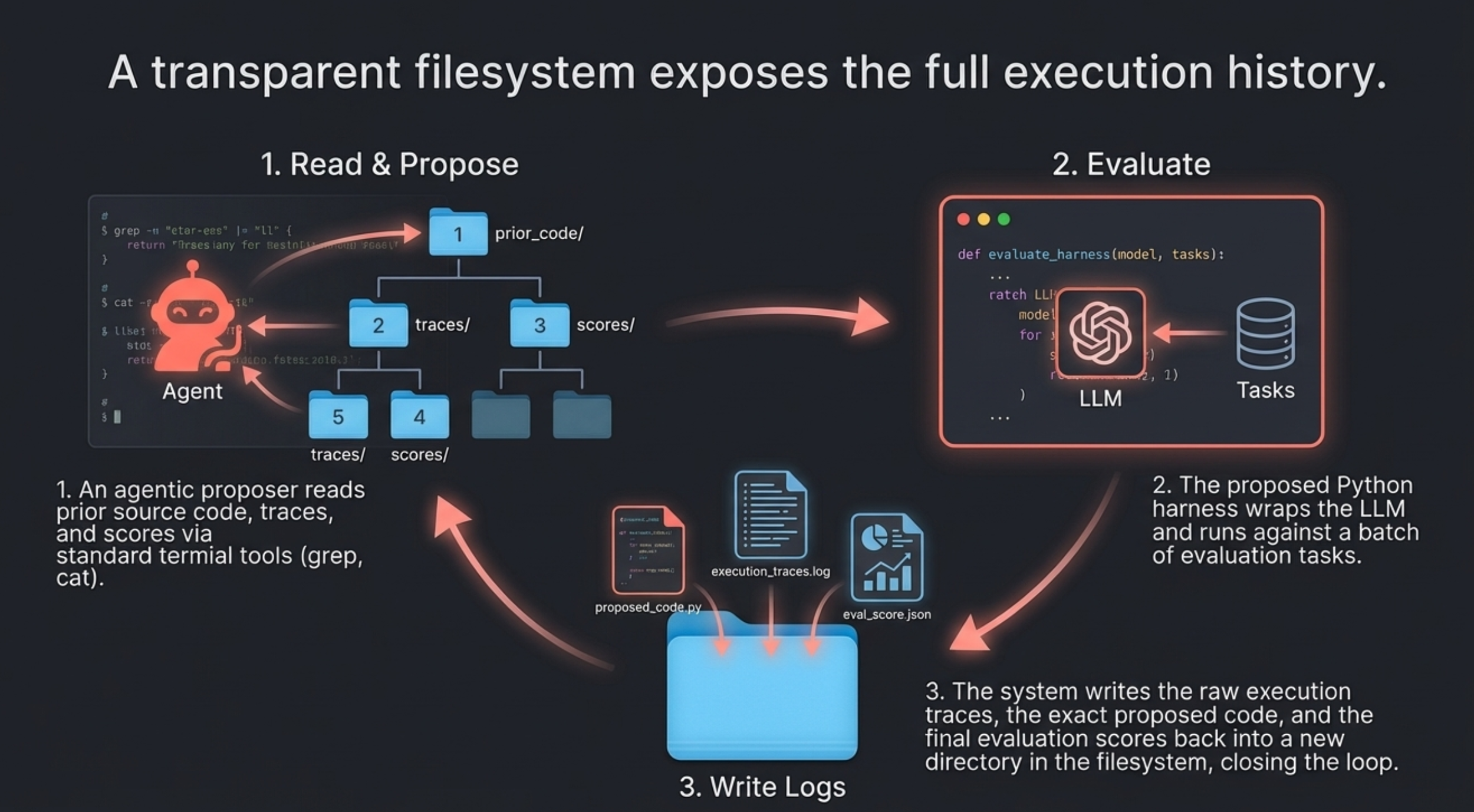

Simply put, a harness is the code surrounding a generative AI model that controls what data the model sees, with the aim of maximizing its performance. For an AI agent to build such a harness well, it appears to need as much access to past data as possible.
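To make the definition concrete, here is a minimal sketch of a harness in Python. It is an illustration under my own assumptions, not code from the paper: `SimpleHarness`, `echo_model`, and the keyword-overlap retrieval are hypothetical stand-ins chosen to show the three responsibilities in the quote (store, retrieve, present).

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SimpleHarness:
    """Hypothetical harness: decides what to store, retrieve, and present."""

    model: Callable[[str], str]      # any text-in, text-out callable
    max_notes: int = 3               # how many stored notes to present
    memory: List[str] = field(default_factory=list)

    def store(self, note: str) -> None:
        """Keep a note for later use."""
        self.memory.append(note)

    def retrieve(self, query: str) -> List[str]:
        """Rank notes by naive keyword overlap (a stand-in for real retrieval)."""
        words = set(query.lower().split())
        ranked = sorted(
            self.memory,
            key=lambda note: len(words & set(note.lower().split())),
            reverse=True,
        )
        return ranked[: self.max_notes]

    def build_prompt(self, query: str) -> str:
        """Decide what the model actually sees."""
        notes = "\n".join(f"- {note}" for note in self.retrieve(query))
        return f"Notes:\n{notes}\n\nQuestion: {query}"

    def run(self, query: str) -> str:
        return self.model(self.build_prompt(query))


def echo_model(prompt: str) -> str:
    """Stub model (assumption): reports how many prompt lines it received."""
    return f"saw {len(prompt.splitlines())} lines"
```

Changing `max_notes` or the ranking rule changes what the model sees without touching the model itself, which is the kind of design decision a harness search could explore.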

Running the loop shown below makes "Recursive Self-Improvement" possible: the agent learns from past failures to improve itself.

Meta-Harness
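The loop can be sketched roughly as follows. This is a toy under stated assumptions, not the paper's algorithm: the harness is reduced to a single `max_notes` setting, `evaluate` is a made-up benchmark, and `toy_proposer` is a random perturbation standing in for the coding agent. Only the propose, evaluate, and record structure is the point.

```python
import random
from typing import Dict, List

Harness = Dict[str, int]   # hypothetical: a harness is just a small config here


def evaluate(harness: Harness) -> float:
    """Toy benchmark (assumption): score peaks when max_notes == 5."""
    return 1.0 - abs(harness["max_notes"] - 5) / 10.0


def toy_proposer(history: List[dict], rng: random.Random) -> Harness:
    """Stand-in for the coding agent: perturb the best harness seen so far."""
    if not history:
        return {"max_notes": 1}
    best = max(history, key=lambda record: record["score"])
    step = rng.choice([-1, 1])
    return {"max_notes": max(1, best["harness"]["max_notes"] + step)}


def search(iterations: int, seed: int = 0) -> List[dict]:
    """Propose, evaluate, and record; the proposer sees the whole history."""
    rng = random.Random(seed)
    history: List[dict] = []
    for i in range(iterations):
        candidate = toy_proposer(history, rng)
        score = evaluate(candidate)
        history.append({"iteration": i, "harness": candidate, "score": score})
    return history
```

Calling `search(20)` returns every candidate and its score, so the final choice can be made from the full record rather than from the last iteration alone.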

2. Full Access to Information: The Secret to Improved Accuracy

Earlier methods for constructing harnesses had to summarize or compress large amounts of past information in some form. Critical information was often lost in the process, which created a bottleneck when aiming for higher accuracy.

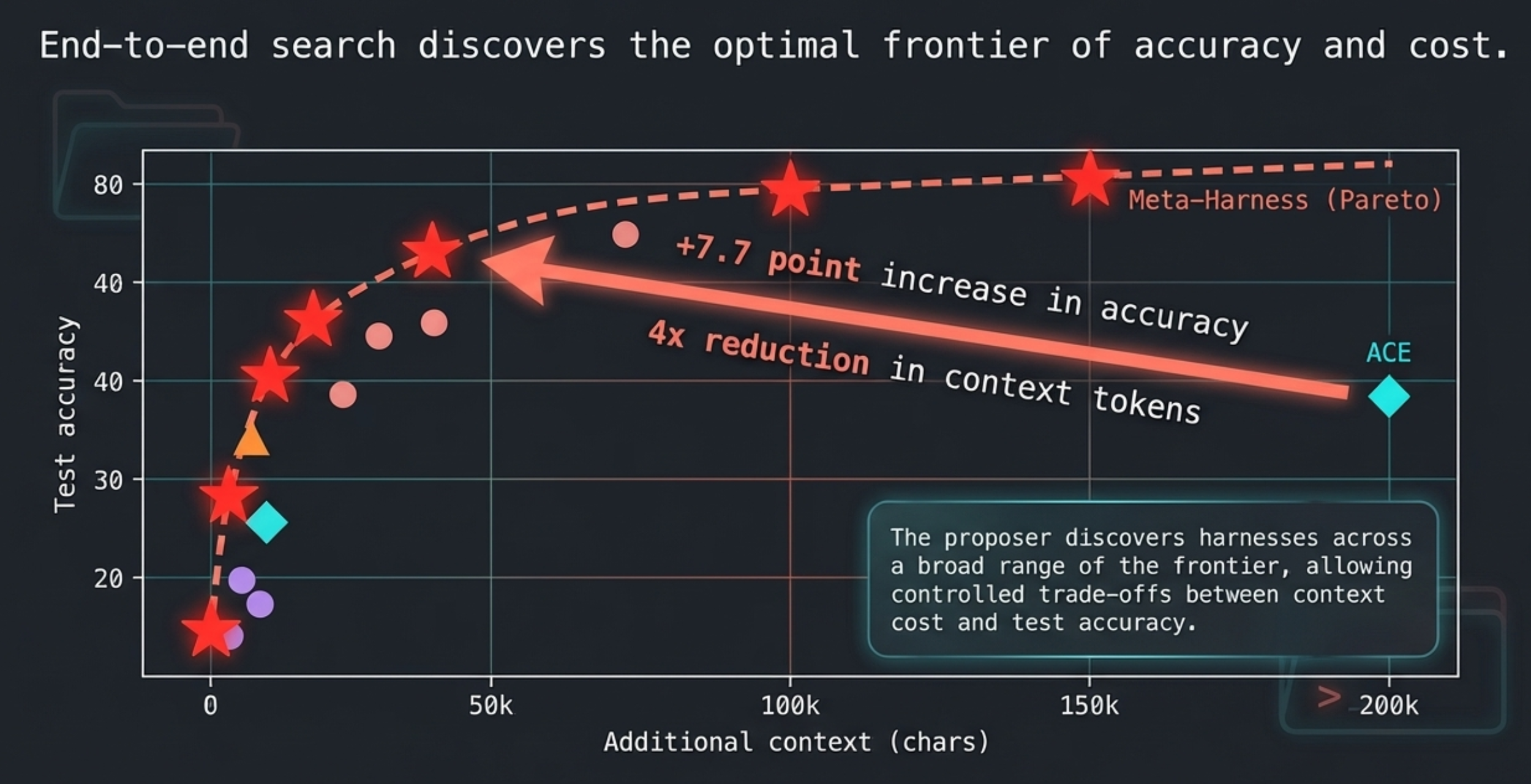

"Meta-Harness" addresses this by granting the proposer (the agent that writes new harnesses) access to all past logs and files. Because the agent can see everything, with nothing hidden or summarized away, this structure removes the bottleneck. As a result, it achieved excellent performance on the Pareto frontier, as shown below.

Pareto Frontier

This graph plots accuracy against the amount of additional information (context) supplied. Points closer to the top-left reach higher accuracy with less context, which indicates better performance.
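For readers who want the "top-left is better" idea in code, here is a small sketch that extracts a Pareto frontier from (context tokens, accuracy) pairs. The function name and any numbers you feed it are illustrative assumptions, not results from the paper.

```python
from typing import List, Tuple

Point = Tuple[int, float]   # (context tokens, accuracy)


def pareto_frontier(points: List[Point]) -> List[Point]:
    """Return the points that no other point beats on both axes.

    A point is kept only if its accuracy is strictly higher than that of
    every point using the same or fewer context tokens.
    """
    frontier: List[Point] = []
    best_accuracy = float("-inf")
    # Sort by ascending context; break ties by descending accuracy.
    for tokens, accuracy in sorted(points, key=lambda p: (p[0], -p[1])):
        if accuracy > best_accuracy:
            frontier.append((tokens, accuracy))
            best_accuracy = accuracy
    return frontier
```

A harness that uses more context without gaining accuracy drops off the frontier, which is why points toward the top-left of the graph are the interesting ones.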

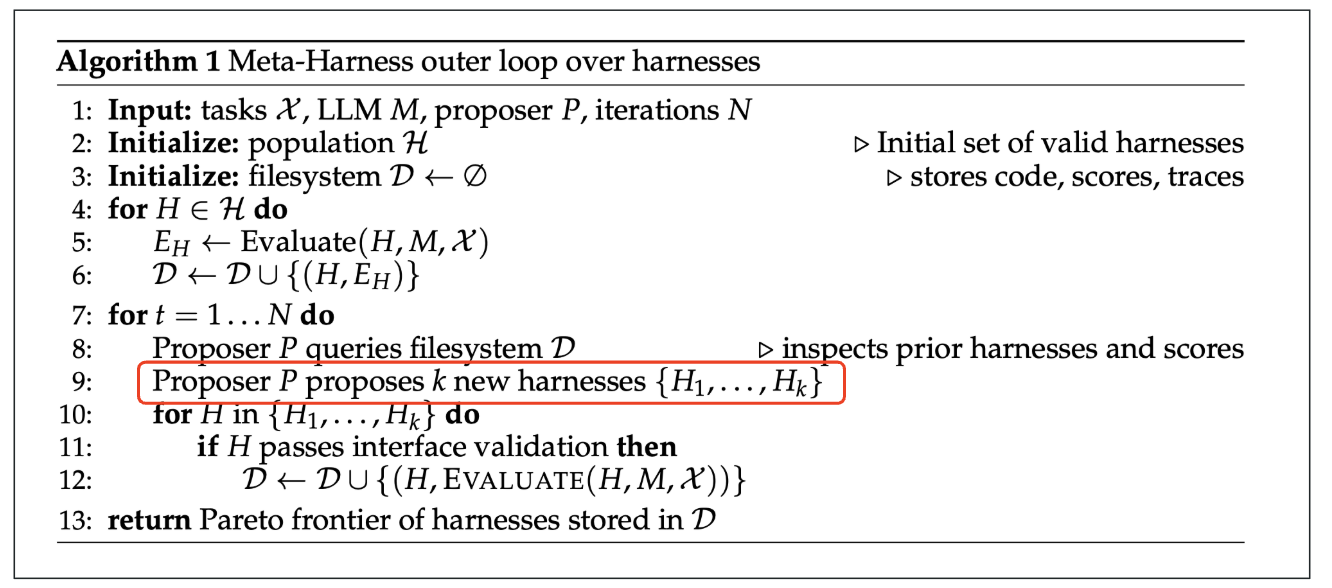
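To illustrate the difference between compressed feedback and full access discussed in this section, here is a hedged sketch. The directory layout (`run_000/harness.py`, `score.json`, `trace.log`) and the function names are my own assumptions for illustration; the paper's actual file structure may differ.

```python
import json
from pathlib import Path
from typing import List


def save_run(root: Path, run_id: int, source: str, score: float, trace: List[str]) -> Path:
    """Write one candidate's code, score, and raw trace to its own directory."""
    run_dir = root / f"run_{run_id:03d}"
    run_dir.mkdir(parents=True, exist_ok=True)
    (run_dir / "harness.py").write_text(source)
    (run_dir / "score.json").write_text(json.dumps({"score": score}))
    (run_dir / "trace.log").write_text("\n".join(trace))
    return run_dir


def summary_view(root: Path) -> str:
    """Compressed feedback: only the best score survives."""
    scores = [
        json.loads(path.read_text())["score"]
        for path in root.glob("run_*/score.json")
    ]
    return f"best score so far: {max(scores)}"


def full_view(root: Path) -> List[Path]:
    """Uncompressed feedback: every file from every run stays inspectable."""
    return sorted(path for path in root.rglob("*") if path.is_file())
```

With `summary_view`, a proposer learns that one run scored higher but not why an earlier run failed. With `full_view`, it can open the failing run's `trace.log` and read the reason directly.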

3. The Emergence of Claude Code

The proposer plays a central role in "Meta-Harness." Let's look at the details through pseudo-code, where P represents the proposer. In the section outlined in red, the proposer creates a new harness.

Pseudo-code

In this context, the proposer is Claude Code. In other words, each new harness draws on the capabilities of Claude Code. Claude Code is already being used in many fields, and here it again takes a leading role, which is truly impressive. It suggests that AI agents like Claude Code will increasingly sit at the core of future AI research. We really are at the cutting edge.

Conclusion

As we have seen, giving Claude Code maximum access to information enables it to construct high-performance harnesses. Detailed tuning is still necessary, of course, so I highly recommend reading the full paper.

At ToshiStats, we will continue to cover harness design, which is the key to improving AI agent accuracy. Stay tuned!

You can enjoy our video news ToshiStats AI Weekly Review from this link, too!

1) Meta-Harness: End-to-End Optimization of Model Harnesses, Yoonho Lee, Roshen Nair, Qizheng Zhang, Kangwook Lee, Omar Khattab, Chelsea Finn, Mar 30, 2026

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.