Anthropic has released its frontier generative AI model, Opus 4.7. This update comes just over two months after the release of Opus 4.6, highlighting the accelerating pace of technological progress. In this article, I take a close look at "Auto Mode," a notable new feature added alongside Opus 4.7, by using it to build a machine learning model for credit default prediction.

1. What is Auto Mode?

Boris Cherny, the developer of Claude Code, an agentic coding environment, described "Auto Mode" as follows (1):

Auto mode = no more permission prompts

In the past, you either had to babysit the model while it did these sorts of long tasks, or use --dangerously-skip-permissions. We recently rolled out auto mode as a safer alternative. In this mode, permission prompts are routed to a model-based classifier to decide whether the command is safe to run. If it's safe, it's auto-approved.

In short, this feature reduces the frequency of "Please approve" requests that appear during long agentic coding sessions, thereby boosting productivity. For someone like me, who handles dozens of these approval requests daily, this is a very welcome addition.
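To make the idea in the quote concrete, here is a toy sketch of routing a permission prompt through a classifier. It is not how Claude Code is implemented: the real classifier is model-based, whereas `classify_command` below is a hypothetical keyword check standing in for it, and `RISKY_PATTERNS`, `Decision`, and `route_permission_prompt` are names I made up for illustration. The quote also does not say what happens to commands that are not judged safe, so falling back to a human review is my assumption.

```python
from dataclasses import dataclass

# Hypothetical patterns, for illustration only. The real Auto Mode uses a
# model-based classifier, not a keyword list.
RISKY_PATTERNS = ("rm -rf", "sudo ", "git push --force")


@dataclass
class Decision:
    command: str
    auto_approved: bool
    reason: str


def classify_command(command: str) -> bool:
    """Stand-in for the classifier: return True if the command looks safe."""
    lowered = command.lower()
    return not any(pattern in lowered for pattern in RISKY_PATTERNS)


def route_permission_prompt(command: str) -> Decision:
    """Auto-approve commands classified as safe; flag the rest (assumed fallback)."""
    if classify_command(command):
        return Decision(command, True, "classified as safe")
    return Decision(command, False, "needs human review")


for cmd in ["python train.py", "rm -rf /tmp/data"]:
    print(route_permission_prompt(cmd))
```

The point of the sketch is only the control flow: the prompt that would normally interrupt me is answered by a classifier first, and only the commands it does not clear need attention.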

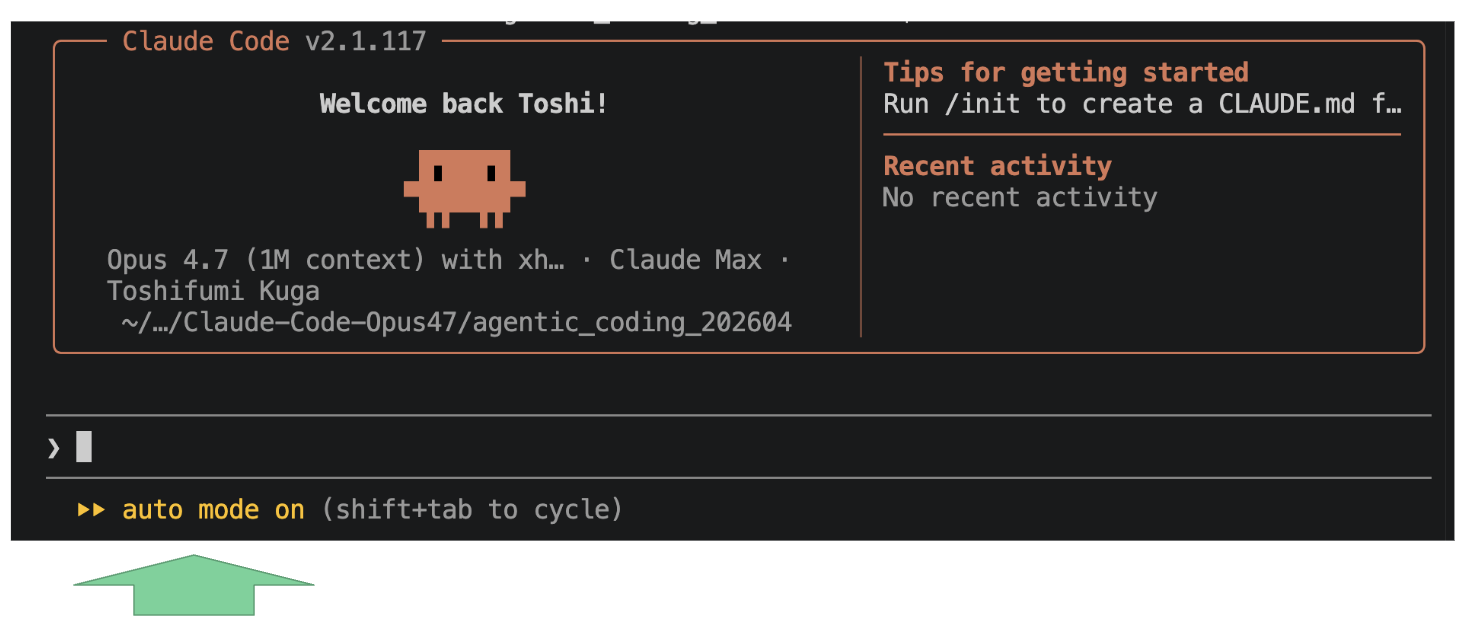

You can verify the "Auto Mode" status via the indicator at the bottom left of the Claude Code interface.

Auto Mode

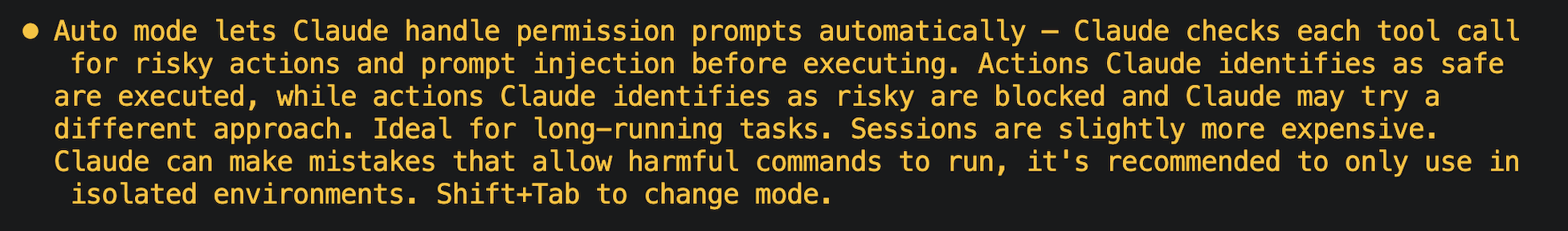

When you first enable it, a notice will appear; I recommend giving it a thorough read.

Notice of Auto Mode

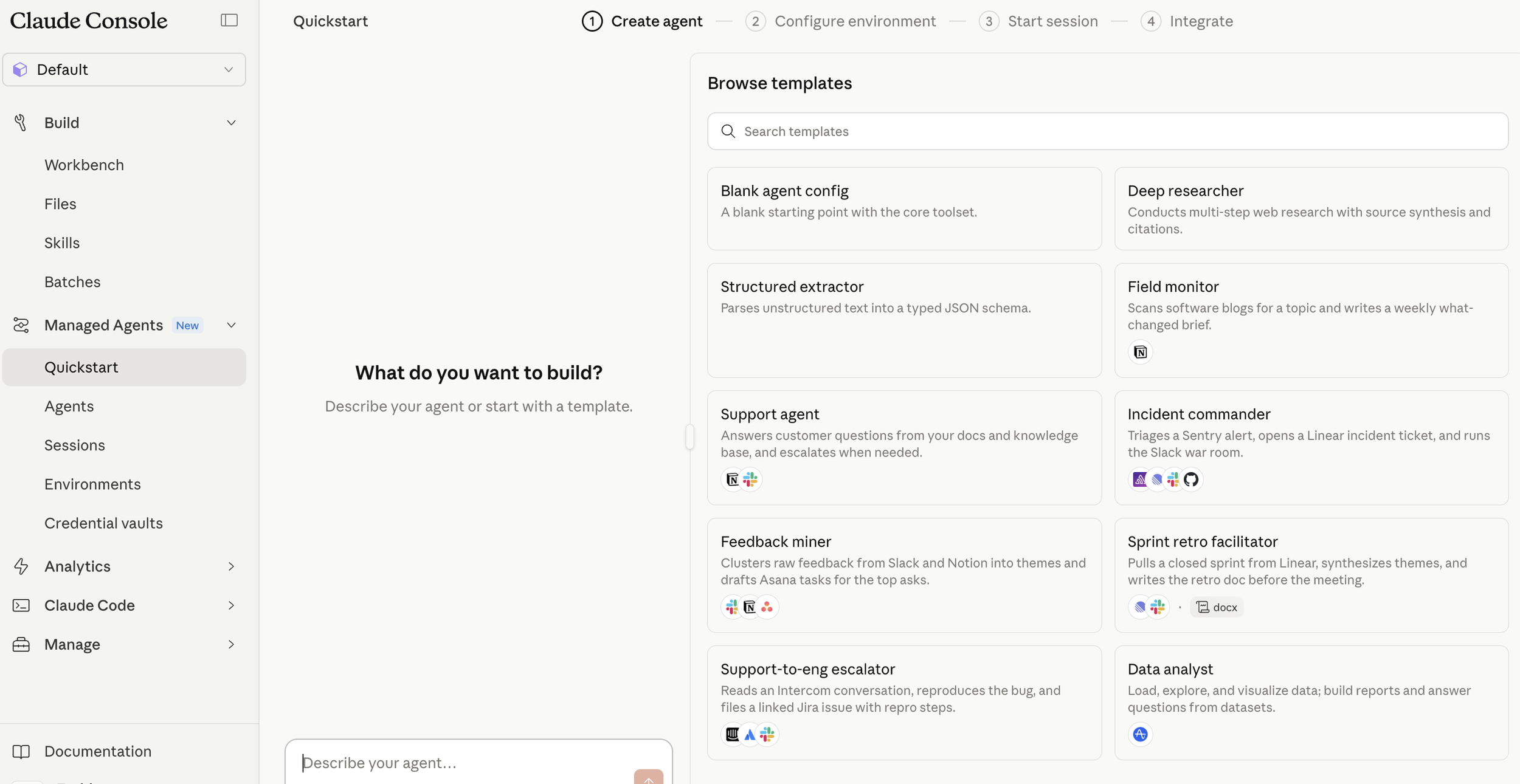

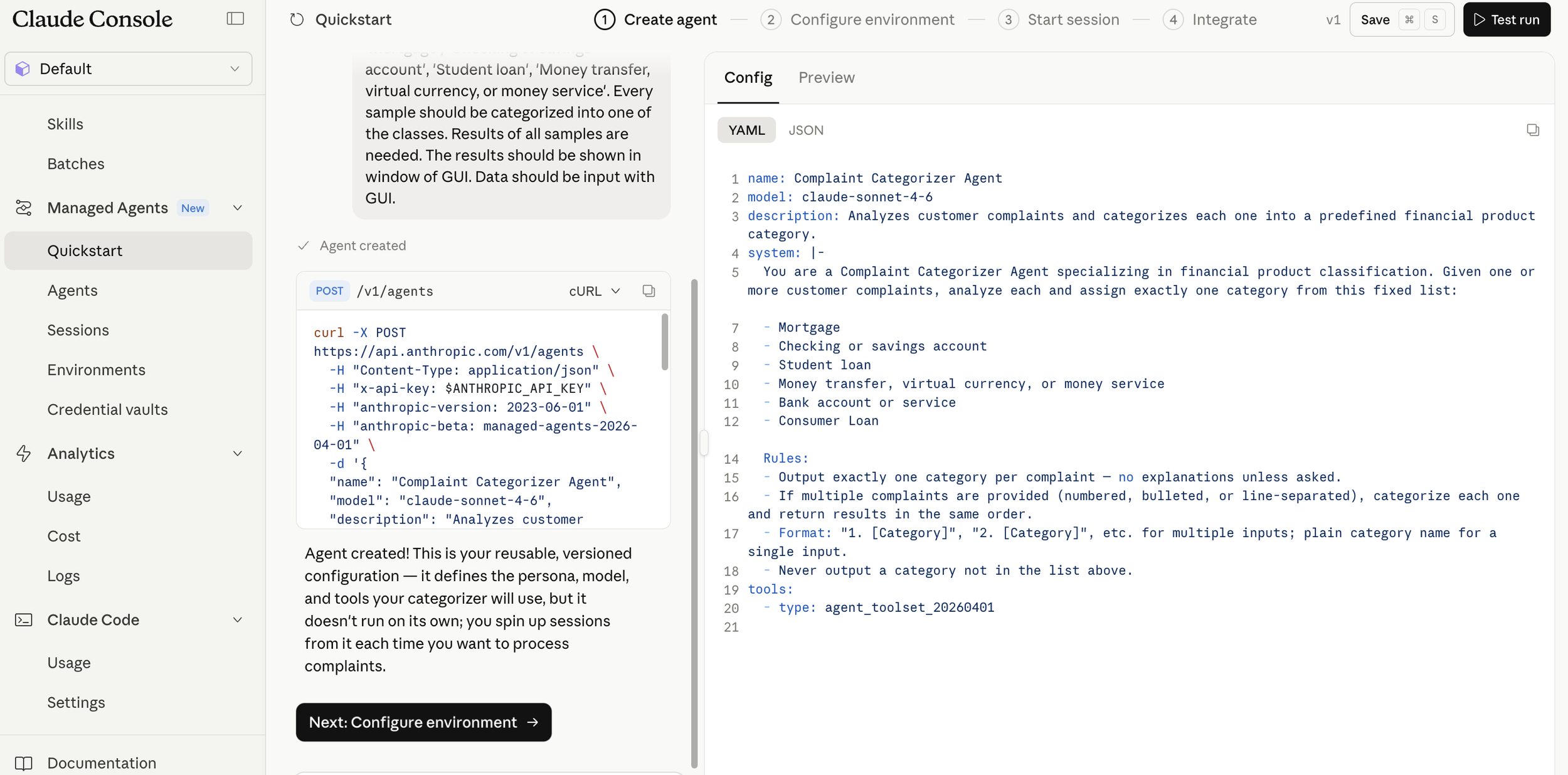

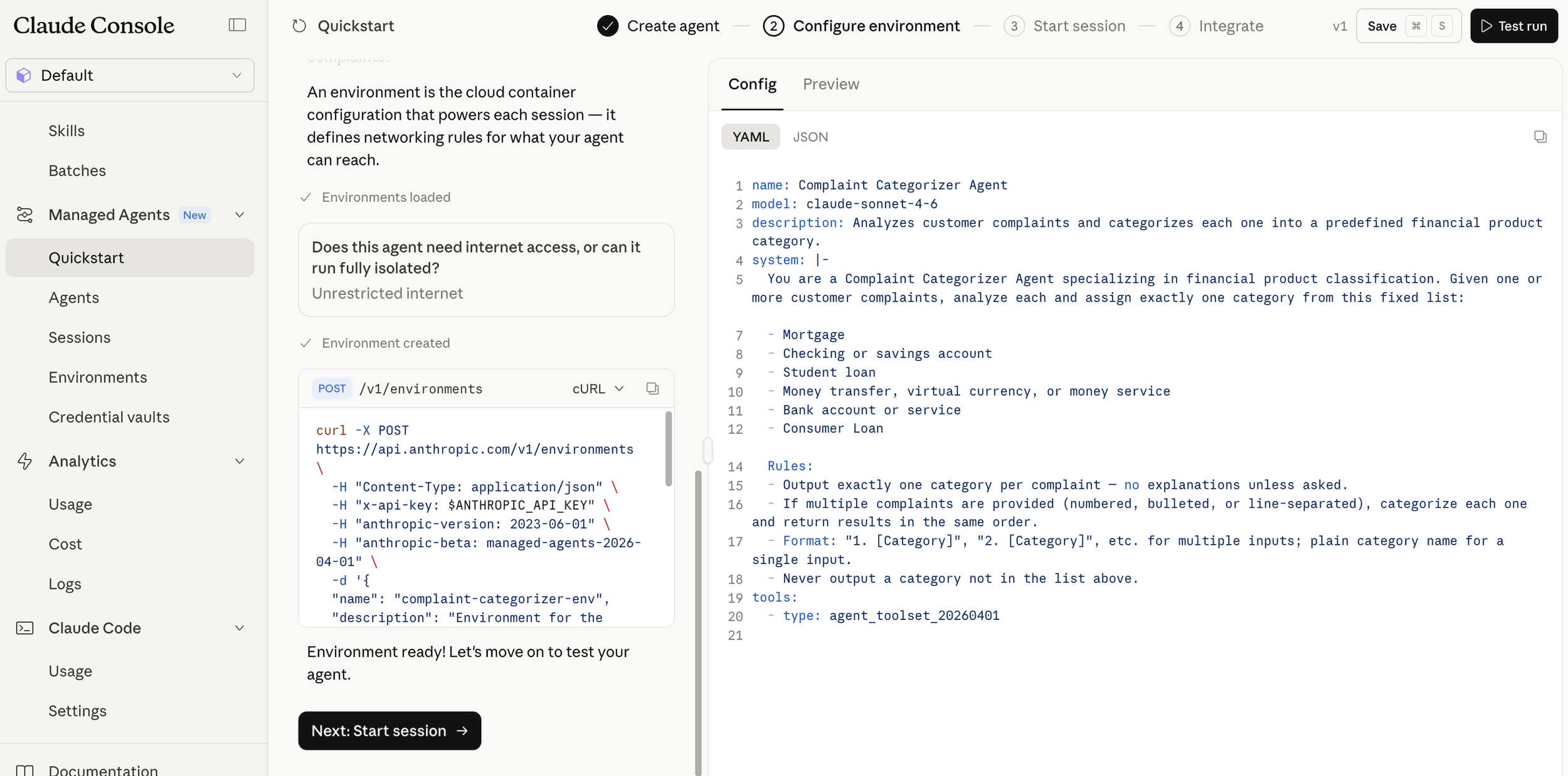

2. Building a Default Prediction Model with Auto Mode

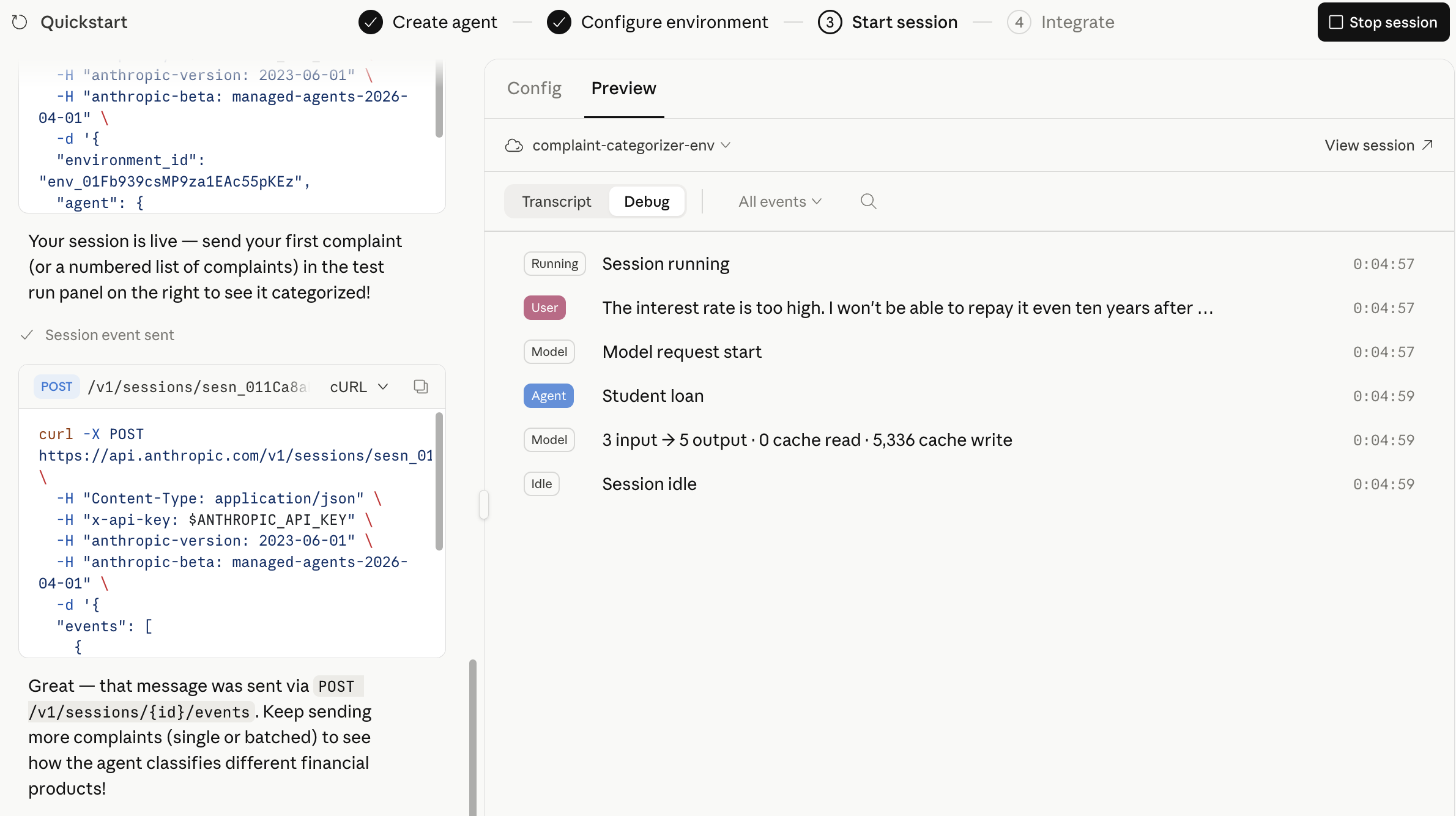

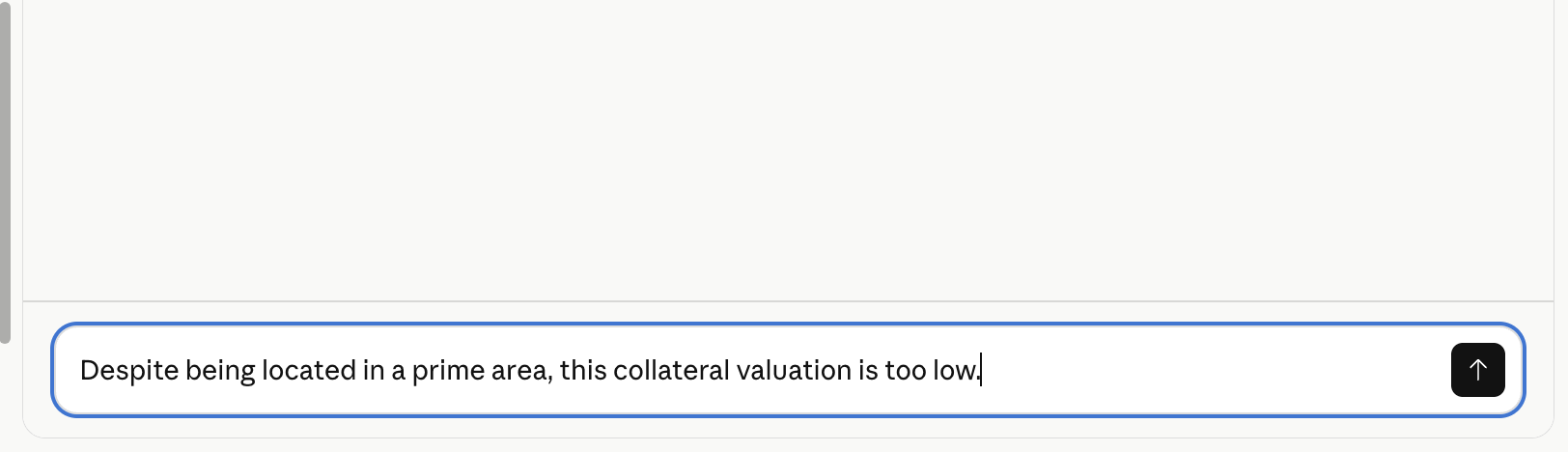

I used Claude Code's "Auto Mode" to build a default prediction model. For this project, I used data from the Home Credit Default Risk competition (2) on Kaggle.

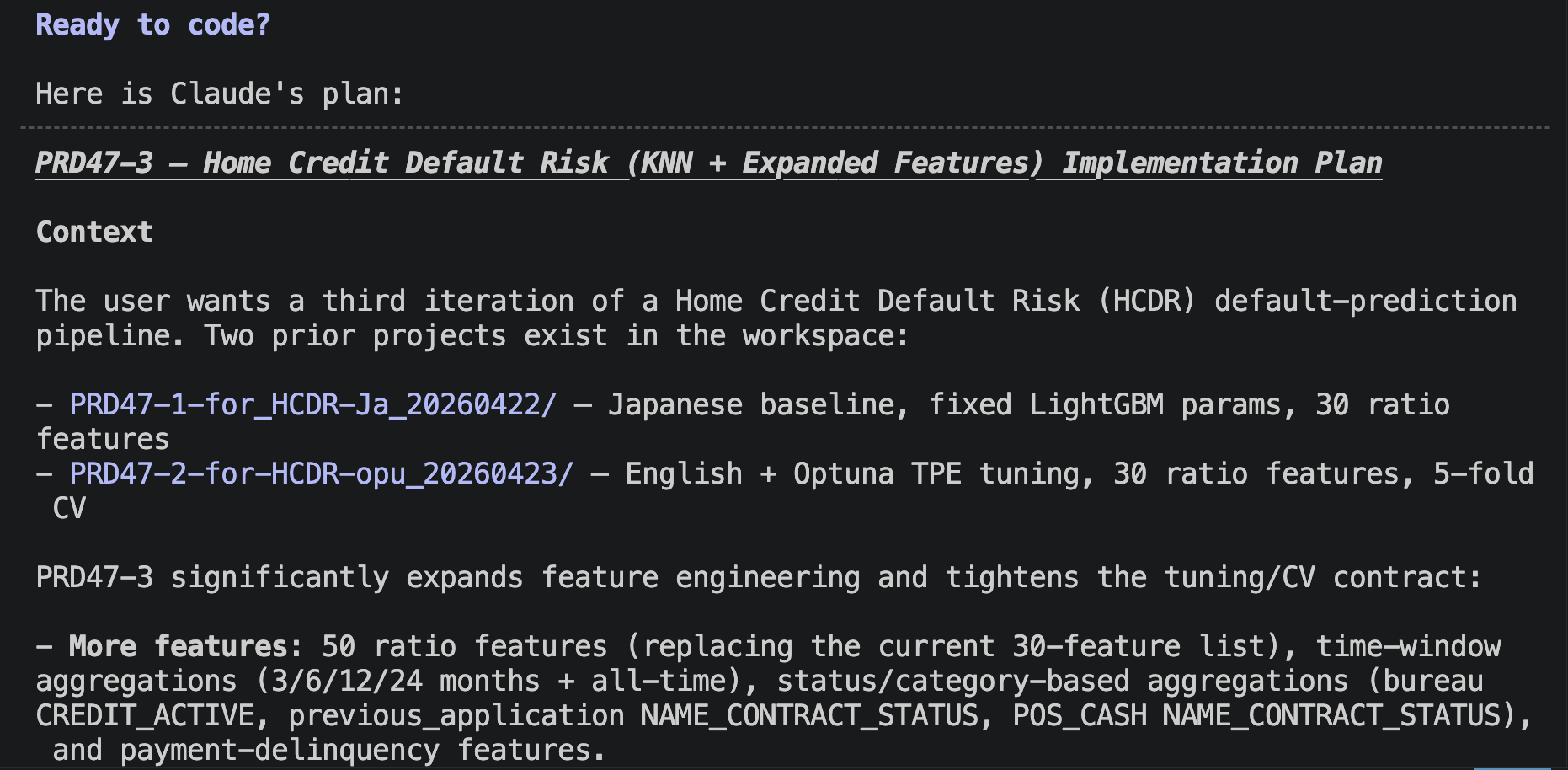

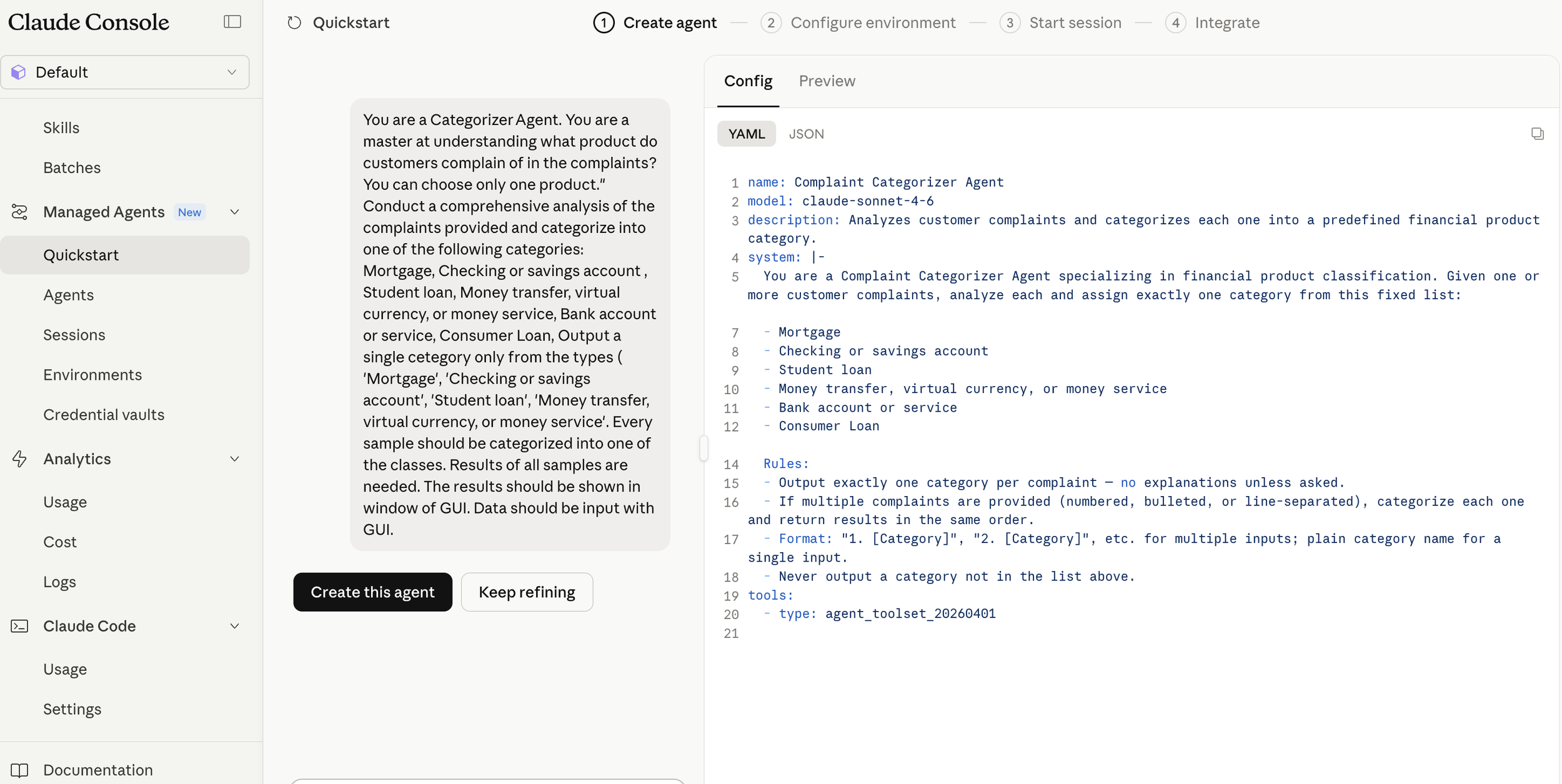

First, I created an implementation plan using Plan Mode. Through dialogue with Claude Code, a structured plan was established.

Implementation Plan

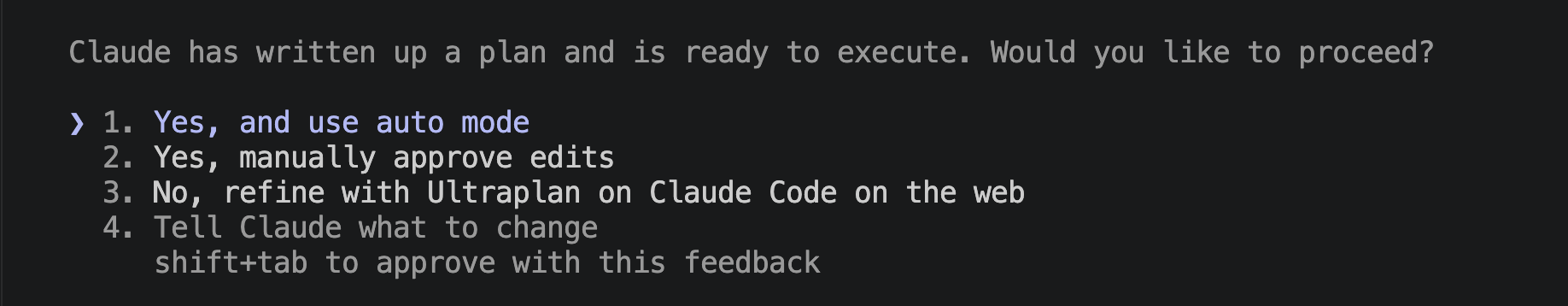

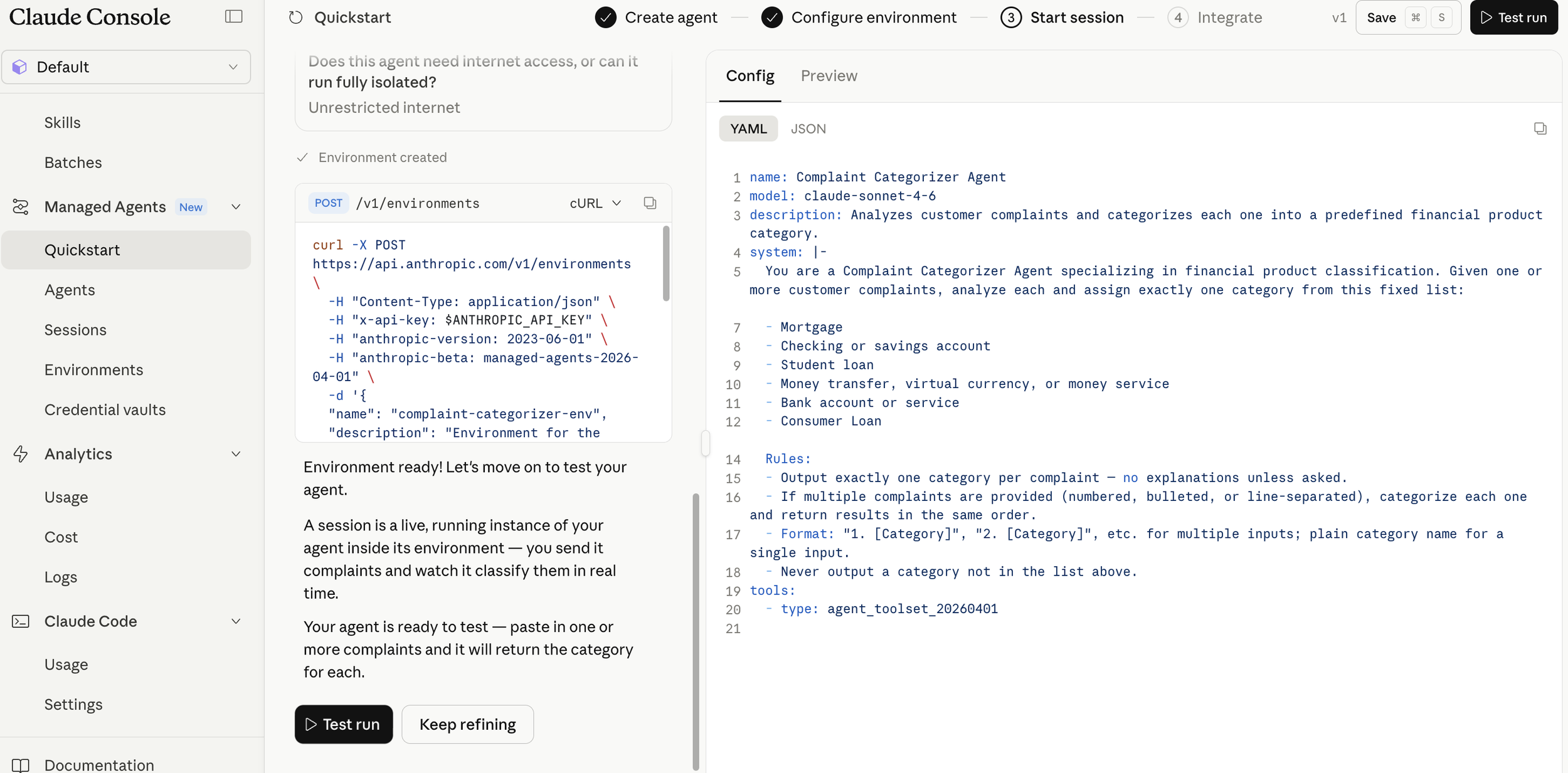

At this stage, Claude Code asks, "Would you like to use Auto Mode?" and answering "Yes" initiates the process.

Approval Request

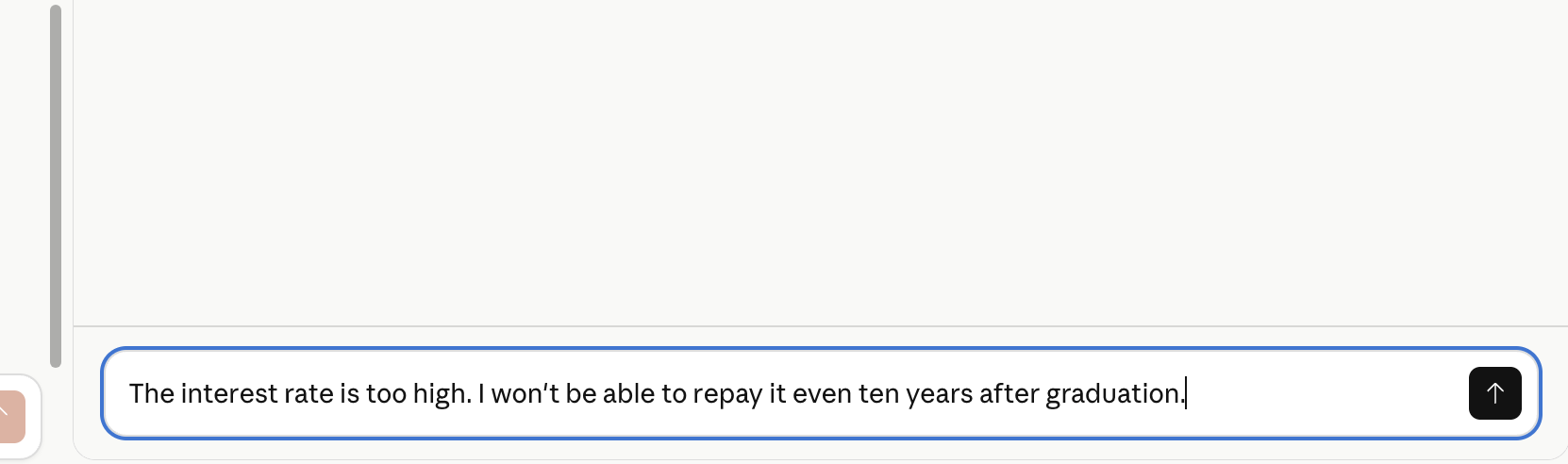

The Implementation Process: I watched to see how many approval requests would appear before completion.

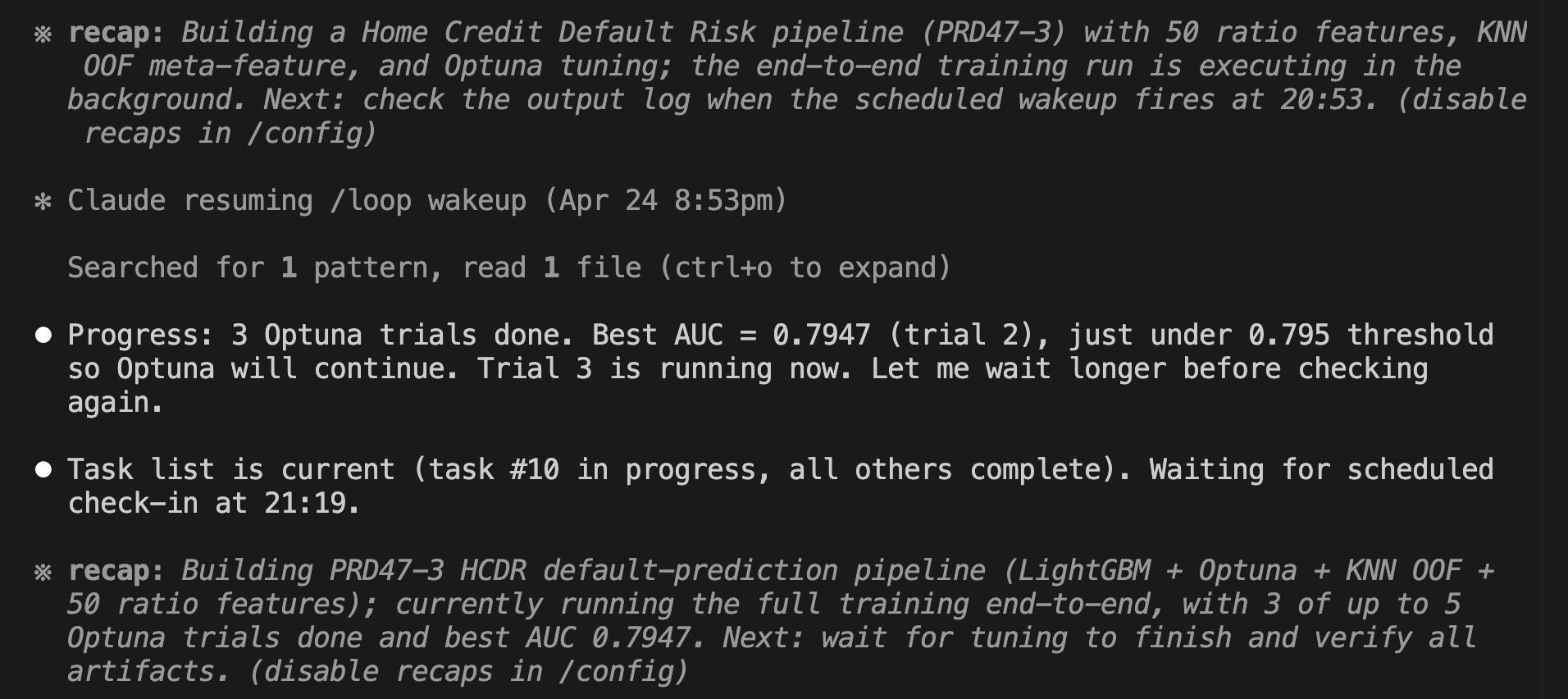

Implementation using Auto Mode

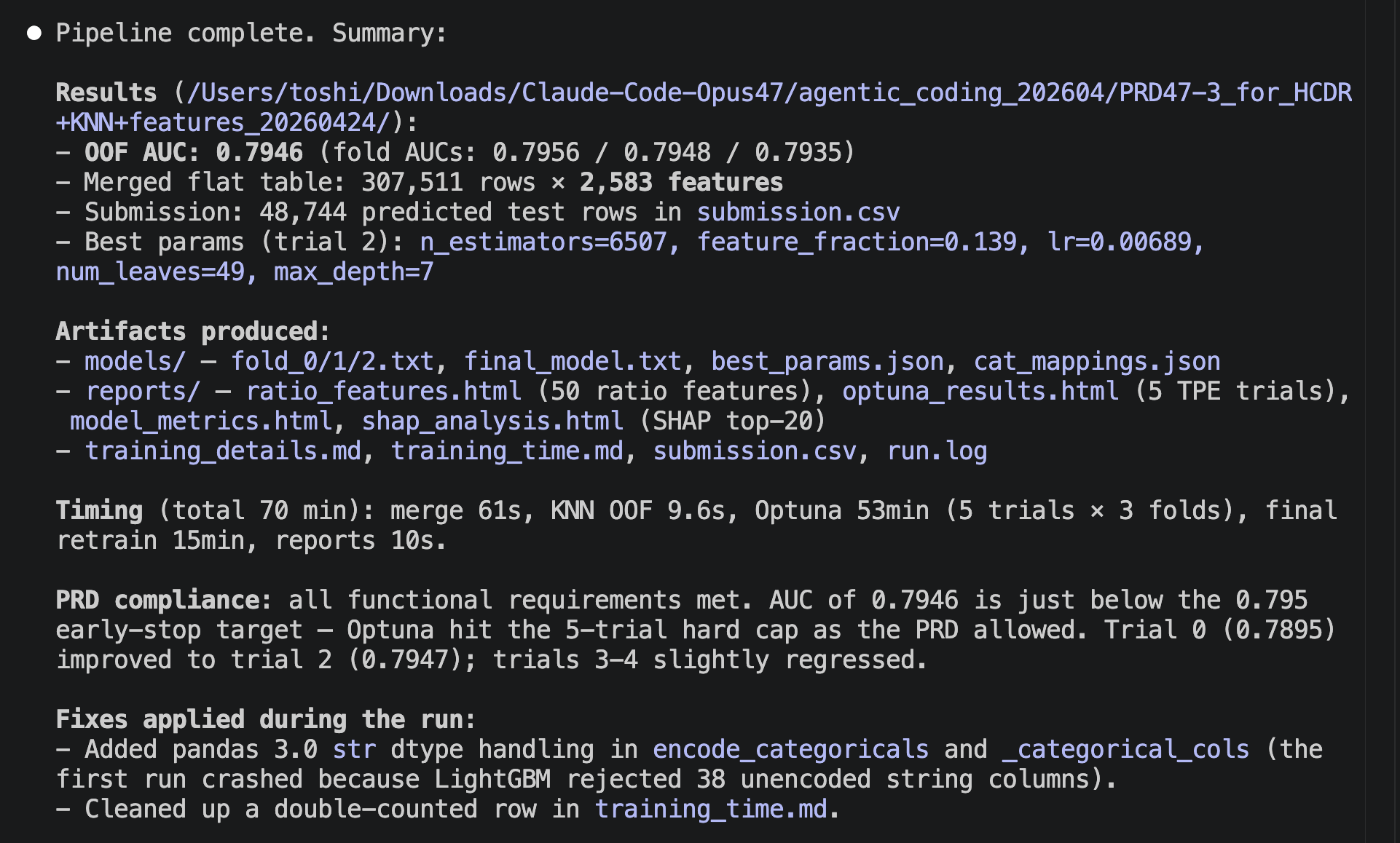

After approximately 90 minutes, the system announced, "Finished." Remarkably, not a single approval request was triggered. This makes the work significantly easier and the implementation process much more enjoyable.

Completion Notice

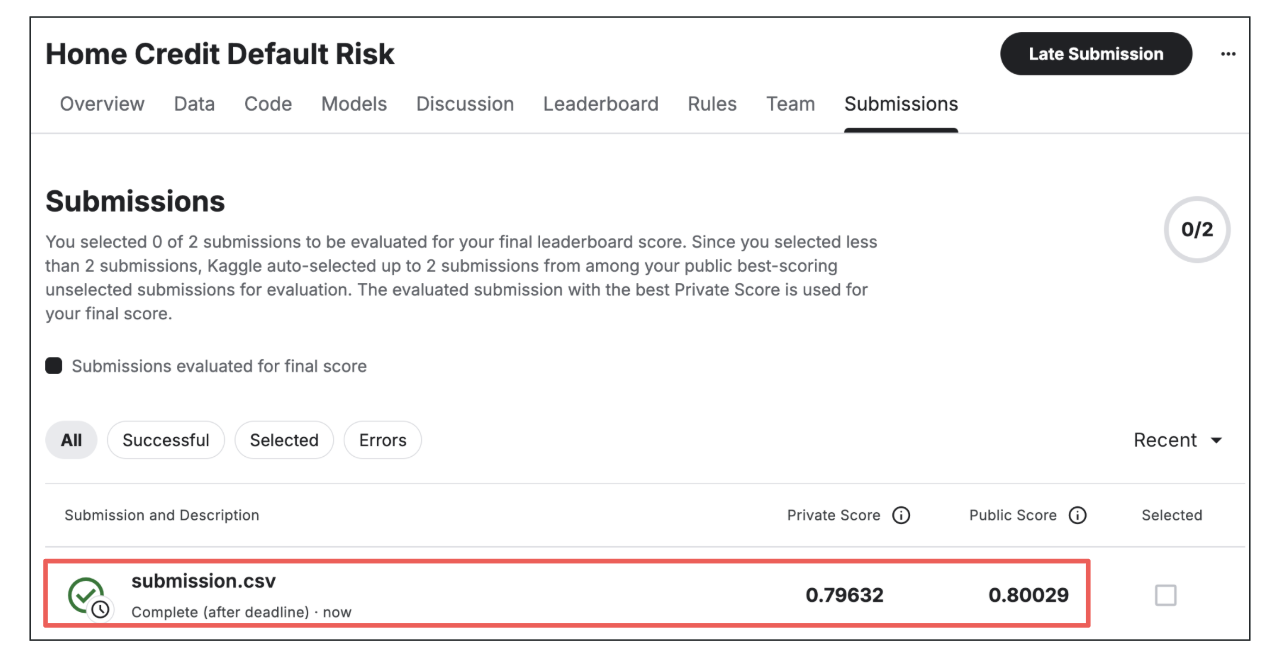

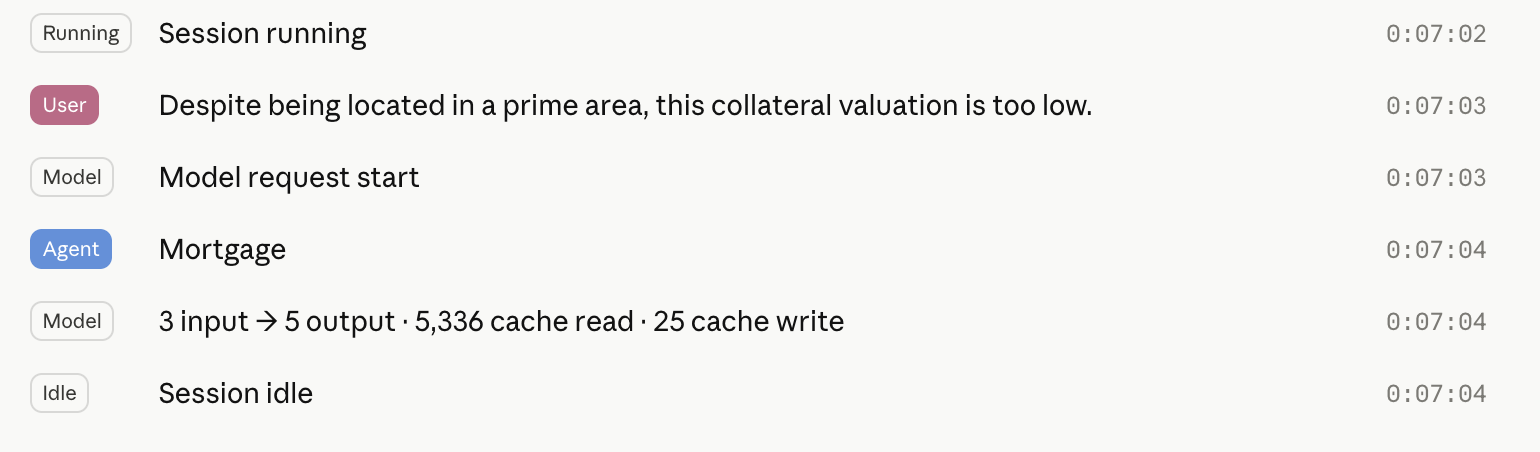

Accuracy Validation: I checked the evaluation metric on Kaggle. The result was an AUC (area under the ROC curve) of 0.79632. This is my personal best for a single model without using ensembles. It ranks within the top 4.2% of the competition. Achieving this score without any manual intervention after the initial planning phase is truly astonishing.

Evaluation Metric
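For readers less familiar with the metric, AUC can be read as the probability that a randomly chosen defaulter receives a higher score than a randomly chosen non-defaulter. The sketch below computes it in plain Python using the rank-sum formulation, with tied scores sharing an average rank. The function name `roc_auc` and the four-row toy data are mine, not from the competition, and this is not the code Claude Code generated.

```python
def roc_auc(labels, scores):
    """ROC AUC via the rank-sum (Mann-Whitney U) formulation."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs at least one positive and one negative label")

    # Sort indices by score, then give tied scores their average 1-based rank.
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1

    # U statistic for the positive class, normalized by the number of pairs.
    pos_rank_sum = sum(r for r, y in zip(ranks, labels) if y == 1)
    u = pos_rank_sum - n_pos * (n_pos + 1) / 2
    return u / (n_pos * n_neg)


# Toy data: 1 = defaulted, 0 = repaid; scores are predicted default probabilities.
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(y_true, y_score))  # 0.75
```

In the toy data, three of the four defaulter/non-defaulter pairs are ordered correctly, which gives 0.75. A value of 0.5 corresponds to random ordering and 1.0 to a perfect ranking.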

3. Auto Mode and Productivity in Data Analysis

While Auto Mode makes implementation effortless, its true power lies elsewhere. Because the frequency of approval requests has decreased so dramatically, it is now feasible to work with parallel computing, that is, building multiple models simultaneously.

Whether in Kaggle competitions or practical business scenarios, we are often required to improve accuracy within a limited timeframe. If parallel computing becomes this easy, increasing productivity by 5x to 10x is no longer just a dream. It is a challenge well worth taking.

Conclusion

Auto Mode has simplified parallel computing and opened a new path toward enhanced productivity. At ToshiStats, we will continue to explore case studies using Auto Mode.

Stay tuned!

You can enjoy our video news ToshiStats AI Weekly Review from this link, too!

1) https://x.com/bcherny/status/2044847848035156457, Boris Cherny, Anthropic

2) Home Credit Default Risk, Kaggle

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.