Every week brings a new batch of generative AI updates, and the pace looks set to keep accelerating. Many people may feel lost, wondering how exactly they should navigate these changes. In this post, I would like to draw some hints from Anthropic's technical blog (1).

1. Experiments at Anthropic

Mr. Prithvi Rajasekaran from the Labs team has provided a detailed report on several implementation experiments.

The experiments covered three projects: front-end design development, full-stack 2D retro game development, and Digital Audio Workstation (DAW) development. Here I would like to focus on the full-stack 2D retro game. Across these builds, the team observed cases where long-running agentic coding failed. A common factor was that the AI often overrated its own incomplete work, judging an unfinished implementation to be good enough. They concluded that long-running agentic coding could not deliver satisfactory results unless this was fixed.

2. The Key Technology for Success

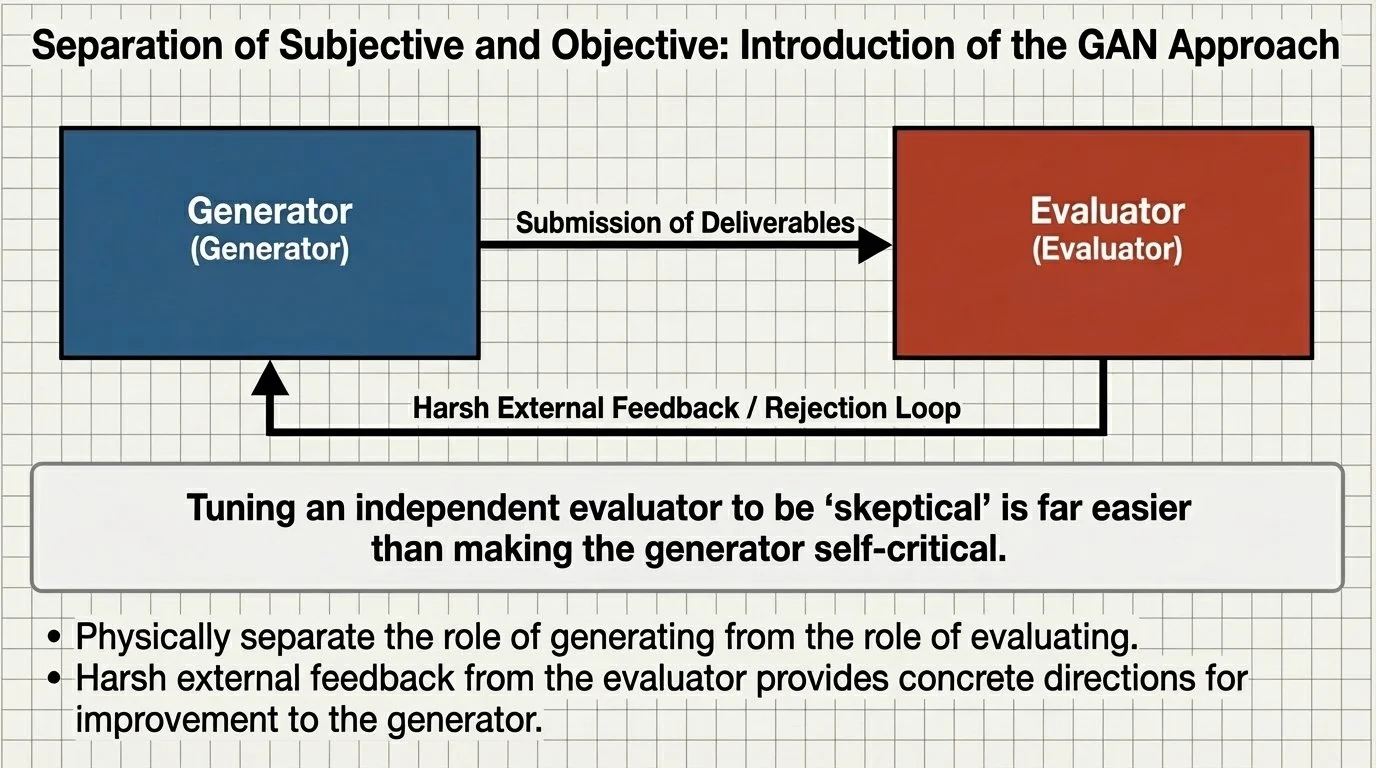

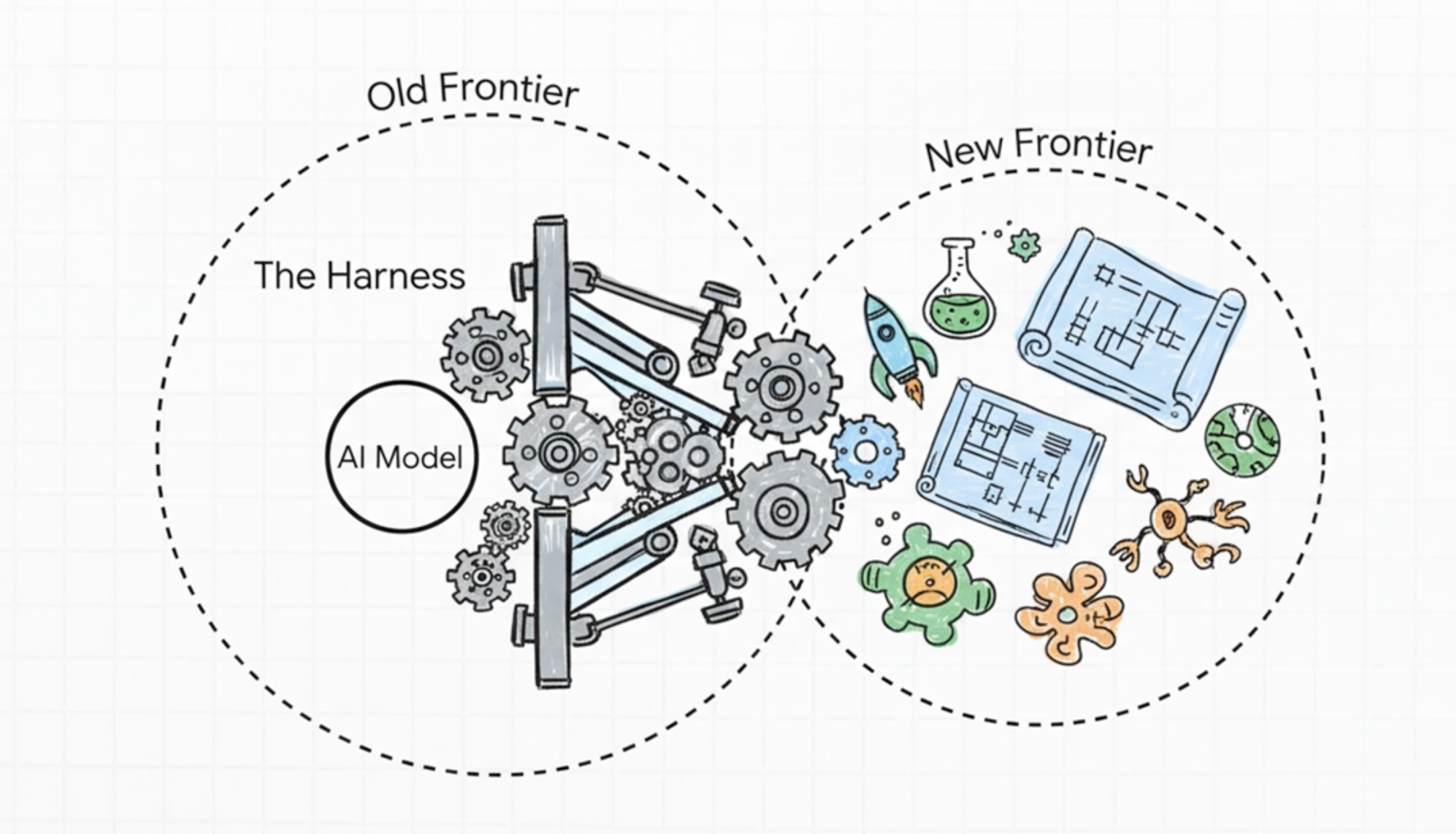

To address this, they introduced a "harness" design that pairs a Generator with an Evaluator. The idea was reportedly inspired by Generative Adversarial Networks (GANs), a well-known technique in image generation. The key point is that the model that produces the work does not evaluate it; a separate Evaluator does. The "New Harness Design" diagram shows the details.

New Harness Design

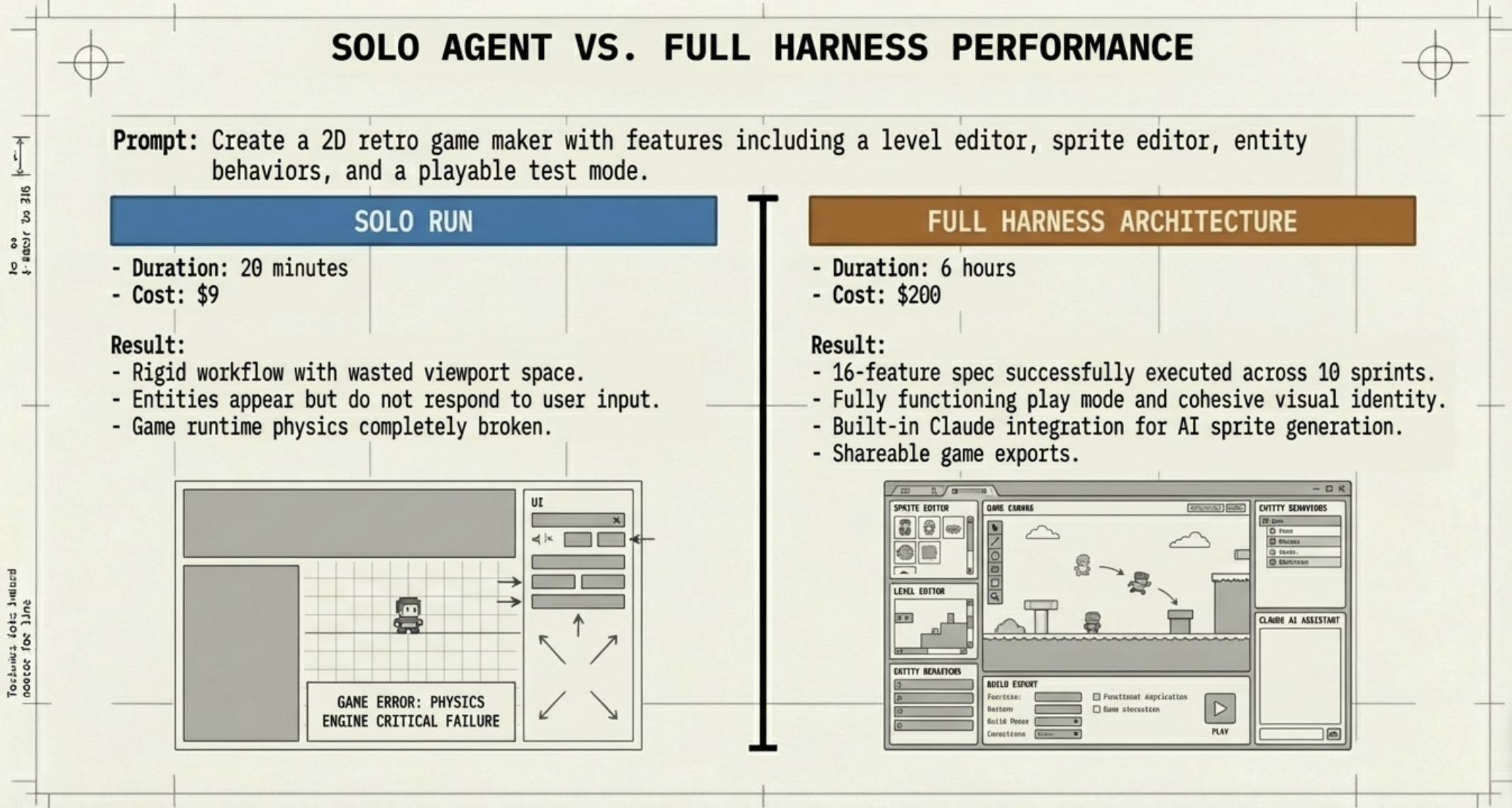

A loop was established between the Generator and the Evaluator, in which flawed implementations were subjected to rigorous criticism and sent back for revision. Naturally, this took a significant amount of time, and costs rose roughly 20-fold. However, quality improved by more than enough to justify the extra cost, so the return on investment was clearly sufficient.

Performance Comparison: Single Agent vs. Full Harness
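The Generator and Evaluator loop described in this section can be sketched roughly as follows. This is a minimal illustration, not Anthropic's implementation: `run_harness`, `Review`, and the two stub agents are hypothetical names, and in a real harness each stub would be replaced by a call to a model.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Review:
    passed: bool
    feedback: str


def run_harness(
    task: str,
    generate: Callable[[str, str], str],
    evaluate: Callable[[str, str], Review],
    max_rounds: int = 5,
) -> Tuple[str, List[Review]]:
    """Alternate generation and independent evaluation until the work passes."""
    feedback = ""
    artifact = ""
    history: List[Review] = []
    for _ in range(max_rounds):
        artifact = generate(task, feedback)  # Generator builds or revises
        review = evaluate(task, artifact)    # a separate Evaluator judges it
        history.append(review)
        if review.passed:
            break
        feedback = review.feedback           # criticism feeds the next round
    return artifact, history


# Hypothetical stubs standing in for real model calls.
REQUIRED = ["movement", "collision", "scoring"]


def stub_generate(task: str, feedback: str) -> str:
    features = ["movement"]  # the first draft is incomplete
    if feedback:
        # Add whatever the Evaluator reported as missing.
        features += feedback.split(": ", 1)[1].split(", ")
    return ", ".join(features)


def stub_evaluate(task: str, artifact: str) -> Review:
    built = artifact.split(", ")
    missing = [f for f in REQUIRED if f not in built]
    if missing:
        return Review(False, "missing: " + ", ".join(missing))
    return Review(True, "")


if __name__ == "__main__":
    result, reviews = run_harness("2D retro game", stub_generate, stub_evaluate)
    print(result, len(reviews))
```

The `max_rounds` cap is an assumption added here to bound time and cost, since each extra round means another Generator call and another Evaluator call.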

3. Gains from the Update from Opus 4.5 to 4.6

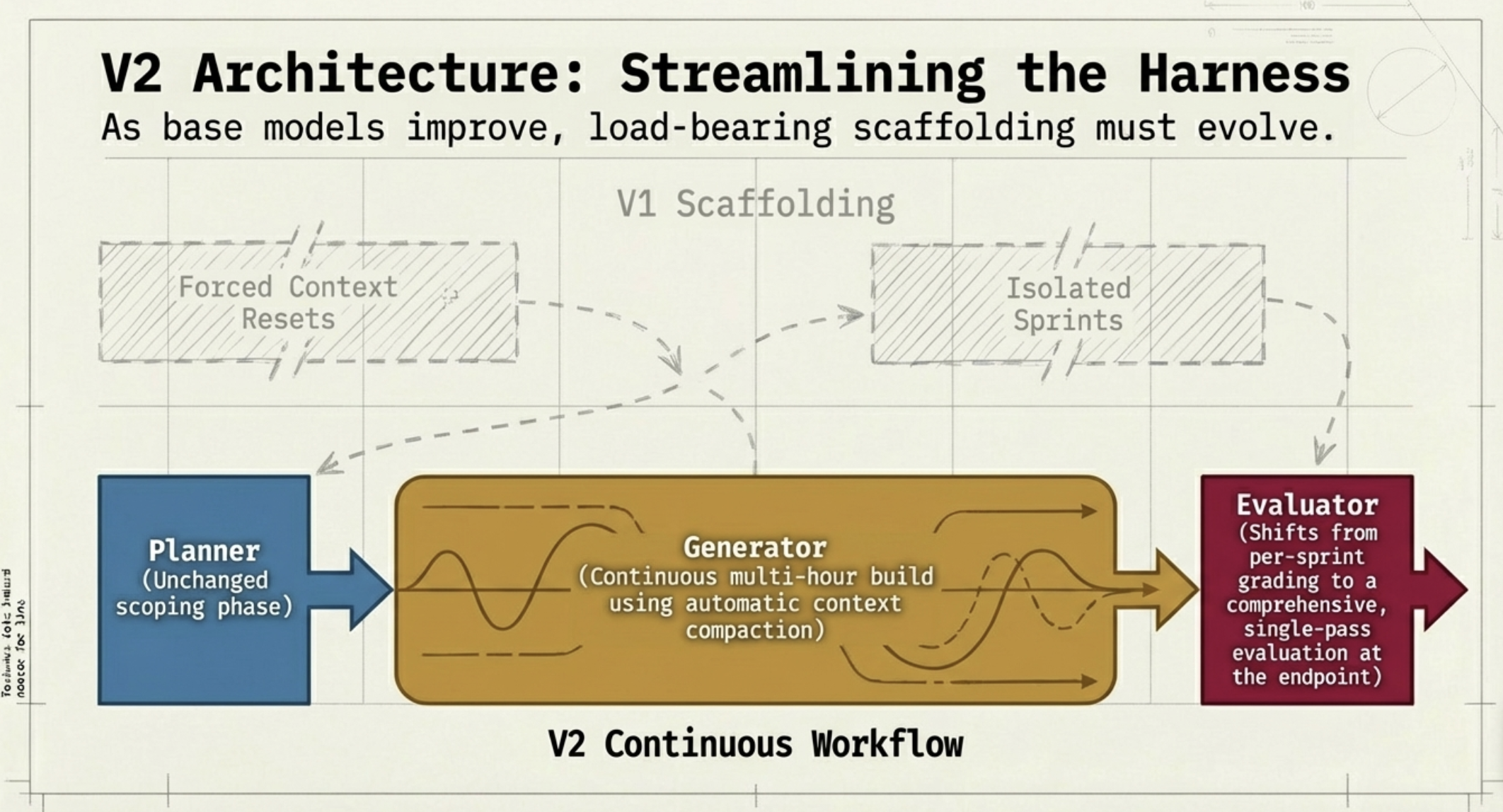

While the AI engineers were still refining the harness, Opus was updated from version 4.5 to 4.6. The performance improvement in Opus 4.6 was remarkable, and part of the harness that had been necessary for Opus 4.5 became redundant, so the implementation could be simplified. Fantastic! The "Harness Design with Opus 4.6" chart shows the details: in the V2 harness, a portion of V1 has indeed been removed.

Harness Design with Opus 4.6
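As a rough illustration of why a harness can shrink when the model improves, the sketch below treats a harness as an ordered list of stages. This article does not specify which part of V1 was removed, so every stage name here, including `extra_scaffolding`, is a hypothetical placeholder.

```python
from typing import Callable, List

# A stage takes the trace of work done so far and returns an extended trace.
Stage = Callable[[List[str]], List[str]]


def extra_scaffolding(trace: List[str]) -> List[str]:
    # Hypothetical stage that compensates for a weaker baseline model.
    return trace + ["extra_scaffolding"]


def generate(trace: List[str]) -> List[str]:
    return trace + ["generate"]


def evaluate(trace: List[str]) -> List[str]:
    return trace + ["evaluate"]


def run(stages: List[Stage]) -> List[str]:
    """Run each stage in order and return the names of the stages executed."""
    trace: List[str] = []
    for stage in stages:
        trace = stage(trace)
    return trace


HARNESS_V1: List[Stage] = [extra_scaffolding, generate, evaluate]
HARNESS_V2: List[Stage] = [generate, evaluate]  # redundant stage dropped

if __name__ == "__main__":
    print(run(HARNESS_V1))
    print(run(HARNESS_V2))
```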

Based on this experience, the blog describes the following lessons:

“the better the models get, the more space there is to develop harnesses that can achieve complex tasks beyond what the model can do at baseline.”

“From this work, my conviction is that the space of interesting harness combinations doesn't shrink as models improve. Instead, it moves, and the interesting work for AI engineers is to keep finding the next novel combination.”

In other words, I take this to mean: "As generative AI becomes more capable, a standalone baseline model can solve more on its own, which makes parts of existing harnesses unnecessary. At the same time, a stronger baseline brings previously unreachable tasks within range of a better harness design." If new models keep expanding what we can do, the opportunities for harness design will grow as well, and it looks like we will be kept quite busy.

What did you think? As the capabilities of generative AI rise, it is expected that new harness designs will be required to push those capabilities to their limits. It seems there will be plenty to do, at least until AGI is realized. ToshiStats will continue to feature harness designs, which are the key to improving the accuracy of AI agents. Stay tuned!


(1) Harness design for long-running application development, Engineering at Anthropic, Mar 24, 2026

Notice: This is for educational purposes only. ToshiStats Co., Ltd. and I do not accept any responsibility or liability for loss or damage occasioned to any person or property through using materials, instructions, methods, algorithms or ideas contained herein, or acting or refraining from acting as a result of such use. ToshiStats Co., Ltd. and I expressly disclaim all implied warranties, including merchantability or fitness for any particular purpose. There will be no duty on ToshiStats Co., Ltd. and me to correct any errors or defects in the report, the codes and the software.